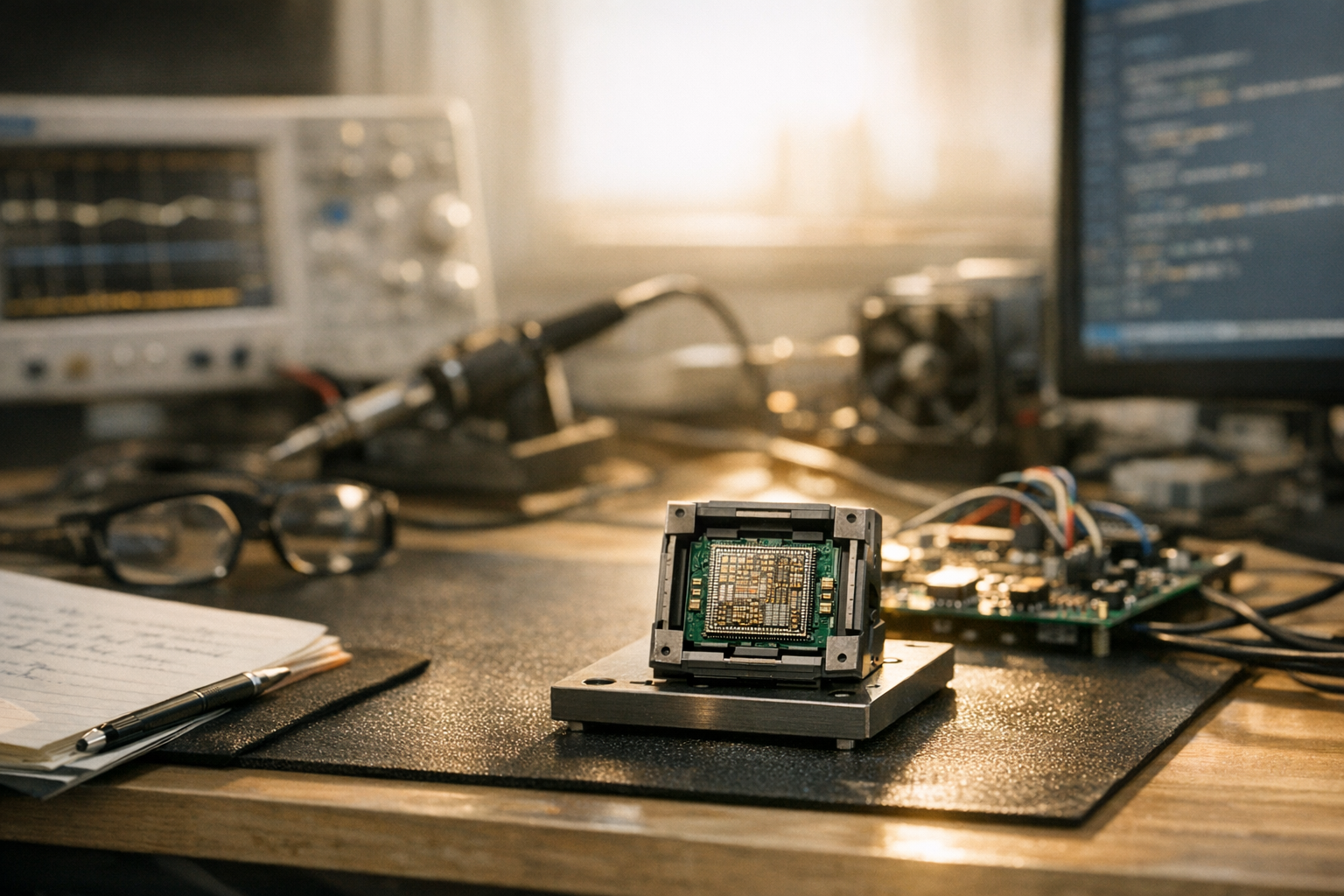

AI agents can now design a RISC-V CPU core from scratch, and honestly, the semiconductor industry hasn’t seen a disruption this significant since EDA tools automated schematic capture in the ’80s. Work that used to take a team of engineers several months can now happen in hours for simple cores. Autonomous AI systems can generate, verify, and optimize complete processor designs with minimal human intervention.

This isn’t science fiction anymore.

Research teams at universities and major chip companies have already shown working RISC-V cores designed entirely by AI agents. These designs pass standard verification suites and sometimes rival human-crafted alternatives in power efficiency. I’ve been watching this space closely for years, and the pace of progress still catches me off guard.

How an AI Agent Designs a RISC-V CPU Core from Scratch

RTL Generation and Verification Automation in AI-Driven Design

LLM-Based vs. Symbolic Reasoning: Comparing AI Approaches

Case Studies: AI Agents in Real Chip Design Pipelines

Tools, Workflows, and Practical Steps for AI-Driven RISC-V Design

How an AI Agent Designs a RISC-V CPU Core from Scratch

To understand how an AI agent designs a RISC-V CPU core from scratch, you need to understand what the traditional chip design workflow actually looks like — because the contrast is striking.

Traditionally, engineers write Register Transfer Level (RTL) code in languages like Verilog or VHDL, then simulate, verify, and synthesize that code into physical circuits. Each step demands deep, hard-won expertise. It’s slow, expensive, and brutally unforgiving of mistakes.

AI agents automate this entire pipeline. Specifically, they break the process into discrete, manageable tasks:

1. Specification parsing — The agent reads the RISC-V ISA specification and extracts instruction formats, opcodes, and behavioral requirements.

2. RTL generation — Using large language models or reinforcement learning, the agent produces synthesizable Verilog or SystemVerilog code.

3. Functional verification — The agent runs testbenches against the generated design, checking correctness instruction by instruction.

4. Design optimization — The agent iterates on timing, area, and power metrics until targets are met.

5. Physical synthesis preparation — The agent outputs netlists ready for place-and-route tools.
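To make step 1 a little more concrete, here is a minimal sketch of what “extracting instruction formats” can boil down to for R-type instructions. The field positions and major opcodes come from the RISC-V base ISA; the names `RTypeFields`, `decode_rtype`, and `OPCODES` are my own for illustration and not part of any particular agent.

```python
from dataclasses import dataclass

# Major opcodes for a few RV32I instruction groups (bits [6:0] of the word).
OPCODES = {
    0b0110011: "OP",      # register-register ALU ops (ADD, SUB, ...)
    0b0010011: "OP-IMM",  # register-immediate ALU ops (ADDI, ...)
    0b0000011: "LOAD",
    0b0100011: "STORE",
    0b1100011: "BRANCH",
}


@dataclass(frozen=True)
class RTypeFields:
    opcode: int
    rd: int
    funct3: int
    rs1: int
    rs2: int
    funct7: int


def decode_rtype(word: int) -> RTypeFields:
    """Split a 32-bit instruction word into its R-type fields."""
    return RTypeFields(
        opcode=word & 0x7F,          # bits [6:0]
        rd=(word >> 7) & 0x1F,       # bits [11:7]
        funct3=(word >> 12) & 0x7,   # bits [14:12]
        rs1=(word >> 15) & 0x1F,     # bits [19:15]
        rs2=(word >> 20) & 0x1F,     # bits [24:20]
        funct7=(word >> 25) & 0x7F,  # bits [31:25]
    )


fields = decode_rtype(0x002081B3)  # encoding of: add x3, x1, x2
print(OPCODES[fields.opcode], fields)
```

A table like this is also a handy oracle later on: the decoder the agent writes in Verilog should agree with it field for field.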

Consequently, the entire flow from spec to silicon-ready design compresses dramatically. An AI agent that designs a RISC-V CPU core from scratch doesn’t just write code — it reasons about architecture tradeoffs, catches bugs, and refines performance on its own. This surprised me when I first dug into the research. I expected glorified autocomplete. What I found was closer to a junior engineer who never sleeps.

Why RISC-V specifically? The open-source instruction set architecture provides freely available specifications. That means AI agents can train on publicly available RISC-V implementations without licensing headaches. Moreover, the modular nature of RISC-V — with its base integer ISA and optional extensions — maps naturally to the divide-and-conquer approach AI agents excel at. It’s almost like RISC-V was designed with this use case in mind (it wasn’t, but the fit is uncanny).
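Here is a rough sketch of how that modularity could turn into a work plan. It parses a simple ISA string into a base and single-letter extensions, then maps each to design units. The unit names in `UNITS` are hypothetical, and the parser deliberately ignores multi-letter extensions, so treat it as an illustration of the divide-and-conquer idea rather than a complete ISA-string parser.

```python
import re

# Hypothetical mapping from ISA components to design units an agent might plan.
UNITS = {
    "I": ["decoder", "register_file", "alu", "load_store_unit", "branch_unit"],
    "M": ["multiplier", "divider"],
    "C": ["compressed_decoder"],
}


def parse_isa(isa: str):
    """Parse strings like 'RV32IMC' into (xlen, base, [extensions])."""
    match = re.fullmatch(r"RV(32|64)([A-Z]+)", isa.upper())
    if match is None:
        raise ValueError(f"unsupported ISA string: {isa!r}")
    xlen, letters = int(match.group(1)), match.group(2)
    if letters[0] != "I":
        raise ValueError("this sketch only handles the I base ISA")
    return xlen, letters[0], list(letters[1:])


def plan_units(isa: str):
    """Return the register width and the list of units to generate."""
    xlen, base, extensions = parse_isa(isa)
    units = list(UNITS[base])
    for ext in extensions:
        # Unknown extensions get a placeholder so nothing is silently dropped.
        units += UNITS.get(ext, [f"unit_for_{ext}"])
    return xlen, units


xlen, units = plan_units("rv32imc")
print(xlen, units)
```

Each entry in the resulting list becomes its own generate-and-verify task, which is exactly the shape of problem an agent handles well.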

RTL Generation and Verification Automation in AI-Driven Design

RTL generation sits at the heart of the whole process. However, generating correct hardware description code is significantly harder than generating software: a single bit-level error can render an entire processor non-functional, and there’s no runtime exception handler for bad silicon.

LLM-based RTL generation typically uses models trained or fine-tuned on hardware description languages. Research efforts like Chip-Chat and emerging frameworks from other labs show that GPT-4 class models can produce working Verilog modules. However, raw LLM output often contains subtle errors, the kind that look plausible but fail under edge-case conditions. That’s where the agent architecture becomes critical.

The agent doesn’t simply prompt a model once and call it done. Instead, it follows a tight iterative loop:

- Generate a Verilog module for a specific functional unit (ALU, decoder, register file)

- Run the module through a linting tool like Verilator to catch syntax errors

- Execute simulation testbenches to verify functional correctness

- Analyze failure logs and feed error context back to the generation model

- Regenerate or patch the code until all tests pass
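The loop above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions: `generate` stands in for an LLM call and `check` stands in for lint plus simulation, both passed in as plain callables. The names `refine_module`, `RefineResult`, `fake_generate`, and `fake_check` are hypothetical, and the two stubs exist only so the example runs without any external tools.

```python
from dataclasses import dataclass, field
from typing import Callable, List, Optional


@dataclass
class RefineResult:
    code: Optional[str]  # the candidate that passed, or None if none did
    attempts: int
    history: List[str] = field(default_factory=list)  # errors fed back so far


def refine_module(
    generate: Callable[[str, List[str]], str],
    check: Callable[[str], List[str]],
    spec: str,
    max_attempts: int = 5,
) -> RefineResult:
    """Generate, check, and feed errors back until the checks pass."""
    feedback: List[str] = []
    for attempt in range(1, max_attempts + 1):
        candidate = generate(spec, feedback)
        errors = check(candidate)
        if not errors:
            return RefineResult(candidate, attempt, feedback)
        feedback.extend(errors)  # the next generation sees what went wrong
    return RefineResult(None, max_attempts, feedback)


def fake_generate(spec: str, feedback: List[str]) -> str:
    # Stand-in for an LLM call: "fixes" the bug once it sees any feedback.
    op = "+" if feedback else "-"
    return (
        "module adder(input [31:0] a, b, output [31:0] y); "
        f"assign y = a {op} b; endmodule"
    )


def fake_check(code: str) -> List[str]:
    # Stand-in for lint + simulation: returns a list of error messages.
    return [] if "a + b" in code else ["test_add failed: expected a + b"]


result = refine_module(fake_generate, fake_check, spec="32-bit adder")
print(result.attempts, result.code is not None)  # prints: 2 True
```

The `max_attempts` cap matters more than it looks. Without it, a model that keeps producing the same wrong answer will happily burn tokens forever.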

That closed-loop architecture is what separates a true AI agent from a simple code generator. The agent maintains state, tracks progress, and decides which modules need attention. It’s the difference between a tool and a collaborator.

Verification automation is arguably even more important than generation. Verification is often estimated to consume 60–70% of total chip design effort. That’s not a typo: most of the work isn’t building the thing, it’s proving the thing works. AI agents speed this up by:

- Auto-generating testbenches from specification constraints

- Using formal verification tools to prove correctness mathematically

- Running constrained random tests and analyzing coverage reports

- Identifying corner cases that human engineers might miss
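As a rough illustration of constrained random testing with corner-case bias, the sketch below compares a design under test against a Python reference model for a handful of RV32I ALU operations. In a real flow the design would be the simulated RTL; here `buggy_alu` is a hypothetical faulty stand-in so the example runs on its own. The 50% bias toward corner values is an arbitrary choice on my part.

```python
import random

MASK = 0xFFFFFFFF
CORNERS = [0, 1, 0x7FFFFFFF, 0x80000000, 0xFFFFFFFF]


def to_signed(x: int) -> int:
    """Interpret a 32-bit pattern as a two's-complement value."""
    return x - (1 << 32) if x & 0x80000000 else x


def ref_alu(op: str, a: int, b: int) -> int:
    """Reference model for a few RV32I ALU operations on 32-bit values."""
    if op == "ADD":
        return (a + b) & MASK
    if op == "SUB":
        return (a - b) & MASK
    if op == "SLT":
        return int(to_signed(a) < to_signed(b))
    if op == "SLTU":
        return int(a < b)
    if op == "SRA":
        return (to_signed(a) >> (b & 31)) & MASK  # shift amount is b[4:0]
    raise ValueError(op)


def random_operand(rng: random.Random) -> int:
    # Constraint: bias half of the draws toward known corner values.
    return rng.choice(CORNERS) if rng.random() < 0.5 else rng.getrandbits(32)


def run_random_tests(dut, ops, n, seed=0):
    """Run n random tests; return per-op test counts and failing inputs."""
    rng = random.Random(seed)
    coverage = {op: 0 for op in ops}
    failures = []
    for _ in range(n):
        op = rng.choice(ops)
        a, b = random_operand(rng), random_operand(rng)
        coverage[op] += 1
        if dut(op, a, b) != ref_alu(op, a, b):
            failures.append((op, a, b))
    return coverage, failures


def buggy_alu(op: str, a: int, b: int) -> int:
    # Hypothetical faulty design: treats SLT as an unsigned comparison.
    return int(a < b) if op == "SLT" else ref_alu(op, a, b)


OPS = ["ADD", "SUB", "SLT", "SLTU", "SRA"]
coverage, failures = run_random_tests(buggy_alu, OPS, n=2000)
print(coverage, len(failures))
```

The signed-versus-unsigned mix-up only shows up when the operands have different sign bits, which is why biasing toward values like `0x80000000` pays off.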

Additionally, some research teams combine LLM-based agents with symbolic reasoning engines. The LLM handles natural language spec interpretation, while the symbolic engine handles mathematical proof obligations. Together, they achieve verification coverage that neither approach manages alone — and I’d argue this hybrid is where the real breakthroughs are happening right now.

LLM-Based vs. Symbolic Reasoning: Comparing AI Approaches

Not all AI agents work the same way. The underlying method shapes every output, so it matters enormously when an AI agent designs a RISC-V CPU core from scratch. Two main approaches have emerged, each with real strengths and real limitations.

| Feature | LLM-Based Approach | Symbolic Reasoning Approach |
|---|---|---|
| Input format | Natural language specs, code examples | Formal specifications, constraint sets |
| Strengths | Flexible, handles ambiguity well | Mathematically precise, provably correct |
| Weaknesses | Can produce incorrect logic | Brittle with incomplete specs |
| RTL quality | Good first drafts, needs iteration | Correct by construction when specs are complete |
| Verification | Pattern-matching for bug detection | Formal proofs of correctness |
| Speed | Fast initial generation | Slower but more thorough |
| Scalability | Handles large designs with chunking | Struggles with state space explosion |
| Best use case | Rapid prototyping, exploration | Safety-critical designs, final verification |

Importantly, the most successful implementations use hybrid approaches. An LLM agent generates initial RTL code quickly, then a symbolic reasoning engine formally verifies critical properties. You get the creativity of language models alongside the rigor of formal methods — and in my experience following this research, neither half works nearly as well without the other.

Notable examples of AI in chip design include:

- NVIDIA’s ChipNeMo — A domain-adapted LLM that helps engineers with RTL generation and bug summarization. It doesn’t replace the full pipeline but speeds up key bottlenecks. NVIDIA’s research blog covers several applications, and the productivity numbers are genuinely impressive.

- Google’s reinforcement learning approach: Used for floorplanning and macro placement, with published results reporting that RL agents matched or outperformed human experts on specific placement tasks.

- Academic RISC-V projects: Multiple university teams have reported that combining transformer models with SAT solvers gives better results than either technique alone when an AI agent designs a RISC-V CPU core from scratch.

Conversely, purely symbolic approaches struggle with the creative side of architecture design. They excel at verification but can’t easily explore novel microarchitecture configurations. Meanwhile, pure LLM approaches generate creative solutions but offer no guarantees. So the hybrid model wins — consistently, across basically every serious implementation I’ve seen documented.
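To show the “generate, then prove” split in miniature, here is a sketch that checks a candidate adder against its specification for every possible input. Exhaustive enumeration at a small bit width is my stand-in for a real SAT or SMT solver, which a production flow would use instead. The names `ripple_carry_add`, `spec_add`, and `find_counterexample` are hypothetical.

```python
from itertools import product

WIDTH = 4  # small enough to enumerate every input pair


def spec_add(a: int, b: int) -> int:
    """Specification: addition modulo 2**WIDTH."""
    return (a + b) % (1 << WIDTH)


def ripple_carry_add(a: int, b: int) -> int:
    """Candidate design: a gate-level ripple-carry adder (XOR/AND/OR only)."""
    result, carry = 0, 0
    for i in range(WIDTH):
        x, y = (a >> i) & 1, (b >> i) & 1
        s = x ^ y ^ carry
        carry = (x & y) | (carry & (x ^ y))
        result |= s << i
    return result


def find_counterexample(candidate, spec):
    """Return the first input pair where candidate and spec disagree."""
    for a, b in product(range(1 << WIDTH), repeat=2):
        if candidate(a, b) != spec(a, b):
            return (a, b)
    return None  # equivalent on every input at this width


def carryless_add(a: int, b: int) -> int:
    # Plausible-looking but wrong candidate: forgets to propagate carries.
    return a ^ b


print(find_counterexample(ripple_carry_add, spec_add))  # prints: None
print(find_counterexample(carryless_add, spec_add))     # prints: (1, 1)
```

The counterexample is the useful part. Handing `(1, 1)` back to the generator is far more actionable than a bare “verification failed.”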

Case Studies: AI Agents in Real Chip Design Pipelines

Theory is one thing. Real-world results are another. Several concrete case studies show how an AI agent designs a RISC-V CPU core from scratch in practice — and these aren’t lab curiosities.

Case Study 1: The Chinese Academy of Sciences’ “Enlightenment” Project. Researchers used an automated AI design flow to produce a complete RISC-V processor. As I read the published work, the first core was learned from input-output examples rather than written by an LLM, and the team reported that the taped-out chip booted Linux. This was a meaningful milestone: not just “the code compiled,” but “the design ran real software.”

Case Study 2: Efabless and Open-Source Silicon. The Efabless platform lets anyone tape out chips using open-source tools. Several teams have submitted AI-assisted RISC-V designs through their chipIgnite program, and these designs go through real fabrication at GlobalFoundries or SkyWater Technology. The fact that AI-designed RISC-V cores survive actual manufacturing validates the approach in the most concrete way possible. You can’t argue with physical silicon.

Case Study 3: Industry adoption at scale. Major EDA vendors like Synopsys and Cadence now integrate AI assistants into their toolchains. Although these aren’t fully autonomous agents yet, they represent significant steps toward it. Synopsys’s DSO.ai optimizes chip design space exploration using reinforcement learning and has been used in production designs at Samsung and other chipmakers. Fair warning: don’t expect plug-and-play. The integration complexity is real.

Key takeaways from these case studies:

- AI agents produce functional silicon, not just academic papers

- Open-source RISC-V specifications enable rapid AI training and validation

- The gap between AI-generated and human-designed cores is narrowing fast

- Verification remains the hardest challenge, but agents are improving rapidly

Smaller startups are also building specialized agents for niche processor designs. Custom accelerators for machine learning, signal processing, and IoT devices are natural targets. The economics strongly favor AI-driven design when you need many specialized cores quickly, and that’s exactly where the market is heading.

Tools, Workflows, and Practical Steps for AI-Driven RISC-V Design

Here’s the thing: you don’t need a million-dollar EDA license to experiment with this. Open-source tools have genuinely opened up hardware design in a way that wasn’t true even five years ago.

Essential tools for AI-driven hardware design:

- Yosys — Open-source synthesis suite that converts Verilog to gate-level netlists

- Verilator — Fast Verilog simulator, perfect for running AI-generated testbenches

- OpenROAD — Complete open-source RTL-to-GDSII flow for physical design

- LangChain or AutoGen — Agent frameworks that coordinate LLM calls with tool use

- RISC-V GNU Toolchain — Compiles test programs to validate your CPU core

I’ve tested dozens of agent frameworks and this combination is the most practical starting point I’ve found — it’s not the only way, but it gets you to a working experiment fastest.

A practical workflow looks like this:

1. Define your target RISC-V configuration (RV32I is a great starting point — simple enough to be tractable, complete enough to be meaningful)

2. Set up an agent framework with access to a capable LLM (GPT-4, Claude, or open-source alternatives)

3. Give the agent tools: file I/O, Verilator invocation, synthesis commands

4. Prompt the agent with the RISC-V spec and let it generate module-by-module

5. Configure the agent to run tests after each module and iterate on failures

6. Once all tests pass, synthesize the design and check area, timing, and power

7. Optimize by having the agent explore microarchitecture alternatives
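For step 3, the “tools” you hand the agent can be thin wrappers around command lines. The sketch below builds a Verilator lint command and a Yosys synthesis command and runs them through `subprocess`. The wrapper names (`lint_cmd`, `synth_cmd`, `run_tool`, `TOOLS`) and the file name `alu.v` are hypothetical; how you register these with LangChain, AutoGen, or anything else depends on the framework, so I’ve left that part out.

```python
import shutil
import subprocess
from typing import Dict, List


def lint_cmd(verilog_file: str) -> List[str]:
    # Verilator's lint-only mode checks the source without building a simulator.
    return ["verilator", "--lint-only", "-Wall", verilog_file]


def synth_cmd(verilog_file: str, top: str) -> List[str]:
    # Yosys script: read the source, run generic synthesis, print cell stats.
    script = f"read_verilog {verilog_file}; synth -top {top}; stat"
    return ["yosys", "-p", script]


def run_tool(cmd: List[str]) -> Dict[str, object]:
    """Run a command and return a small result the agent can reason about."""
    if shutil.which(cmd[0]) is None:
        return {"ok": False, "log": f"{cmd[0]} not found on PATH"}
    proc = subprocess.run(cmd, capture_output=True, text=True)
    return {"ok": proc.returncode == 0, "log": proc.stdout + proc.stderr}


# A simple registry the agent loop can look tools up in by name.
TOOLS = {"lint": lint_cmd, "synth": synth_cmd}

print(TOOLS["lint"]("alu.v"))
print(TOOLS["synth"]("alu.v", "alu"))
```

Returning the combined log instead of raising on failure is deliberate: the error text is exactly what the agent needs for its next attempt.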

Tips for better results:

- Break the design into small modules. An agent handles a 200-line ALU better than a 5,000-line full core in one shot. This is the single most important practical lesson repeated across every serious implementation I’ve seen.

- Provide reference implementations. Giving the agent access to existing open-source RISC-V cores like PicoRV32 or SERV improves output quality significantly.

- Use formal verification early. Don’t wait until the end — have the agent prove properties as it generates each module. Retrofitting verification is painful whether a human or an AI wrote the code.

- Track coverage metrics. The agent should know which instructions and edge cases it hasn’t tested yet.
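On that last tip, instruction-level coverage is easy to track with a set. The sketch below lists the 40 instructions of the RV32I base ISA and reports which ones a test run hasn’t touched yet. The helper names `untested` and `coverage_pct` are hypothetical, and in practice the `tested` list would come from parsing simulation logs rather than being typed in by hand.

```python
# The 40 instructions of the RV32I base integer ISA.
RV32I = {
    "LUI", "AUIPC", "JAL", "JALR",
    "BEQ", "BNE", "BLT", "BGE", "BLTU", "BGEU",
    "LB", "LH", "LW", "LBU", "LHU",
    "SB", "SH", "SW",
    "ADDI", "SLTI", "SLTIU", "XORI", "ORI", "ANDI", "SLLI", "SRLI", "SRAI",
    "ADD", "SUB", "SLL", "SLT", "SLTU", "XOR", "SRL", "SRA", "OR", "AND",
    "FENCE", "ECALL", "EBREAK",
}


def untested(tested):
    """Instructions in RV32I that no test has exercised yet."""
    return sorted(RV32I - set(tested))


def coverage_pct(tested):
    """Percentage of RV32I instructions exercised at least once."""
    return 100.0 * len(RV32I & set(tested)) / len(RV32I)


tested = ["ADD", "ADDI", "LW", "SW", "BEQ"]
print(f"{coverage_pct(tested):.1f}% covered")  # prints: 12.5% covered
print(untested(tested)[:5])
```

Feeding the `untested` list back into the agent’s prompt gives it a concrete target for the next round of test generation.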

The barrier to entry for AI-driven chip design has dropped to a level that would’ve seemed impossible a decade ago. A skilled engineer with Python experience and basic hardware knowledge can build a working AI agent that designs a RISC-V CPU core from scratch using freely available tools. That’s not hype. It’s just where we are.

The Future of AI Agents in Semiconductor Engineering

The trajectory here is pretty clear. As AI agents grow more capable, their role in chip design will expand well beyond RISC-V cores. Specifically, we’re already seeing early work on AI-designed memory controllers, network-on-chip fabrics, and custom accelerators.

What’s coming next:

- Multi-agent collaboration — Separate agents for architecture exploration, RTL coding, verification, and physical design will work together, each specializing in its domain. Think less “one AI does everything” and more “a team of AIs with different expertise.”

- Continuous learning — Agents that learn from every design iteration will build up institutional knowledge and avoid past mistakes on their own. This is potentially the biggest long-term unlock.

- Specification-to-silicon automation — The ultimate goal: an agent that takes a natural language description and produces a manufacturable chip. We’re perhaps 3–5 years away from this for simple cores, though I’d bet on the aggressive end of that range.

- Democratized chip design: Once AI agents can reliably design a RISC-V CPU core from scratch, small companies and individuals gain access to custom silicon. That reshapes the economics of the entire semiconductor industry.

Nevertheless, real challenges remain. Verification at scale is still computationally expensive — a full formal verification run on a complex design can take days even on powerful hardware. Additionally, AI-generated designs sometimes contain subtle timing bugs that only appear under specific operating conditions. That’s exactly the kind of failure mode that’s hard to catch systematically.

Importantly, none of this means AI agents will replace hardware engineers. They’ll amplify them. An engineer who previously designed one core per year might supervise agents producing ten. The human role shifts from writing RTL to reviewing, guiding, and making high-level architecture decisions — which, honestly, is the more interesting part of the job anyway.

Conclusion

AI agents designing RISC-V CPU cores from scratch is no longer a future prediction; it’s a present fact. From RTL generation to verification automation to design optimization, AI agents are transforming every stage of the chip design pipeline, and the results are showing up in real fabricated silicon.

Here are your actionable next steps:

- Read the RISC-V specification and get comfortable with RV32I as a baseline target

- Set up an open-source toolchain with Yosys, Verilator, and an agent framework

- Start small — have an agent generate and verify a single ALU module before attempting a full core

- Study hybrid approaches that combine LLMs with formal verification for the best results

- Follow research from groups actively pushing the boundaries of AI agents that design RISC-V CPU cores from scratch

The tools exist today. The specifications are open. The results are proven. Whether you’re a hardware engineer looking to 10x your productivity or a software developer who’s always been curious about chip design, now is genuinely the time to start experimenting. This isn’t a “wait and see” moment — it’s a get-in-early moment.

FAQ

Can an AI agent really design a working RISC-V CPU from scratch?

Yes. Multiple research teams have shown AI agents designing RISC-V CPU cores from scratch that end up as working silicon. These designs pass standard compliance test suites, and some have been fabricated through programs like Efabless chipIgnite. However, human oversight is still important for production-quality designs. We’re not at fully autonomous production-ready output yet, but we’re closer than most people realize.

What tools do I need to build an AI agent for chip design?

You’ll need an LLM (GPT-4, Claude, or an open-source model), an agent framework like LangChain or AutoGen, and hardware tools. Specifically, Verilator for simulation, Yosys for synthesis, and the RISC-V GNU toolchain for test compilation are essential. All of these are freely available, which makes the barrier to experimentation genuinely low.

How does an LLM-based approach differ from symbolic reasoning in hardware design?

LLM-based approaches excel at generating initial RTL code from natural language specifications: they’re fast, flexible, and handle ambiguity reasonably well. Symbolic reasoning approaches, by contrast, use formal mathematical methods to guarantee correctness, though they struggle when specs are incomplete. The best results come from combining both, using LLMs for generation and symbolic engines for verification. Neither approach alone comes close to matching the hybrid.

Is AI-generated hardware reliable enough for production use?

Currently, AI-generated designs work well for prototyping and simple cores, and they pass standard test suites consistently. However, production chips for safety-critical applications still require extensive human review and formal verification. The reliability gap is closing rapidly, but “closing” and “closed” aren’t the same thing yet.

How long does it take for an AI agent to design a RISC-V core?

Simple RV32I cores can be generated in hours. More complex designs with pipelining, caches, and extensions may take days of agent iteration. Although this is dramatically faster than traditional design cycles measured in months, the verification phase often takes longer than initial generation — which, notably, is also true when humans do the work.

Will AI agents replace hardware engineers?

No. AI agents will augment hardware engineers, not replace them. Engineers will shift from writing RTL manually to supervising AI agents, making architecture decisions, and reviewing generated designs. Notably, the demand for engineers who understand both AI and hardware design is growing rapidly — so if anything, this is a career opportunity worth paying attention to. When an AI agent designs a RISC-V CPU core from scratch, a skilled engineer still guides the process and validates the output. That human judgment piece isn’t going away anytime soon.