If you’ve ever watched AI accurately describe a photo or answer a detailed question about an image, you’ve already seen visual-language models in action. These systems don’t just “see” images — they reason about them, talk about them, and connect what they see to what they know about language.

Visual-language models (VLMs) represent a genuine architectural shift in AI, not just a marketing rebrand. Instead of processing text or images in separate silos, they handle both simultaneously. Consequently, they can tackle tasks that neither vision-only nor language-only models could ever pull off alone. From medical imaging to autonomous driving, VLMs are fundamentally changing how machines make sense of the world.

The Architecture Behind VLMs

Understanding how VLMs work starts with their architecture — and honestly, once you see it, it clicks fast.

At the core, every visual language model has three essential components:

1. A vision encoder — Processes raw images into meaningful numerical representations called embeddings. Most modern VLMs use a Vision Transformer (ViT) here.

2. A language model — Handles text generation, comprehension, and reasoning. Typically a large language model like LLaMA or a GPT-variant.

3. A fusion mechanism — Bridges the gap between visual and textual information. Arguably the most critical piece of the whole stack.

The fusion mechanism deserves its own spotlight. Several distinct approaches exist:

- Early fusion combines image and text features at the input level, so the model processes everything together from the start. This gives the model maximum opportunity to learn joint representations, but it also means errors in either modality can compound early and affect everything downstream.

- Late fusion processes each modality separately first, then merges the outputs near the final layers. It’s simpler to implement and easier to debug, though it can miss subtle interactions between image regions and specific words.

- Cross-attention fusion lets the language model attend to visual features at multiple layers — and this is the approach powering many state-of-the-art systems right now.

A concrete way to think about the difference: imagine you’re describing a busy street scene. Early fusion is like handing someone the photo and a caption simultaneously from the start. Late fusion is like having two specialists — one who analyzes the photo, another who reads the caption — then comparing notes at the end. Cross-attention is closer to having both specialists work side by side, constantly checking in with each other as they go. That back-and-forth is expensive, but it produces richer results.

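Cross-attention is well documented in published systems. DeepMind's Flamingo, for example, inserts cross-attention layers into a frozen language model so that text tokens can attend to visual features. OpenAI has not published GPT-4V's architecture, so its fusion mechanism isn't publicly confirmed. Google's Gemini, by contrast, is described as processing interleaved image and text tokens natively. The architectural choice directly determines what tasks the model handles well, and where it quietly falls apart.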
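To make the cross-attention idea concrete, here is a minimal single-head sketch in NumPy. It is an illustration under stated assumptions, not how any particular production model is built: the sizes, the random weights, and the function names are all invented for the example. Text positions act as queries, and the visual tokens supply the keys and values.

```python
import numpy as np

def softmax(x, axis=-1):
    # Subtract the row max for numerical stability before exponentiating.
    shifted = x - x.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)

def cross_attention(text_states, visual_tokens, w_q, w_k, w_v):
    """Single-head cross-attention: text positions query the visual tokens."""
    queries = text_states @ w_q                           # (n_text, d_head)
    keys = visual_tokens @ w_k                            # (n_visual, d_head)
    values = visual_tokens @ w_v                          # (n_visual, d_head)
    scores = queries @ keys.T / np.sqrt(keys.shape[-1])   # (n_text, n_visual)
    weights = softmax(scores, axis=-1)                    # each row sums to 1
    return weights @ values, weights

# Illustrative sizes only: 5 text tokens, 196 visual tokens.
rng = np.random.default_rng(0)
n_text, n_visual, d_text, d_vis, d_head = 5, 196, 32, 48, 16
text_states = rng.normal(size=(n_text, d_text))
visual_tokens = rng.normal(size=(n_visual, d_vis))
w_q = rng.normal(size=(d_text, d_head)) * 0.1    # random stand-ins for learned weights
w_k = rng.normal(size=(d_vis, d_head)) * 0.1
w_v = rng.normal(size=(d_vis, d_head)) * 0.1

attended, weights = cross_attention(text_states, visual_tokens, w_q, w_k, w_v)
print(attended.shape, weights.shape)
```

Each row of `weights` shows how strongly one text token attends to every image patch. Repeating this step at several layers is what makes the approach both expressive and expensive.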

So how does the vision encoder actually work? It splits an image into small patches — typically 16×16 pixels — and converts each patch into a vector embedding. Those embeddings then pass through transformer layers, just like word tokens in a language model. The output is a sequence of visual tokens the fusion mechanism can work with.
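The patch step is easy to sketch in NumPy. The snippet below assumes a 224×224 RGB image and a 64-dimensional embedding, and it uses a random matrix as a stand-in for the learned projection; a real encoder learns that matrix and also adds position information before the transformer layers.

```python
import numpy as np

def patchify(image, patch_size=16):
    """Split an (H, W, C) image into flattened, non-overlapping patches."""
    height, width, channels = image.shape
    if height % patch_size or width % patch_size:
        raise ValueError("image sides must be divisible by patch_size")
    rows, cols = height // patch_size, width // patch_size
    patches = image.reshape(rows, patch_size, cols, patch_size, channels)
    patches = patches.transpose(0, 2, 1, 3, 4)    # (rows, cols, p, p, C)
    return patches.reshape(rows * cols, patch_size * patch_size * channels)

rng = np.random.default_rng(0)
image = rng.random((224, 224, 3))                 # stand-in for a real photo
patches = patchify(image)                         # one row per 16x16 patch
projection = rng.normal(size=(patches.shape[1], 64)) * 0.02   # stand-in for learned weights
patch_embeddings = patches @ projection           # one 64-dim vector per patch
print(patches.shape, patch_embeddings.shape)
```

A 224×224 image yields 14 × 14 = 196 patches, so the transformer sees a sequence of 196 visual tokens.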

One practical implication worth noting: because the image is divided into fixed-size patches, very small details — a tiny label on a bottle, fine print on a document — can fall awkwardly across patch boundaries and get partially lost. This is one reason VLMs sometimes miss small text in images even when the overall scene description is accurate. Higher-resolution patch strategies are an active area of improvement.
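A quick calculation shows why higher resolution is not a free fix. Assuming square inputs and 16-pixel patches (both illustrative choices), the number of visual tokens grows with the square of the image side:

```python
def visual_token_count(height, width, patch_size=16):
    """Number of non-overlapping patches for an image of the given size."""
    return (height // patch_size) * (width // patch_size)

for side in (224, 448, 896):
    tokens = visual_token_count(side, side)
    print(f"{side}x{side} px -> {tokens} visual tokens")
```

Doubling the side length quadruples the token count (196, then 784, then 3136), so resolving fine print costs noticeably more compute and memory per image.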

I’ve looked closely at a lot of these architectures over the years, and the patch-based approach still surprises people when they first hear it. It feels almost too simple. But it works remarkably well.

Training Methods for Visual-Language Models

Training a VLM isn’t a single-step process; it involves multiple carefully designed phases. This is where visual-language models get genuinely interesting, technically speaking.

Phase 1: Pre-training the vision encoder. Models like CLIP, developed by OpenAI, learn to align images with text descriptions. CLIP was trained on 400 million image-text pairs collected from the internet, building a shared embedding space where related images and text cluster together. That number, 400 million pairs, should give you a sense of the data appetite involved.

Phase 2: Pre-training the language model. The LLM backbone trains on massive text corpora, building strong language understanding and generation capabilities before it ever sees an image.

Phase 3: Multimodal alignment. This is the crucial step — where the model actually learns to connect visual representations with language understanding. Common techniques include:

- Contrastive learning — The model learns that matching image-text pairs should have similar embeddings, while non-matching pairs should sit far apart in the embedding space. A useful analogy: think of it like training someone to recognize that a photo of a golden retriever and the phrase “fluffy dog” belong together, while “fluffy dog” and a photo of a fire truck clearly don’t.

- Image-text matching — The model predicts whether a given image and text actually correspond to each other.

- Masked language modeling with visual context — The model predicts missing words using both surrounding text and the image simultaneously. For example, given an image of a snowy mountain and the sentence “The hikers reached the _____ of the peak,” the model uses both the visual and textual context to predict “summit.”
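The contrastive objective from the first bullet can be sketched in a few lines of NumPy. This is a simplified, CLIP-style symmetric loss over one small batch; the embeddings are random toy data, and the temperature value is an illustrative choice rather than a recommendation.

```python
import numpy as np

def log_softmax(x, axis):
    shifted = x - x.max(axis=axis, keepdims=True)
    return shifted - np.log(np.exp(shifted).sum(axis=axis, keepdims=True))

def contrastive_loss(image_emb, text_emb, temperature=0.07):
    """Symmetric cross-entropy over cosine similarities.

    Row i of image_emb and row i of text_emb are treated as a matching pair.
    """
    image_emb = image_emb / np.linalg.norm(image_emb, axis=1, keepdims=True)
    text_emb = text_emb / np.linalg.norm(text_emb, axis=1, keepdims=True)
    logits = image_emb @ text_emb.T / temperature     # (batch, batch)
    diag = np.arange(len(logits))
    image_to_text = -log_softmax(logits, axis=1)[diag, diag].mean()
    text_to_image = -log_softmax(logits, axis=0)[diag, diag].mean()
    return (image_to_text + text_to_image) / 2

rng = np.random.default_rng(0)
images = rng.normal(size=(4, 8))
aligned_text = images + 0.01 * rng.normal(size=(4, 8))   # captions close to their images
shuffled_text = aligned_text[[1, 2, 3, 0]]               # every caption on the wrong image

aligned_loss = contrastive_loss(images, aligned_text)
shuffled_loss = contrastive_loss(images, shuffled_text)
print(aligned_loss, shuffled_loss)
```

The loss is low when each image sits closest to its own caption and high when the pairs are scrambled. Training pushes the encoders toward the first situation.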

Phase 4: Instruction tuning. After alignment, models get fine-tuned on specific tasks using curated datasets with human-written instructions. Furthermore, reinforcement learning from human feedback (RLHF) often improves output quality significantly at this stage. In practice, this phase is where a model transitions from being technically capable to being actually useful — it’s the difference between a model that can describe an image and one that follows your specific formatting preferences while doing so.

Additionally, researchers have developed efficient training approaches that are genuinely worth knowing about. LLaVA (Large Language and Vision Assistant) and MiniGPT-4 showed that you can build a competitive VLM by connecting a frozen vision encoder to a pre-trained language model through a small projection layer. MiniGPT-4 trains only that projection; LLaVA trains the projection first, then fine-tunes the language model on instruction data while the vision encoder stays frozen. The real kicker? This dramatically reduces computational costs compared with full end-to-end training.

Fair warning, though: that efficiency comes with tradeoffs in flexibility. You’re working with whatever capabilities the frozen backbones already have. If your vision encoder was never exposed to medical scans during pre-training, a frozen-backbone approach won’t magically fix that gap — you’d need to consider adapter-based fine-tuning or a domain-specific encoder instead.
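Some rough arithmetic shows how small the trainable piece can be. The sizes below are assumptions chosen for illustration (a 1024-wide vision encoder output, a 4096-wide language model with about 7 billion parameters, and a single linear projection); actual models differ in the details.

```python
def linear_params(in_features, out_features, bias=True):
    """Parameter count of a single dense layer."""
    return in_features * out_features + (out_features if bias else 0)

VISION_DIM = 1024             # assumed width of the vision encoder's output tokens
LLM_DIM = 4096                # assumed hidden size of the language model
LLM_PARAMS = 7_000_000_000    # assumed size of a "7B" language model

projector = linear_params(VISION_DIM, LLM_DIM)
ratio = projector / LLM_PARAMS
print(f"projection layer: {projector:,} parameters")
print(f"relative to the language model: {ratio:.4%}")
```

Under these assumptions the projection has about 4.2 million parameters, well under a tenth of a percent of the language model it feeds. That is why training it alone is so cheap.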

| Training Approach | Data Required | Compute Cost | Typical Use Case |
|---|---|---|---|
| Full end-to-end training | Billions of pairs | Very high | Foundation models (Gemini, GPT-4V) |
| Frozen backbone + projection | Millions of pairs | Moderate | Research models (LLaVA, MiniGPT-4) |
| Adapter-based fine-tuning | Thousands of pairs | Low | Domain-specific applications |
| Zero-shot transfer | None (uses pre-trained) | Minimal | Quick prototyping |

Real-World Applications of VLMs

VLMs aren’t just research curiosities anymore. They’re solving real problems across industries — and some of the use cases are more mature than people realize.

Image captioning and description. VLMs generate detailed, accurate descriptions of images, powering accessibility features for visually impaired users. Screen readers integrated with VLMs provide far richer descriptions than older rule-based systems ever could. I’ve seen this firsthand in demos, and it’s genuinely moving how much more context gets conveyed. Where an older system might output “image: two people outdoors,” a VLM might say “two people sitting at a picnic table in a park, laughing, with a dog lying at their feet” — a description that actually tells you something.

Visual question answering (VQA). You show the model an image, ask a question — “What color is the car?” or “How many people are in this photo?” — and it reasons about the visual content and responds in natural language. Importantly, modern VLMs also handle complex reasoning questions like “Is this room safe for a toddler?” That’s a big leap from simple object detection. A practical tip here: specificity in your question usually gets you a better answer. Asking “Is there anything on the floor that a child could trip on?” tends to produce more actionable output than a vague “Is this safe?”

Document understanding. This is a massive enterprise use case, and honestly an underrated one. VLMs read invoices, receipts, contracts, and forms, then extract structured data from unstructured visual documents. A logistics company, for instance, might use a VLM to automatically parse hundreds of shipping manifests per day, pulling out vendor names, quantities, and delivery addresses without any manual data entry. Companies like Google Cloud offer document AI services built directly on these capabilities.
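In a pipeline like this, the model's reply still needs checking before it reaches a database. The sketch below assumes you have prompted the VLM to answer in JSON; the field names, the `ManifestRecord` class, and the sample reply are all hypothetical and only illustrate the validation step.

```python
import json
from dataclasses import dataclass

REQUIRED_FIELDS = {"vendor_name", "quantity", "delivery_address"}  # hypothetical schema

@dataclass
class ManifestRecord:
    vendor_name: str
    quantity: int
    delivery_address: str

def parse_manifest(raw_model_output):
    """Validate a model's JSON reply and convert it into a typed record."""
    data = json.loads(raw_model_output)       # raises ValueError on malformed JSON
    if not isinstance(data, dict):
        raise ValueError("expected a JSON object")
    missing = REQUIRED_FIELDS - set(data)
    if missing:
        raise ValueError(f"missing fields: {sorted(missing)}")
    quantity = int(data["quantity"])          # raises ValueError on non-numeric text
    if quantity < 0:
        raise ValueError("quantity must be non-negative")
    return ManifestRecord(
        vendor_name=str(data["vendor_name"]).strip(),
        quantity=quantity,
        delivery_address=str(data["delivery_address"]).strip(),
    )

# A made-up reply, standing in for what a VLM might return for one manifest image.
sample_reply = '{"vendor_name": " Acme Freight ", "quantity": "12", "delivery_address": "1 Example Road"}'
record = parse_manifest(sample_reply)
print(record)
```

Rejecting incomplete or malformed replies up front means a misread document surfaces as an error to review, not as a silently wrong row.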

Medical imaging analysis. VLMs are being tested for radiology report generation — a doctor uploads an X-ray and the model produces a preliminary report highlighting potential findings. Nevertheless, these systems support rather than replace medical professionals. That distinction matters enormously right now. The practical value is in reducing the time a radiologist spends on routine cases, freeing attention for the complex ones.

Autonomous driving. Self-driving systems use VLMs to understand road scenes, describe what’s happening, predict risks, and explain decisions in natural language. The explainability angle is specifically what makes VLMs useful over traditional vision models. When a system can say “slowing down because a cyclist is merging from the right shoulder,” that’s far more useful for debugging and regulatory review than a black-box decision.

Content moderation. Social media platforms use VLMs to detect harmful visual content. Moreover, the model understands context — not just what objects appear in an image, but their arrangement and implied meaning. That nuance is something earlier systems completely lacked.

Here’s a practical code example showing how to use a VLM for image captioning with the popular Hugging Face Transformers library:

from transformers import BlipProcessor, BlipForConditionalGeneration

from PIL import Image

import requests

processor = BlipProcessor.from_pretrained("Salesforce/blip-image-captioning-large")

model = BlipForConditionalGeneration.from_pretrained("Salesforce/blip-image-captioning-large")

# Load an image from URL

url = "https://example.com/sample-image.jpg"

image = Image.open(requests.get(url, stream=True).raw)

# Generate a caption

inputs = processor(image, return_tensors="pt")

output = model.generate(**inputs, max_new_tokens=50)

caption = processor.decode(output[0], skip_special_tokens=True)

print(f"Generated caption: {caption}")

And here’s an example of visual question answering using the same framework:

from transformers import BlipProcessor, BlipForQuestionAnswering

from PIL import Image

# Load VQA model

processor = BlipProcessor.from_pretrained("Salesforce/blip-vqa-base")

model = BlipForQuestionAnswering.from_pretrained("Salesforce/blip-vqa-base")

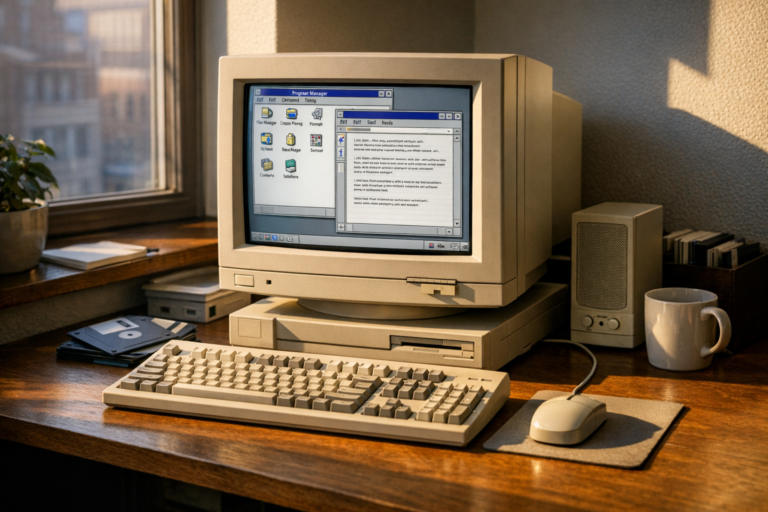

# Process image and question

image = Image.open("office_photo.jpg")

question = "How many monitors are on the desk?"

inputs = processor(image, question, return_tensors="pt")

output = model.generate(**inputs)

answer = processor.decode(output[0], skip_special_tokens=True)

print(f"Answer: {answer}")

These examples use Hugging Face’s Transformers library, which gives you pre-trained VLMs ready for immediate use. It’s a no-brainer starting point if you want to get your hands dirty quickly. One practical tip: run these examples on a machine with at least 8GB of GPU VRAM for reasonable inference speed. CPU-only inference works but can be painfully slow for interactive use.

Comparing Leading Visual Language Models

The VLM field is moving fast — almost uncomfortably fast if you’re trying to make a long-term architecture decision. Therefore, understanding the real differences between major options matters more than ever.

| Model | Developer | Open Source | Key Strength | Modalities |
|---|---|---|---|---|
| GPT-4V / GPT-4o | OpenAI | No | Best overall reasoning | Image, text, audio |
| Gemini 1.5 Pro | Google | No | Long context, native multimodal | Image, text, video, audio |
| LLaVA 1.6 | University of Wisconsin | Yes | Strong open-source option | Image, text |
| Claude 3.5 Sonnet | Anthropic | No | Document understanding | Image, text |
| Qwen-VL | Alibaba | Yes | Multilingual support | Image, text |
| PaLI-X | Google Research | No | Fine-grained visual understanding | Image, text |

Proprietary models like GPT-4V and Gemini generally outperform open-source alternatives on standard benchmarks. However, the gap is narrowing fast — and I mean fast. Open-source models offer real, tangible advantages in customization, data privacy, and ongoing cost.

If you need on-premises deployment, LLaVA or Qwen-VL are solid choices worth serious consideration. Conversely, if raw performance matters most, GPT-4o led most published benchmarks at the time of writing. Meanwhile, Claude 3.5 Sonnet consistently excels at document-heavy workflows, and that’s where I’d reach for it first.

A useful decision framework: start by asking whether your data can leave your infrastructure. If the answer is no — common in healthcare, finance, and legal contexts — open-source models with local deployment are your only realistic path. If data residency isn’t a constraint, run a quick benchmark on a representative sample of your actual use case rather than relying solely on published leaderboard scores. Real-world performance on your specific data frequently diverges from general benchmarks in ways that matter.
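A benchmark like that does not need much machinery. Here is a minimal sketch using exact-match accuracy; the sample questions, file names, and the two stub predictors are hypothetical placeholders for your own labelled data and real model calls.

```python
def exact_match_accuracy(predict, samples):
    """Share of samples where the normalized prediction equals the expected answer."""
    hits = sum(
        predict(image_path, question).strip().lower() == expected.strip().lower()
        for image_path, question, expected in samples
    )
    return hits / len(samples)

# Hypothetical labelled sample drawn from your own data.
samples = [
    ("invoice_001.png", "What is the invoice total?", "412.50"),
    ("invoice_002.png", "What is the invoice total?", "98.00"),
    ("invoice_003.png", "Who is the vendor?", "Acme Freight"),
]

# Stub predictors standing in for real model calls (a local model or a hosted API).
def model_a(image_path, question):
    known = {"invoice_001.png": "412.50", "invoice_002.png": "98.00"}
    return known.get(image_path, "unknown")

def model_b(image_path, question):
    return "acme freight" if "vendor" in question else "0.00"

scores = {
    name: exact_match_accuracy(predict, samples)
    for name, predict in [("model_a", model_a), ("model_b", model_b)]
}
print(scores)
```

Exact match is a deliberately strict metric. For free-form answers you would likely swap in a softer comparison, but the loop itself stays the same.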

Here’s the thing: taken together, these models reveal a clear trend. Each new generation handles more modalities with better accuracy, and models are getting smaller yet more capable at the same time. Consequently, deploying VLMs in production is becoming increasingly practical, even on modest infrastructure.

Challenges and Future Directions

Despite the impressive progress, visual-language models still face real, significant challenges. And if you’re building with this technology, you need to go in with clear eyes.

Hallucination remains a core problem. VLMs sometimes describe objects that simply aren’t in an image, or confidently state incorrect spatial relationships. A model might claim a person is wearing a hat when they aren’t, or describe a document as containing a signature when the field is blank. This is particularly dangerous in medical or safety-critical applications. I’ve seen this happen in demos with leading models, not just obscure ones. A practical mitigation: where possible, ask the model to quote or locate specific evidence for its claims rather than just summarize — it doesn’t eliminate hallucination, but it makes errors easier to catch during review.
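One way to act on that mitigation is to check the quoted evidence mechanically. The sketch below assumes you have separate OCR text for the document and that the model returns each claim with a supporting quote; the data structure and both example claims are hypothetical.

```python
def unsupported_claims(claims, source_text):
    """Return statements whose quoted evidence does not appear in the source text."""
    normalized_source = " ".join(source_text.lower().split())
    flagged = []
    for claim in claims:
        quote = " ".join(claim["evidence"].lower().split())
        if not quote or quote not in normalized_source:
            flagged.append(claim["statement"])
    return flagged

# Hypothetical OCR text of a form with a blank signature field.
ocr_text = "Applicant: J. Rivera\nDate: 2024-03-01\nSignature:"

# Hypothetical model reply: each statement comes with the text it claims to rely on.
claims = [
    {"statement": "The applicant is J. Rivera.", "evidence": "Applicant: J. Rivera"},
    {"statement": "The form is signed by J. Rivera.", "evidence": "Signature: J. Rivera"},
]

flagged = unsupported_claims(claims, ocr_text)
print(flagged)
```

Only the signature claim is flagged, because its quote never appears in the OCR text. This is a coarse string check and will not catch every error, but it routes the riskiest claims to a human reviewer.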

Bias in training data propagates. VLMs inherit biases from their training datasets, and images from certain cultures or demographics are frequently underrepresented. A model trained predominantly on Western internet imagery may describe traditional clothing from other cultures inaccurately, or default to stereotyped associations when describing people in professional settings. Although researchers are actively working on mitigation strategies, bias remains a persistent and genuinely difficult concern.

Computational costs are substantial. Training a state-of-the-art VLM requires thousands of GPUs running for weeks. Even inference can get expensive, though smaller distilled models help — at the cost of some capability. That tradeoff is worth being explicit about. A 7-billion-parameter model might cost a fraction of a cent per query at scale, while a frontier model via API can run to several cents per image — a difference that adds up fast in high-volume production environments.
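To see how quickly that difference adds up, here is the arithmetic with made-up numbers. The per-image prices and the daily volume are assumptions picked to match "a fraction of a cent" and "several cents", not quotes from any provider.

```python
def monthly_cost(cost_per_image_usd, images_per_day, days=30):
    """Total spend over a month at a constant daily volume."""
    return cost_per_image_usd * images_per_day * days

IMAGES_PER_DAY = 100_000         # assumed volume
SELF_HOSTED_PER_IMAGE = 0.002    # assumed: a fraction of a cent per image
API_PER_IMAGE = 0.03             # assumed: several cents per image

self_hosted = monthly_cost(SELF_HOSTED_PER_IMAGE, IMAGES_PER_DAY)
api = monthly_cost(API_PER_IMAGE, IMAGES_PER_DAY)
print(f"self-hosted: ${self_hosted:,.0f} per month")
print(f"hosted API:  ${api:,.0f} per month")
```

Under these assumptions the bill is roughly $6,000 versus $90,000 per month. The self-hosted figure leaves out engineering and hardware overhead, so treat the comparison as a starting point, not a verdict.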

Evaluation is tricky. Benchmarks like VQAv2 and GQA test specific skills, but they don’t capture the full range of visual understanding. Measuring whether a model truly “understands” an image — versus pattern-matching really well — remains an open research problem. It’s a harder question than it sounds.
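It helps to see what such a benchmark actually measures. The commonly quoted simplified form of the VQA accuracy metric gives full credit once at least three human annotators gave the same answer as the model. The sketch below implements that simplified form with made-up annotations; the official evaluation script applies extra answer normalization and averaging that are omitted here.

```python
def vqa_accuracy(prediction, human_answers):
    """Simplified VQA accuracy: full credit once three annotators agree with the model."""
    normalized = prediction.strip().lower()
    matches = sum(answer.strip().lower() == normalized for answer in human_answers)
    return min(matches / 3, 1.0)

# Made-up annotations from ten people for "What color is the car?"
human_answers = ["red"] * 6 + ["dark red"] * 2 + ["maroon"] * 2

print(vqa_accuracy("red", human_answers))
print(vqa_accuracy("dark red", human_answers))
print(vqa_accuracy("blue", human_answers))
```

The score rewards agreement with annotators on short answers. It says nothing about whether the model could explain or localize its answer, which is part of why "understanding" is so hard to measure.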

Looking ahead, several exciting directions are emerging:

- Video understanding — Moving beyond static images to comprehend temporal sequences and actions

- 3D scene understanding — Reasoning about spatial depth and object relationships in three dimensions

- Embodied AI — Connecting VLMs to robotic systems that can act on visual understanding

- Efficient architectures — Building powerful VLMs that run on edge devices and smartphones

- Better grounding — Ensuring models can point to exactly which part of an image supports their answer

Moreover, the integration of visual-language models with retrieval-augmented generation (RAG) is a particularly promising direction. Imagine a VLM that can pull relevant documents while simultaneously analyzing an image — a radiologist’s assistant that cross-references a chest X-ray against a patient’s prior imaging history and relevant clinical guidelines at the same time. That combination could dramatically improve accuracy in specialized domains like legal or medical work. This surprised me when I first started exploring it — the accuracy gains in domain-specific tests are striking.
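The retrieval half of that idea can be sketched with cosine similarity. Everything here is a toy stand-in: the three documents, the 3-dimensional embeddings, and the prompt wording are invented, and a real system would get its embeddings from an embedding model and pass the image to the VLM alongside the prompt.

```python
import numpy as np

def top_k(query_embedding, doc_embeddings, k=2):
    """Indices of the k documents with the highest cosine similarity to the query."""
    query = query_embedding / np.linalg.norm(query_embedding)
    docs = doc_embeddings / np.linalg.norm(doc_embeddings, axis=1, keepdims=True)
    similarities = docs @ query
    return np.argsort(-similarities)[:k].tolist()

documents = [
    "Prior chest X-ray report from last year",
    "Clinical guideline on follow-up imaging",
    "Billing policy for outpatient visits",
]
# Toy embeddings; a real system would compute these with an embedding model.
doc_embeddings = np.array([[0.9, 0.1, 0.0], [0.7, 0.6, 0.1], [0.0, 0.1, 0.9]])
image_embedding = np.array([1.0, 0.2, 0.0])    # stand-in for the embedded X-ray

indices = top_k(image_embedding, doc_embeddings)
retrieved = [documents[i] for i in indices]
prompt = (
    "Context:\n"
    + "\n".join(f"- {doc}" for doc in retrieved)
    + "\n\nDescribe the findings in the attached image."
)
print(prompt)
```

The two clinically relevant documents are retrieved and the billing policy is left out, so the model reasons over the image with only the most relevant context attached.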

Conclusion

This guide on visual-language models has covered architecture, training methods, real-world applications, and the challenges you’ll actually run into. These models represent one of the most exciting frontiers in AI right now — and notably, they’re no longer just a research story. They’re shipping in products.

Here are your actionable next steps:

- Start experimenting with open-source VLMs like LLaVA using the Hugging Face library

- Try the code examples above to build image captioning and VQA prototypes

- Evaluate your use case against the model comparison table to pick the right tool

- Stay updated on new releases: multimodal AI moves fast, and the open-source options are catching up quickly

- Consider fine-tuning a pre-trained model on your domain-specific data for best results

Bottom line: whether you’re building accessibility tools, document processing pipelines, or creative applications, understanding visual-language models gives you a genuinely strong foundation. The technology is mature enough for production use — and it’s only getting better from here.

FAQ

What exactly are visual-language models?

Visual-language models are AI systems that process both images and text simultaneously. They combine a vision encoder with a language model through a fusion mechanism, which lets them perform tasks like describing images, answering visual questions, and understanding documents. Think of them as AI that can both “see” and “talk” about what it sees — and importantly, reason about the connection between the two.

How do VLMs differ from standard image classifiers?

Traditional image classifiers assign predefined labels to images — they might output “cat” or “dog” and that’s it. Visual-language models, however, generate free-form text responses. They can describe scenes in detail, answer open-ended questions, and reason about image content in ways that feel genuinely flexible. Additionally, VLMs understand the relationship between visual and textual information — something classifiers fundamentally cannot do.

Can I run visual-language models on my own hardware?

Yes, although it depends on the model size. Smaller VLMs like LLaVA-7B can run on a consumer GPU with 16GB of VRAM. Larger models need more powerful hardware. Quantized versions, which store the weights at reduced precision, make local deployment considerably more feasible. Ollama offers an easy way to run some multimodal models locally, and it’s worth a shot if you want to experiment without cloud costs.
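A back-of-the-envelope calculation shows why quantization matters. The sketch below counts memory for the weights alone, assuming a model with 7 billion parameters; activations, the attention cache, and the vision encoder all add to the total.

```python
def weight_memory_gb(num_parameters, bits_per_parameter):
    """Memory for the weights alone, in gigabytes (1 GB = 10**9 bytes)."""
    return num_parameters * bits_per_parameter / 8 / 1e9

PARAMS_7B = 7_000_000_000    # assumed parameter count for a "7B" model

for label, bits in [("16-bit", 16), ("8-bit", 8), ("4-bit", 4)]:
    print(f"{label}: {weight_memory_gb(PARAMS_7B, bits):.1f} GB")
```

At 16-bit precision the weights alone take 14 GB, which is why a 16GB card is a tight fit. At 4-bit they drop to 3.5 GB, leaving room on much smaller GPUs.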

What training data do visual-language models need?

VLMs require large datasets of paired images and text. Common sources include image-caption datasets like LAION-5B and curated instruction-following datasets. For fine-tuning on specific domains, you might need only a few thousand high-quality image-text pairs. At that stage, data quality matters more than quantity, a lesson that’s come up repeatedly in practice.