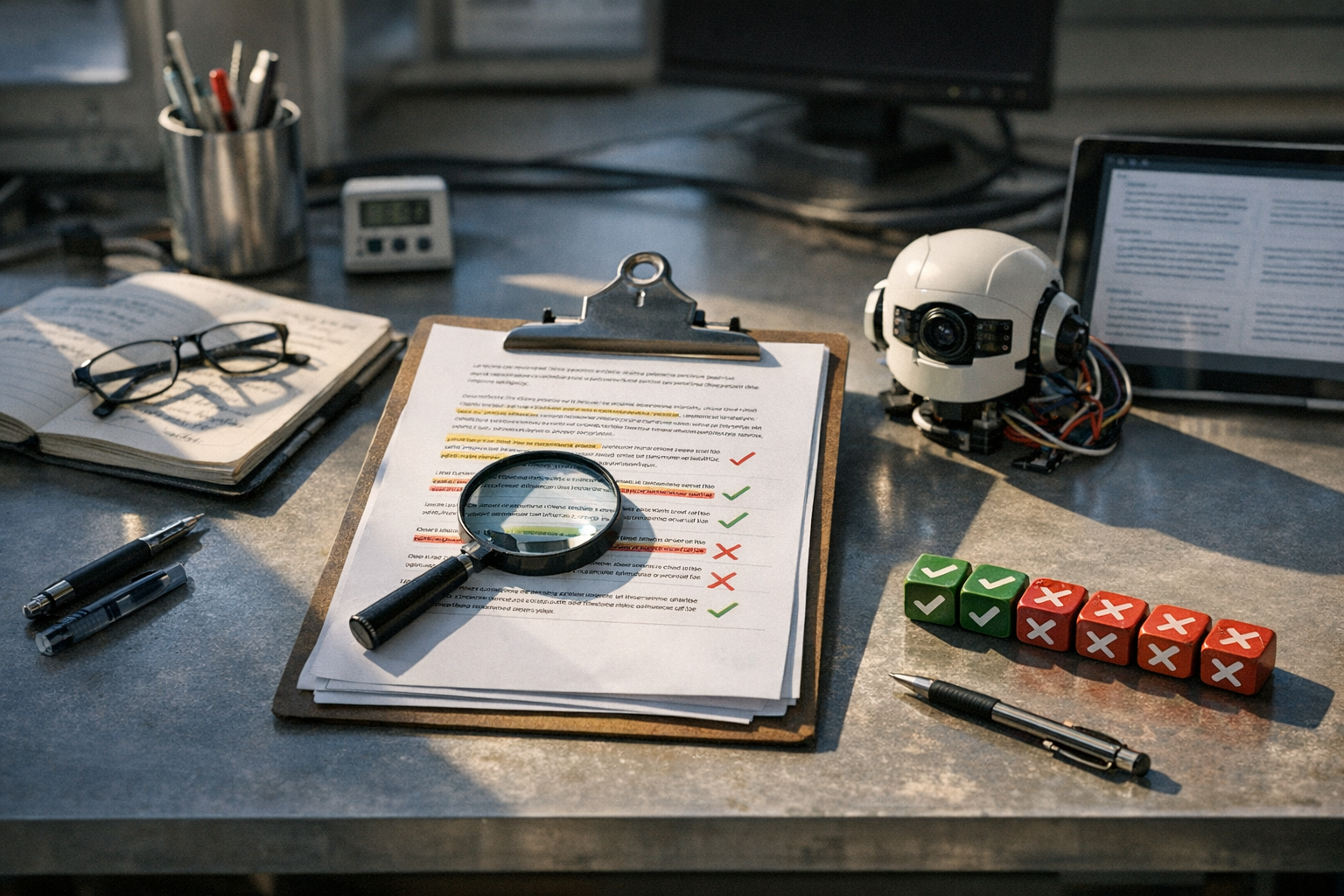

If you’re producing AI-generated content at scale, you need a real way to measure what’s actually working. An AI content scale framework, built on a defined set of evaluation metrics, gives you exactly that: a structured method to score, compare, and improve machine-written text. Without one, you’re flying blind and hoping for the best.

The explosion of tools like ChatGPT, Claude, and Gemini has made content creation faster than ever. However, speed without quality control is just a faster way to produce mediocre output. A proper evaluation framework turns subjective “I think this feels okay” opinions into repeatable, data-driven assessments. It’s the difference between guessing and actually knowing.

Why You Need an AI Content Scale Framework

Most teams judge AI content by gut feeling. That approach doesn’t scale.

When you’re producing dozens or hundreds of pieces weekly, you need standardized evaluation metrics to stay consistent. I’ve watched teams skip this step and spend months wondering why their AI content underperforms. The answer is almost always that nobody defined “good” in the first place.

The core problem is simple. Different people define “quality” differently. Your editor prioritizes readability. Your SEO lead cares about keyword density and structure. Your brand manager is laser-focused on voice consistency. A framework aligns everyone around shared criteria instead of letting those competing priorities create chaos.

Specifically, a well-designed evaluation framework helps you:

- Compare AI models objectively — Is Claude actually better than GPT-4o for your use case, or does it just feel that way?

- Track quality over time — Are your prompts improving output or quietly degrading it?

- Identify weak spots — Maybe your AI nails structure but consistently fumbles transitions

- Justify tool investments — Show stakeholders measurable ROI instead of vibes

- Maintain brand standards — Ensure every piece clears a minimum quality threshold

Furthermore, frameworks bridge the gap between prompt engineering and content strategy. When you know which metrics actually matter, you write better prompts. Better prompts produce better content. It’s a virtuous cycle — and once it clicks, it clicks hard.

Organizations like the National Institute of Standards and Technology (NIST) have been developing AI evaluation standards for years. Their work on AI trustworthiness provides a foundation that content-specific frameworks build upon. The concept isn’t new; it’s just finally reaching the content marketing teams who need it most.

The Core Metrics That Define AI Content Quality

Not all metrics carry equal weight. The best evaluation frameworks balance multiple dimensions at once, and I’ve seen teams get this wrong by over-indexing on just one or two.

Here are the categories that matter most.

1. Accuracy and factual correctness

This is non-negotiable — full stop. AI models hallucinate with alarming confidence. They invent statistics, misattribute quotes, and state wrong information like they’re reading from a textbook. Your framework needs a binary or scaled accuracy check on every piece. Every claim should be verifiable. (I once caught a piece that cited a “2023 Harvard study” that simply didn’t exist. The model made it up completely.)

2. Readability and clarity

Tools like Hemingway Editor can measure reading level automatically, which is a solid starting point. Nevertheless, readability goes beyond grade level. Does the content flow logically? Are paragraphs tightly focused? Do transitions actually connect ideas, or just signal that a new sentence is starting?

3. Originality and uniqueness

AI content often sounds generic. Notably, models tend to produce eerily similar outputs for similar prompts. Your framework should measure how distinct each piece is from competitors and from your own prior content. Originality scores below 3/5 are usually a prompt engineering problem, not a model problem.

4. SEO alignment

Does the content target the right keywords? Is the structure optimized for featured snippets? Are headings properly nested? These are measurable, objective criteria that belong in any evaluation framework, and they’re some of the easiest to automate.

5. Brand voice consistency

This is harder to quantify but equally important. Create a voice rubric with specific attributes — formal vs. casual, technical vs. accessible, authoritative vs. conversational. Score each piece against it. This surprised me when I first built one: even small rubric details make a massive difference in scorer agreement.

6. Engagement potential

Will readers actually care? Look at hook strength, emotional pull, and actionable takeaways. Although this is subjective, you can standardize it with clear scoring criteria. Fair warning: this is the metric reviewers argue about most, so define it carefully upfront.

7. Structural completeness

Does the piece have a clear introduction, logical body sections, and a strong conclusion? Are there enough supporting details? Is the content complete for its target keyword, or does it feel like a first draft that stopped too early?

Here’s how these metrics typically break down in a scoring framework:

| Metric Category | Weight | Scoring Range | Measurement Method |
|---|---|---|---|
| Accuracy | 25% | 1–5 | Manual fact-check |
| Readability | 15% | 1–5 | Automated tools + human review |
| Originality | 15% | 1–5 | Plagiarism check + manual assessment |
| SEO Alignment | 15% | 1–5 | SEO audit tools |
| Brand Voice | 15% | 1–5 | Rubric-based human scoring |
| Engagement Potential | 10% | 1–5 | Editorial judgment |
| Structural Completeness | 5% | 1–5 | Checklist-based review |

You can adjust these weights based on your priorities. Similarly, some teams add or remove categories depending on content type. The key is consistency — pick your weights and stick with them long enough to gather meaningful trend data.
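To make the weighting concrete, here is a minimal Python sketch that turns seven 1–5 ratings into a single weighted score using the weights from the table. The `WEIGHTS` keys, the `weighted_score` function, and the example ratings are illustrative assumptions, not part of any standard tool.

```python
# Weights from the table above, expressed as fractions that sum to 1.0.
WEIGHTS = {
    "accuracy": 0.25,
    "readability": 0.15,
    "originality": 0.15,
    "seo_alignment": 0.15,
    "brand_voice": 0.15,
    "engagement": 0.10,
    "structure": 0.05,
}


def weighted_score(scores: dict[str, float],
                   weights: dict[str, float] = WEIGHTS) -> float:
    """Return the weighted average of 1-5 metric scores."""
    missing = set(weights) - set(scores)
    if missing:
        raise ValueError(f"missing scores for: {sorted(missing)}")
    for name in weights:
        if not 1 <= scores[name] <= 5:
            raise ValueError(f"{name} must be between 1 and 5")
    # Dividing by the total keeps the result on the 1-5 scale even if
    # you later adjust the weights and they no longer sum to exactly 1.
    total_weight = sum(weights.values())
    return sum(scores[name] * weights[name] for name in weights) / total_weight


# Hypothetical ratings for a single blog post.
example = {
    "accuracy": 4, "readability": 5, "originality": 3, "seo_alignment": 4,
    "brand_voice": 4, "engagement": 3, "structure": 5,
}
print(round(weighted_score(example), 2))
```

Because accuracy carries the largest weight, a one-point drop there moves the overall score five times as much as a one-point drop in structural completeness.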

Building Your Own Evaluation Metrics System

Theory is great. Implementation is what actually matters. Here’s a practical, step-by-step approach to building your own evaluation process, one that won’t collapse the moment a second person tries to use it.

Step 1: Define your content types

Blog posts need different criteria than product descriptions. Email copy differs from whitepapers. Start by listing every content type you produce with AI, then decide which metrics apply to each. Don’t force a blog rubric onto a product page — it’ll produce garbage scores.

Step 2: Create scoring rubrics

Vague criteria produce vague results. Instead of “Is the content good?”, define what a 5 looks like versus a 3. For example:

- Accuracy 5/5: Every factual claim is verified. Sources are cited where appropriate. No hallucinations detected.

- Accuracy 3/5: Most facts are correct. One or two minor inaccuracies found. No critical errors.

- Accuracy 1/5: Multiple factual errors. Hallucinated statistics or sources present. Requires a complete rewrite.
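One way to keep rubric wording identical for every reviewer is to store it as data rather than in someone’s memory. The sketch below encodes the three accuracy levels above in Python. The `ACCURACY_RUBRIC` name and the `describe_level` helper are hypothetical, and levels 2 and 4 are left out on purpose because the examples above don’t define them.

```python
# Accuracy rubric levels taken from the examples above.
# Levels 2 and 4 are not defined in the text, so they are omitted here.
ACCURACY_RUBRIC = {
    5: "Every factual claim is verified. Sources are cited where appropriate. "
       "No hallucinations detected.",
    3: "Most facts are correct. One or two minor inaccuracies found. "
       "No critical errors.",
    1: "Multiple factual errors. Hallucinated statistics or sources present. "
       "Requires a complete rewrite.",
}


def describe_level(rubric: dict[int, str], score: int) -> str:
    """Return the rubric text for a score, or a reminder to define it."""
    if not 1 <= score <= 5:
        raise ValueError("score must be between 1 and 5")
    return rubric.get(score, "No definition yet: add one before scoring at this level.")


print(describe_level(ACCURACY_RUBRIC, 3))
```

Writing out the missing levels is a useful test of the rubric itself: if the team can’t agree on what separates a 3 from a 4, reviewers won’t either.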

Step 3: Choose your tools

You don’t need to build everything from scratch. I’ve tested dozens of tool combinations — here’s what actually works:

- Grammarly for grammar and clarity scoring

- Originality.ai for AI detection and plagiarism checks

- Google Search Central guidelines for SEO best practices

- Spreadsheets or project management tools for tracking scores over time

Step 4: Establish baseline scores

Run your current AI content through the framework before you change anything. This gives you a real starting point. Moreover, it reveals immediate problem areas — most teams find that accuracy and originality are their weakest metrics right out of the gate. That’s normal. The point is knowing.
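A baseline can be as simple as a per-metric average across the pieces you have already scored. The following sketch assumes each piece is a dictionary of 1–5 scores. The `pieces` data and the `baseline` function are made up for illustration.

```python
from statistics import mean

# Hypothetical 1-5 scores for three existing pieces; replace with your own data.
pieces = [
    {"accuracy": 3, "readability": 4, "originality": 2, "seo_alignment": 4},
    {"accuracy": 2, "readability": 5, "originality": 3, "seo_alignment": 4},
    {"accuracy": 4, "readability": 4, "originality": 3, "seo_alignment": 3},
]


def baseline(scored_pieces: list[dict[str, float]]) -> dict[str, float]:
    """Average each metric across all scored pieces."""
    if not scored_pieces:
        raise ValueError("need at least one scored piece")
    metrics = scored_pieces[0].keys()
    return {m: mean(p[m] for p in scored_pieces) for m in metrics}


averages = baseline(pieces)
# Sort ascending so the weakest metrics come first.
weakest_first = sorted(averages, key=averages.get)
print(weakest_first)
```

Saving these averages with a date gives you the reference point that every later prompt or process change gets compared against.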

Step 5: Create feedback loops

The framework isn’t a one-time exercise. Use scores to improve your prompts, refine your processes, and train your team. Track trends monthly — are scores actually improving? Which metrics keep lagging? The real kicker is that this data usually points to one or two fixable issues driving most of your quality problems.

Step 6: Calibrate among reviewers

If multiple people score content, you need calibration sessions. Have everyone score the same piece independently, compare results, and dig into disagreements. This ensures your scores reflect the content rather than whoever happened to review that piece.

Additionally, document everything. A framework that lives only in someone’s head isn’t a framework — it’s just an opinion with extra steps.

How AI Content Scales Across Models and Use Cases

One of the most valuable uses of an evaluation framework is model comparison. Different tools genuinely excel at different things, and a structured evaluation makes those differences visible instead of anecdotal.

Model-to-model comparison

GPT-4o might score higher on creativity and engagement. Claude might win on accuracy and instruction-following. Gemini might edge ahead on technical content. Without a framework, these differences stay in the “I feel like Claude is better” category. With one, they become decisions you can act on. I’ve seen teams switch models — or start using different models for different content types — based purely on this data. That’s worth something.

Here’s what a typical model comparison might reveal:

| Evaluation Metric | GPT-4o | Claude 3.5 Sonnet | Gemini 1.5 Pro |
|---|---|---|---|
| Accuracy | 3.8 | 4.2 | 4.0 |
| Readability | 4.3 | 4.1 | 3.9 |
| Originality | 4.0 | 3.7 | 3.5 |
| SEO Alignment | 3.5 | 3.9 | 3.6 |
| Brand Voice | 3.8 | 4.0 | 3.4 |
| Engagement | 4.2 | 3.8 | 3.6 |

Note: These scores are illustrative examples, not benchmarks. Your results will vary based on prompts, use cases, and evaluation criteria.
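To turn a comparison table like this into a decision, you can compute one overall score per model and also see which model leads on each metric. The sketch below uses the illustrative numbers from the table together with the weights from the earlier scoring table. Structural completeness isn’t in the comparison, so the remaining weights are renormalized. The function names are assumptions, and the resulting ranking is only as meaningful as the made-up scores behind it.

```python
# Illustrative scores copied from the table above (not benchmarks).
MODEL_SCORES = {
    "GPT-4o": {"accuracy": 3.8, "readability": 4.3, "originality": 4.0,
               "seo_alignment": 3.5, "brand_voice": 3.8, "engagement": 4.2},
    "Claude 3.5 Sonnet": {"accuracy": 4.2, "readability": 4.1, "originality": 3.7,
                          "seo_alignment": 3.9, "brand_voice": 4.0, "engagement": 3.8},
    "Gemini 1.5 Pro": {"accuracy": 4.0, "readability": 3.9, "originality": 3.5,
                       "seo_alignment": 3.6, "brand_voice": 3.4, "engagement": 3.6},
}

# Weights from the scoring table, minus structural completeness.
COMPARISON_WEIGHTS = {"accuracy": 0.25, "readability": 0.15, "originality": 0.15,
                      "seo_alignment": 0.15, "brand_voice": 0.15, "engagement": 0.10}


def overall(scores: dict[str, float],
            weights: dict[str, float] = COMPARISON_WEIGHTS) -> float:
    """Weighted average, renormalized so the weights in use sum to 1."""
    total = sum(weights.values())
    return sum(scores[m] * w for m, w in weights.items()) / total


def best_per_metric(model_scores: dict[str, dict[str, float]]) -> dict[str, str]:
    """Map each metric to the name of the model with the highest score."""
    metrics = next(iter(model_scores.values())).keys()
    return {m: max(model_scores, key=lambda name: model_scores[name][m])
            for m in metrics}


ranking = sorted(MODEL_SCORES, key=lambda name: overall(MODEL_SCORES[name]),
                 reverse=True)
leaders = best_per_metric(MODEL_SCORES)
print(ranking)
print(leaders)
```

The per-metric leaders are often more useful than the overall ranking, since they point toward using different models for different content types.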

Use-case-specific scaling

Content type dramatically affects quality scores, so your framework should track performance by use case, not just overall. AI might produce solid first-draft blog posts but fall apart on case studies. It might nail social media copy but struggle badly with technical documentation. These patterns tend to repeat consistently once you have enough data to see them.

Prompt quality impact

Here’s where evaluation metrics connect directly to prompt engineering, and this matters. Better prompts tend to produce higher scores across the board. Specifically, prompts that include these elements tend to outperform vague ones:

- Clear role definitions

- Specific output requirements

- Examples of desired quality

- Constraints and guardrails

- Target audience descriptions

The OpenAI Prompt Engineering Guide offers excellent starting points. Meanwhile, testing prompt variations against your framework metrics shows exactly which elements drive the biggest quality improvements — and the answers sometimes surprise you.

Scaling considerations

As volume increases, quality tends to drop. Your framework should track this relationship clearly. If you’re producing 10 pieces per week at an average score of 4.2, what happens at 50 pieces? At 100? Understanding that curve helps you staff appropriately and set realistic expectations before you hit a quality cliff.

Advanced Evaluation Techniques at Scale

Once you’ve got the basics running smoothly, several advanced techniques can sharpen your evaluation framework further. These aren’t required on day one, but they’re worth building toward.

A/B testing with real performance data

Don’t just score content in isolation — measure how it actually performs. Track organic traffic, time on page, bounce rate, and conversions. Then connect those outcomes to your framework scores. This shows whether your metrics actually predict success, or whether you’ve been measuring the wrong things. (This step has humbled me more than once, honestly.)

Automated scoring pipelines

Manual evaluation doesn’t scale past a certain point. Importantly, you can automate a meaningful chunk of it. Readability scores, keyword density, content length, and structural checks can all run automatically. Reserve human evaluation for the genuinely subjective stuff: brand voice, engagement potential, and nuanced accuracy checks.
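As a rough idea of what the automated part can look like, the sketch below computes word count, average sentence length, and keyword density with nothing but regular expressions. The `automated_checks` function, the sample text, and the keyword are invented for illustration. The sentence splitting is deliberately naive (it will miscount decimals and abbreviations), so treat the numbers as screening signals rather than precise measurements.

```python
import re


def automated_checks(text: str, keyword: str) -> dict[str, float]:
    """Compute a few simple, automatable signals for a draft."""
    lowered = text.lower()
    words = re.findall(r"[a-z0-9']+", lowered)
    # Naive sentence split on ., ! and ? (good enough for a first screen).
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    # Substring match, so "content audits" also counts as a hit for "content audit".
    keyword_hits = len(re.findall(re.escape(keyword.lower()), lowered))
    keyword_words = len(keyword.split())
    return {
        "word_count": len(words),
        "avg_sentence_length": len(words) / len(sentences) if sentences else 0.0,
        # Share of all words that belong to an occurrence of the keyword phrase.
        "keyword_density": keyword_hits * keyword_words / len(words) if words else 0.0,
    }


sample = "Content audits matter. A content audit finds weak pages. Run one quarterly!"
report = automated_checks(sample, "content audit")
print(report)
```

Checks like these can run on every draft before a human sees it, so reviewers spend their time on voice, engagement, and fact-checking.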

Regression analysis

Which metrics most strongly predict content performance? Regression analysis can answer this. You might find that readability and accuracy together explain 70% of your traffic variance. Consequently, you’d increase their weight in your framework. This turns your evaluation system from a quality gate into an actual predictive tool.
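A simple first pass, before reaching for a statistics package, is to check how much of the variation in a performance number each metric explains on its own. The sketch below computes R² for a one-variable least-squares fit, which equals the squared Pearson correlation. All data is hypothetical. Measuring what several metrics explain together, as in the 70% example, requires multiple regression, which this sketch does not attempt.

```python
def r_squared(xs: list[float], ys: list[float]) -> float:
    """R^2 of a one-variable least-squares fit (squared Pearson correlation)."""
    n = len(xs)
    if n != len(ys) or n < 2:
        raise ValueError("need two equal-length series with at least 2 points")
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant series explains nothing
    return (sxy * sxy) / (sxx * syy)


# Hypothetical data: metric scores and monthly visits for five articles.
metric_scores = {
    "readability": [3, 4, 4, 5, 2],
    "originality": [4, 3, 5, 3, 4],
}
monthly_visits = [300, 400, 400, 500, 200]

explained = {m: r_squared(xs, monthly_visits) for m, xs in metric_scores.items()}
# Metrics that explain the most variance come first.
ranked = sorted(explained, key=explained.get, reverse=True)
print(ranked)
```

With only a handful of articles the numbers are unstable, so it is safer to wait for a larger sample before changing weights based on them.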

Inter-rater reliability testing

If your framework depends on human scorers, you need to measure how consistently they agree. Cohen’s kappa is the standard measure for two raters (Fleiss’ kappa extends the idea to three or more). A value of 1.0 means perfect agreement, and 0 means agreement no better than chance. Values below 0.6 suggest your rubrics need work. Values above 0.8 mean you’ve built something genuinely reliable, and that’s harder to achieve than it sounds.
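For two reviewers, the calculation is short enough to write by hand. The following is a minimal sketch of unweighted Cohen’s kappa in Python. The reviewer names and scores are invented for illustration.

```python
from collections import Counter


def cohens_kappa(rater_a: list[int], rater_b: list[int]) -> float:
    """Unweighted Cohen's kappa for two raters scoring the same items."""
    if len(rater_a) != len(rater_b) or not rater_a:
        raise ValueError("both raters must score the same non-empty set of items")
    n = len(rater_a)
    # Observed agreement: share of items where both gave the same score.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    # Chance agreement: probability both pick the same score independently.
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        return 1.0  # both raters used one identical score for everything
    return (observed - expected) / (1 - expected)


# Hypothetical brand-voice scores from two reviewers on the same eight pieces.
editor_scores = [5, 4, 3, 4, 2, 5, 3, 4]
seo_lead_scores = [5, 4, 3, 3, 2, 5, 4, 4]
kappa = cohens_kappa(editor_scores, seo_lead_scores)
print(round(kappa, 2))
```

Unweighted kappa treats a 4-versus-5 disagreement the same as a 1-versus-5 disagreement. For 1–5 rubrics, a weighted variant that gives partial credit for near misses may be a better fit.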

Temporal drift monitoring

AI models change over time. OpenAI, Anthropic, and Google update their models regularly. Although these updates often improve overall performance, they can shift output in unexpected ways — sometimes in ways that hurt your specific use case. Run benchmark prompts monthly and compare scores. Your framework should catch these shifts before they quietly erode your content quality.
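A monthly drift check can be a few lines: score the same benchmark prompts each month and flag any drop in the average beyond a tolerance you choose. In this sketch, the `detect_drift` function, the 0.3-point tolerance, and the scores are all assumptions for illustration.

```python
from statistics import mean


def detect_drift(previous: list[float], current: list[float],
                 tolerance: float = 0.3) -> bool:
    """Flag drift when the average benchmark score drops by more than `tolerance`."""
    if not previous or not current:
        raise ValueError("both score lists must be non-empty")
    return mean(previous) - mean(current) > tolerance


# Hypothetical overall scores for the same three benchmark prompts.
last_month = [4.2, 4.0, 4.4]
this_month = [3.8, 3.6, 3.9]
drifted = detect_drift(last_month, this_month)
print(drifted)
```

Keeping the benchmark prompts and rubric fixed between runs matters here. Otherwise a change in the score could come from your own process rather than from the model.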

Content decay tracking

Even high-scoring content degrades. Facts go stale, search trends shift, and competitors publish better material. Build content refresh triggers into your evaluation system. When scores for existing content drop below a threshold, flag it for updates. This feature feels unnecessary until suddenly it isn’t.

Practical tools for advanced evaluation

- Use Ahrefs or similar platforms to connect content scores with actual search performance

- Build dashboards that show score trends across models, content types, and time periods

- Set up automated alerts when average scores drop below acceptable thresholds

Nevertheless, don’t over-engineer this. Start simple. Add complexity only when basic metrics stop giving you useful insights — otherwise you’ll build a system nobody actually uses.

Conclusion

Building an effective evaluation framework isn’t optional anymore. It’s essential for any team producing AI content at scale. The frameworks, metrics, and techniques covered here give you a concrete starting point, not a theoretical one.

Here are your actionable next steps:

1. Start with the seven core metrics — accuracy, readability, originality, SEO alignment, brand voice, engagement, and structure

2. Create detailed scoring rubrics for each metric with clear definitions at every score level

3. Benchmark your current content to establish real baselines before changing anything

4. Compare AI models using your framework to match the right tools to the right use cases

5. Automate what you can and reserve human judgment for genuinely subjective criteria

6. Track and iterate monthly. Your evaluation metrics should evolve as your needs change

The teams winning at AI content aren’t the ones producing the most. They’re the ones producing the best — consistently, measurably, and at scale. Importantly, that consistency doesn’t happen by accident. A solid evaluation framework is what makes it repeatable.

FAQ

What exactly is an AI content scale framework?

An AI content scale framework is a structured system for scoring and evaluating AI-generated content. It defines specific quality metrics, assigns weights to each one, and provides rubrics for consistent scoring. Think of it as a report card for your AI content — it turns subjective quality judgments into repeatable, data-driven assessments that teams can actually use to improve output over time. Moreover, it gives everyone on your team a shared language for what “good” means.

How many metrics should my framework include?

Start with five to seven core metrics. That’s enough to capture meaningful quality differences without overwhelming your team. Specifically, accuracy, readability, originality, SEO alignment, and brand voice cover the essentials — and honestly, you can get a lot of mileage from just those five. You can add more metrics later as your process matures. However, more isn’t always better — each metric you add increases evaluation time and complexity, so add them deliberately.

Can I fully automate AI content quality evaluation?

As a rough rule of thumb, you can automate around 40–60% of the evaluation process, depending on which metrics you track. Readability scores, grammar checks, plagiarism detection, keyword analysis, and structural checks all lend themselves well to automation. Conversely, metrics like brand voice consistency, factual accuracy, and engagement potential still need human judgment, and probably will for some time. The best approach combines automated screening with targeted human review for the subjective stuff.

How often should I update my evaluation metrics?

Review your framework quarterly at minimum. Additionally, trigger a review whenever you adopt a new AI model, change your content strategy, or notice a growing gap between your scores and actual content performance. AI models update frequently — sometimes in ways that meaningfully shift output quality — and your evaluation criteria should keep pace. Stale frameworks produce misleading scores, which is arguably worse than no framework at all.

Which AI model scores highest across evaluation frameworks?

No single model wins across all metrics and use cases, and anyone telling you otherwise is probably selling something. Performance depends heavily on your specific prompts, content types, and evaluation criteria. Furthermore, models improve rapidly, and today’s leader might fall behind next quarter after an update. That’s precisely why having your own evaluation framework matters. It gives you current, personalized data rather than forcing you to rely on generic benchmarks that may not reflect your reality at all.

How do I get my team to actually use the framework?

Make it easy and make it matter. Keep the scoring process under 10 minutes per piece — if it takes longer, people will find reasons to skip it. Integrate it into existing workflows rather than bolting on a separate step. Importantly, tie framework scores to team goals and content approval processes. When scores determine whether content gets published, people pay attention fast. Also, share wins openly — celebrate when average scores improve and show the team how better scores connect to better real-world content performance. That connection is what makes it stick.