Running large language models in production is expensive. Really expensive. 4-bit GPTQ quantization changes that equation dramatically: it lets you shrink a 30-billion-parameter model to fit on a single consumer GPU.

If you’ve been watching the open-source AI space, you’ve seen quantized models everywhere, and GPTQ in particular has become the go-to method for compressing LLMs without destroying their quality. But does it actually work in practice? Mostly, yes, with some caveats worth understanding before you commit.

This guide covers the full methodology behind 4-bit GPTQ quantization. You’ll learn the math, see real code, compare benchmarks, and walk away with production-ready best practices.

What Is GPTQ and Why Does It Matter for 4-Bit Model Optimization?

GPTQ stands for Generative Pre-trained Transformer Quantization. Researchers at IST Austria and ETH Zurich introduced it in their 2022 paper, and honestly, it landed quietly before the community realized how important it was.

The core idea

Simpler methods such as round-to-nearest (RTN) quantize each weight independently: blunt, simple, and effective enough for small models. GPTQ takes a smarter approach. It quantizes weights one column at a time and, after each column, adjusts the columns that haven’t been quantized yet to compensate for the error it just introduced. As a result, the accumulated error stays remarkably small.

Here’s what makes GPTQ special for 4-bit model optimization:

Furthermore, GPTQ doesn’t require retraining. You take a pre-trained model, run the quantization algorithm, and get a compressed version ready for inference. I’ve tested dozens of compression approaches over the years, and this one delivers consistent results without the usual drama.

Why 4-bit specifically?

In most open LLM checkpoints, each weight is stored as a 16-bit floating-point number (FP16 or BF16). Dropping to 4 bits means each weight uses 75% less memory. For a 70B-parameter model like LLaMA 2 70B, that’s the difference between needing about 140 GB of VRAM just for the weights and needing roughly 35 GB.
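The arithmetic behind these figures is easy to check yourself. The sketch below counts weight storage only (parameters × bits per weight ÷ 8) and ignores group scales, activations, and the KV cache, so treat its results as lower bounds; `weight_memory_gb` is a hypothetical helper name.

```python
def weight_memory_gb(n_params: float, bits_per_weight: float) -> float:
    """Weight-only storage in gigabytes (1 GB = 1e9 bytes).

    Ignores quantization metadata (scales, zero points), activations, and the KV cache.
    """
    return n_params * bits_per_weight / 8 / 1e9


for name, n_params in [("7B", 7e9), ("70B", 70e9)]:
    fp16 = weight_memory_gb(n_params, 16)
    int8 = weight_memory_gb(n_params, 8)
    int4 = weight_memory_gb(n_params, 4)
    print(f"{name}: FP16 {fp16:.1f} GB, 8-bit {int8:.1f} GB, 4-bit {int4:.1f} GB "
          f"({1 - int4 / fp16:.0%} smaller than FP16)")
```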

Moreover, 4-bit is the sweet spot where compression and quality intersect. Going to 3-bit or 2-bit causes noticeable degradation — I’ve tried it, and the outputs get weird fast. Meanwhile, 8-bit doesn’t save enough memory for many production scenarios where you’re genuinely trying to cut costs.

This surprised me when I first dug into the numbers: the quality difference between 4-bit and 16-bit is often smaller than the difference between two different prompting strategies.
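To see why each bit you remove hurts more than the last, here’s a minimal NumPy sketch of plain round-to-nearest quantization with a single min-max scale, applied to a synthetic Gaussian tensor. It measures raw weight error only, not model quality, and it is not GPTQ; it just shows that the step size between grid levels, and with it the error, grows several-fold with every bit you drop.

```python
import numpy as np


def rtn_error(w, bits):
    """Mean squared error of min-max round-to-nearest quantization at a given bit width."""
    steps = 2 ** bits - 1                     # number of intervals between grid levels
    scale = (w.max() - w.min()) / steps
    q = np.round((w - w.min()) / scale)       # integers 0..steps
    return float(np.mean((w - (q * scale + w.min())) ** 2))


rng = np.random.default_rng(0)
w = rng.normal(size=100_000)                  # synthetic stand-in for one weight tensor
for bits in (8, 4, 3, 2):
    print(f"{bits} bits ({2 ** bits} levels): MSE {rtn_error(w, bits):.5f}")
```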

How GPTQ 4-Bit Quantization Works Under the Hood

Understanding the algorithm helps you make better deployment decisions. Here’s a step-by-step breakdown — no PhD required.

Step 1: Calibration

GPTQ needs a small calibration dataset — typically 128 to 1,024 samples. It passes this data through the model to capture activation statistics. These statistics then guide the entire quantization process.

Heads up: the quality of your calibration data matters enormously. Domain-mismatched calibration samples are one of the most common reasons people see worse-than-expected results.
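One common recipe, and the one the GPTQ paper used (128 random 2048-token segments from C4), is to sample fixed-length windows from a long stream of tokenized text. Here’s a sketch of that sampling step in plain Python; `sample_calibration_windows` is a hypothetical helper, and it assumes you’ve already tokenized your own in-domain corpus into a flat list of token ids.

```python
import random


def sample_calibration_windows(token_ids, n_samples=128, seq_len=2048, seed=0):
    """Draw n_samples random, contiguous, fixed-length windows from one token stream.

    token_ids: flat list of token ids from (ideally in-domain) text.
    Returns a list of lists, each exactly seq_len tokens long.
    """
    if len(token_ids) < seq_len:
        raise ValueError("need at least seq_len tokens of calibration text")
    rng = random.Random(seed)  # fixed seed so calibration is reproducible
    windows = []
    for _ in range(n_samples):
        start = rng.randint(0, len(token_ids) - seq_len)  # upper bound is inclusive
        windows.append(token_ids[start:start + seq_len])
    return windows


# Toy usage with fake token ids; in practice these come from your tokenizer.
windows = sample_calibration_windows(list(range(10_000)), n_samples=4, seq_len=16)
print([w[0] for w in windows])
```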

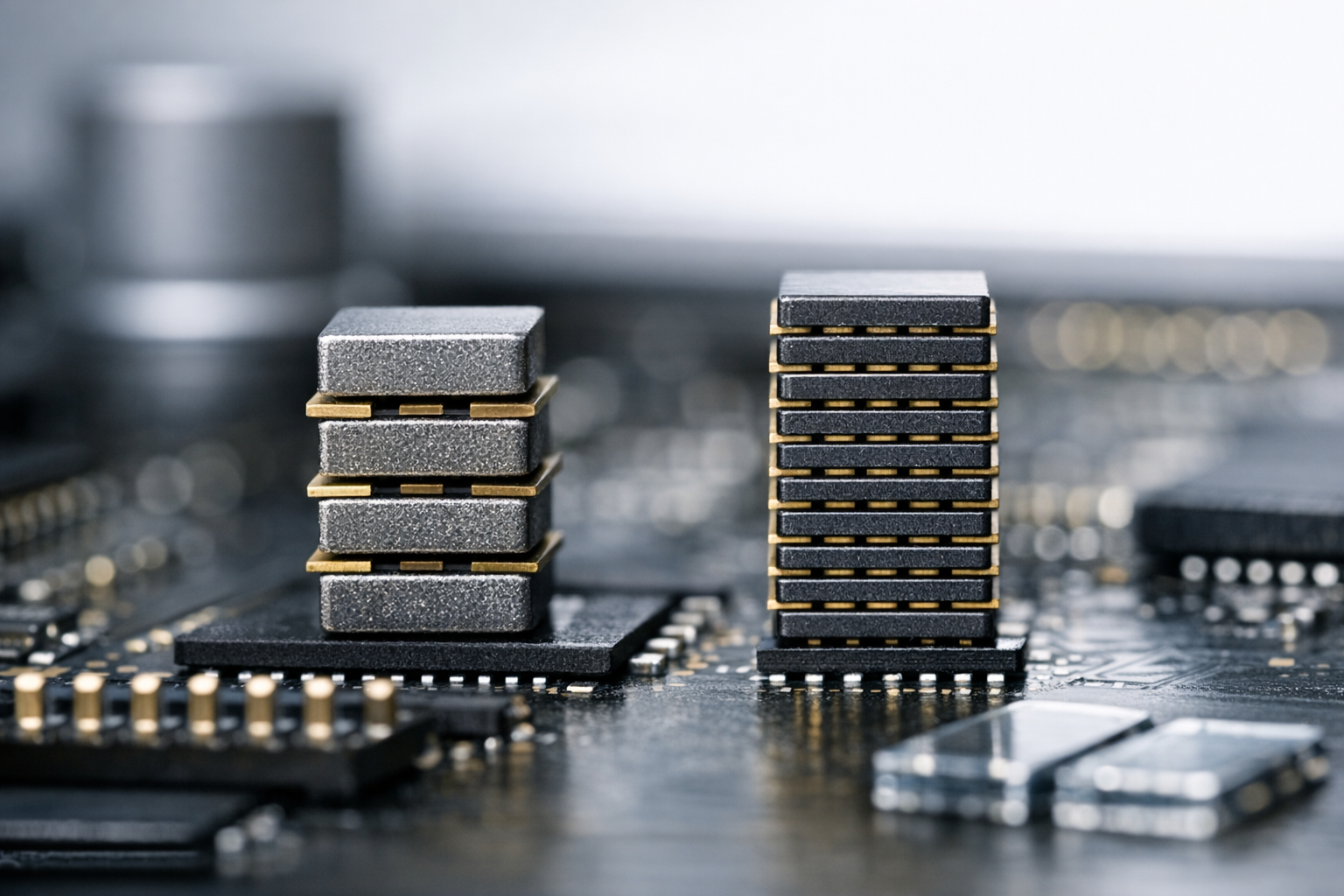

Step 2: Hessian computation

For each layer, GPTQ computes a Hessian matrix of the layer’s reconstruction error from the calibration inputs: H = 2XXᵀ, where X holds the layer’s input activations. This matrix describes how sensitive the layer’s output is to changes in each weight, and how errors in different weights interact. GPTQ uses it to decide how to redistribute each quantization error. That’s the key insight separating GPTQ from simpler methods: it doesn’t treat all weights equally.
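For a linear layer, this sensitivity is exact rather than a loose heuristic. Storing the n calibration inputs as rows (so 2XXᵀ becomes (2/n)·XᵀX), the quadratic form ½·tr(ΔW H ΔWᵀ) equals the squared change in the layer’s output, summed over output channels and averaged over calibration rows, for any weight perturbation ΔW. The NumPy sketch below checks that on random data; the shapes and random inputs are illustrative assumptions.

```python
import numpy as np

rng = np.random.default_rng(0)
n_samples, d_in, d_out = 256, 16, 8

X = rng.normal(size=(n_samples, d_in))  # calibration inputs to one linear layer, one row per token

# Hessian of the layer-wise squared-error objective; every output row shares the same matrix.
H = 2.0 / n_samples * X.T @ X           # shape (d_in, d_in)

# A small weight perturbation, standing in for quantization error (layer computes X @ W.T).
delta = 0.01 * rng.normal(size=(d_out, d_in))

# Output change measured directly on the calibration data...
direct = np.sum((X @ delta.T) ** 2) / n_samples
# ...and predicted from the Hessian alone.
via_hessian = 0.5 * np.trace(delta @ H @ delta.T)
print(direct, via_hessian)  # equal up to floating-point rounding
```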

Step 3: Column-wise quantization with error compensation

This is where the real work happens. GPTQ processes weight columns one by one. After quantizing each column, it uses the inverse Hessian to update the remaining unquantized columns so they absorb as much of the resulting error as possible. Therefore, the final quantized layer closely matches the original layer’s behavior.

The real kicker is how elegant this is: it’s essentially the algorithm correcting its own compression mistakes as it goes.
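Steps 2 and 3 fit in a few dozen lines of NumPy. The sketch below follows the structure of the reference GPTQ procedure (upper Cholesky factor of the inverse Hessian, one column at a time, each column’s error pushed onto later columns) but drops the lazy block updates, grouping, and act-order reordering that real implementations add. `gptq_quantize` and the other helpers are hypothetical names, and the correlated AR(1) inputs are a synthetic stand-in for real activations.

```python
import numpy as np


def grid_params(W, maxq=15):
    """Per-row (per output channel) min-max scale and zero point for a 4-bit grid."""
    wmin = np.minimum(W.min(axis=1), 0.0)
    wmax = np.maximum(W.max(axis=1), 0.0)
    scale = (wmax - wmin) / maxq
    scale[scale == 0] = 1.0  # all-zero rows: any positive scale works
    zero = np.round(-wmin / scale)
    return scale, zero


def quantize_rtn(w, scale, zero, maxq=15):
    """Round onto the integer grid 0..maxq, then map back to real values."""
    q = np.clip(np.round(w / scale) + zero, 0, maxq)
    return scale * (q - zero)


def gptq_quantize(W, X, damp_percent=0.01, maxq=15):
    """GPTQ-style quantization of W (d_out x d_in) given calibration inputs X (n x d_in)."""
    W = W.astype(np.float64)  # astype copies, so the caller's W is untouched
    H = 2.0 / X.shape[0] * X.T @ X
    H += damp_percent * np.mean(np.diag(H)) * np.eye(H.shape[0])  # dampening for stability
    # Upper Cholesky factor U with inv(H) = U.T @ U.
    U = np.linalg.cholesky(np.linalg.inv(H)).T

    scale, zero = grid_params(W, maxq)  # grid is fixed from the original weights
    Q = np.zeros_like(W)
    for i in range(W.shape[1]):
        Q[:, i] = quantize_rtn(W[:, i], scale, zero, maxq)
        err = (W[:, i] - Q[:, i]) / U[i, i]
        # Compensate: push this column's error onto the columns not quantized yet.
        W[:, i + 1:] -= np.outer(err, U[i, i + 1:])
    return Q


# Synthetic, correlated calibration inputs (AR(1) across features) and random weights.
rng = np.random.default_rng(0)
n, d_in, d_out, rho = 512, 64, 32, 0.9
X = np.empty((n, d_in))
X[:, 0] = rng.normal(size=n)
for j in range(1, d_in):
    X[:, j] = rho * X[:, j - 1] + np.sqrt(1 - rho**2) * rng.normal(size=n)
W = rng.normal(size=(d_out, d_in))

scale, zero = grid_params(W)
Q_rtn = quantize_rtn(W, scale[:, None], zero[:, None])
Q_gptq = gptq_quantize(W, X)


def output_error(Q):
    """Mean squared difference between original and quantized layer outputs."""
    return float(np.mean((X @ W.T - X @ Q.T) ** 2))


print("RTN  output MSE:", output_error(Q_rtn))
print("GPTQ output MSE:", output_error(Q_gptq))
```

Both versions use the same 4-bit grid, yet on correlated inputs like these GPTQ’s output error should come out well below RTN’s. On perfectly uncorrelated inputs the Hessian is diagonal, no compensation happens, and the two behave almost identically.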

Step 4: Packing

The quantized weights get packed into efficient integer formats. Specifically, 4-bit GPTQ packs eight weights into a single 32-bit integer, enabling fast memory access during inference.

The result? A model whose weights are roughly 4x smaller, with minimal quality loss. Notably, perplexity increases by only 0.5–1.0 points on most benchmarks, a number that looks alarming until you realize how little it affects real-world outputs.
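The packing in Step 4 is plain bit manipulation. Here’s a NumPy sketch that stores eight 4-bit integers in one `uint32` (4 bytes for 8 weights, so half a byte per weight) and unpacks them again. Real kernels use their own bit order and tensor layout, so treat `pack_int4` and `unpack_int4` as hypothetical illustrations, not any library’s actual format.

```python
import numpy as np

SHIFTS = np.arange(8, dtype=np.uint32) * 4  # bit offsets 0, 4, ..., 28


def pack_int4(values):
    """Pack 4-bit integers (0..15), count divisible by 8, into uint32 words."""
    v = np.asarray(values, dtype=np.uint32).reshape(-1, 8)
    if v.max(initial=0) > 15:
        raise ValueError("values must fit in 4 bits")
    # Shift each nibble into its slot, then OR the eight slots together.
    return np.bitwise_or.reduce(v << SHIFTS, axis=1)


def unpack_int4(words):
    """Inverse of pack_int4: expand each uint32 word back into eight 4-bit integers."""
    w = np.asarray(words, dtype=np.uint32)[:, None]
    return ((w >> SHIFTS) & 0xF).reshape(-1)


vals = np.array([1, 2, 3, 4, 5, 6, 7, 15, 0, 9, 10, 11, 12, 13, 14, 8])
packed = pack_int4(vals)
print(packed.dtype, packed.shape, unpack_int4(packed))
```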

4-Bit vs. 8-Bit Quantization: A Detailed Comparison

Choosing between 4-bit and 8-bit quantization isn’t always straightforward. Here’s a full comparison to guide your decision.

| Feature | 4-Bit GPTQ | 8-Bit (bitsandbytes) | FP16 (No Quantization) |
|---|---|---|---|
| Memory reduction | ~75% | ~50% | Baseline |
| Perplexity increase | 0.5–1.0 | 0.1–0.3 | 0.0 |
| Inference speed | 2–3x faster* | 1.5–2x faster* | Baseline |
| VRAM for weights, 7B model | ~4 GB | ~7 GB | ~14 GB |
| VRAM for weights, 70B model | ~35 GB | ~70 GB | ~140 GB |
| Fine-tuning support | Yes (LoRA, QLoRA-style) | Yes (LoRA) | Yes |
| Calibration needed | Yes | No | No |
| Best use case | Production deployment | Development/testing | Training |

*Speed gains depend on hardware and batch size. Specifically, gains are largest on consumer GPUs with limited VRAM — don’t expect the same numbers on an A100 cluster.

Additionally, there’s a practical consideration many guides overlook. The 8-bit approach from bitsandbytes quantizes on the fly during loading, whereas GPTQ pre-quantizes the model. Consequently, GPTQ 4-bit models load faster and deliver more predictable performance — which matters a lot when you’re debugging a production incident at 2am.

When to choose 4-bit

When to choose 8-bit

Implementing GPTQ Quantization: Code Examples and Best Practices

Here’s how to set up 4-bit GPTQ quantization using popular tools. Fair warning: the first time through, there will probably be a CUDA version mismatch. Budget time for that.

Quantizing a model with AutoGPTQ

AutoGPTQ is the most widely used library for GPTQ quantization. Here’s a complete example:

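```python
from auto_gptq import AutoGPTQForCausalLM, BaseQuantizeConfig
from transformers import AutoTokenizer

model_name = "meta-llama/Llama-2-7b-hf"

quantize_config = BaseQuantizeConfig(
    bits=4,            # 4-bit weights
    group_size=128,    # one scale/zero point per group of 128 weights
    desc_act=False,    # act-order off: faster inference, slightly higher perplexity
    damp_percent=0.1,  # fraction of the mean Hessian diagonal added for numerical stability
)

tokenizer = AutoTokenizer.from_pretrained(model_name)
model = AutoGPTQForCausalLM.from_pretrained(
    model_name,
    quantize_config=quantize_config,
)

# Replace with your own in-domain samples (ideally 128 or more).
your_calibration_texts = [
    "Example document from your target domain.",
]

# Each example is a dict with input_ids and attention_mask.
calibration_data = [
    tokenizer(text, return_tensors="pt")
    for text in your_calibration_texts[:128]
]

# Run quantization
model.quantize(calibration_data)

# Save the quantized model
model.save_quantized("llama-2-7b-gptq-4bit")
```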

Loading a pre-quantized model with Transformers

Most practitioners use pre-quantized models from Hugging Face. Bottom line: unless you have a specific reason to quantize from scratch, just start here.

```python
from transformers import AutoModelForCausalLM, AutoTokenizer

model = AutoModelForCausalLM.from_pretrained(
    "TheBloke/Llama-2-7B-GPTQ",
    device_map="auto",
    trust_remote_code=False,
    revision="main",
)
tokenizer = AutoTokenizer.from_pretrained("TheBloke/Llama-2-7B-GPTQ")

prompt = "Explain quantum computing in simple terms:"
inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
outputs = model.generate(**inputs, max_new_tokens=256)
print(tokenizer.decode(outputs[0], skip_special_tokens=True))
```

Key configuration parameters

Getting the configuration right is crucial. These are the parameters that actually move the needle:

desc_act: keep it False for production. I learned this the hard way after wondering why my throughput was lower than benchmarks.

Performance Benchmarks and Real-World Trade-Offs

Numbers matter more than theory. Here’s what you can actually expect from 4-bit GPTQ models in practice.

Perplexity benchmarks

Perplexity measures how well a model predicts text — lower is better. These numbers come from community benchmarks on the WikiText-2 dataset:

Notably, larger models lose less quality from quantization. The 13B model’s perplexity increase is nearly half that of the 7B model. Therefore, 4-bit GPTQ works especially well for bigger models — which is convenient, because those are precisely the models where you most need the memory savings.
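Perplexity is the exponential of the average negative log-likelihood the model assigns to each actual next token. Here’s a minimal NumPy sketch, assuming you already have next-token logits from the model for a WikiText-2 window (the model call itself is omitted); comparing a quantized model against FP16 means running this on the same text for both. `perplexity_from_logits` is a hypothetical helper.

```python
import numpy as np


def perplexity_from_logits(logits, targets):
    """Perplexity = exp(mean negative log-likelihood of the target tokens).

    logits: array (seq_len, vocab_size) of next-token scores.
    targets: array (seq_len,) of the token ids that actually came next.
    """
    logits = np.asarray(logits, dtype=np.float64)
    # Numerically stable log-softmax over the vocabulary.
    shifted = logits - logits.max(axis=-1, keepdims=True)
    log_probs = shifted - np.log(np.exp(shifted).sum(axis=-1, keepdims=True))
    nll = -log_probs[np.arange(len(targets)), targets]
    return float(np.exp(nll.mean()))


# A model that is uniformly unsure over 50 tokens has perplexity 50.
print(perplexity_from_logits(np.zeros((5, 50)), np.array([0, 1, 2, 3, 4])))
```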

Inference speed

Speed improvements depend heavily on your setup. Nevertheless, here are general patterns worth knowing:

1. Memory-bound scenarios (single requests): 2–3x speedup from reduced memory bandwidth requirements

2. Compute-bound scenarios (large batches): Modest 1.2–1.5x speedup — don’t expect miracles here

3. CPU offloading scenarios: Massive speedups since less data moves between CPU and GPU
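The memory-bound case is easy to reason about: generating one token for a single request requires reading every weight once, so memory bandwidth divided by weight size caps tokens per second. The sketch below assumes a hypothetical GPU with about 1 TB/s of bandwidth and ignores KV-cache reads and dequantization overhead, which is why real-world speedups land below the 4x ceiling it computes.

```python
def max_decode_tokens_per_s(weight_gb: float, bandwidth_gb_s: float) -> float:
    """Upper bound on single-request decode speed if each token streams all weights from VRAM.

    Ignores KV-cache reads, kernel overheads, and dequantization cost, so real numbers are lower.
    """
    return bandwidth_gb_s / weight_gb


BANDWIDTH_GB_S = 1000.0  # hypothetical GPU with roughly 1 TB/s of memory bandwidth

fp16 = max_decode_tokens_per_s(14.0, BANDWIDTH_GB_S)  # 7B model in FP16
int4 = max_decode_tokens_per_s(3.5, BANDWIDTH_GB_S)   # same model in 4-bit GPTQ
print(f"FP16 ceiling: {fp16:.0f} tok/s, 4-bit ceiling: {int4:.0f} tok/s, ratio {int4 / fp16:.1f}x")
```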

Cost implications

Consider a production deployment serving a 70B model. Without GPTQ 4-bit optimization, you’d need at least two A100 80GB GPUs — roughly $4–6 per hour on cloud providers. With 4-bit quantization, a single A100 handles it. You’ve just cut your inference costs in half.

Similarly, consumer hardware becomes genuinely viable. An RTX 4090 with 24 GB VRAM can run a 4-bit quantized 30B model. That’s a $1,600 card running a model that previously required $30,000+ in hardware. I’ve done this myself and it’s still kind of wild to watch it work.
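A quick way to sanity-check these hardware claims is to compare weight size plus some headroom against available VRAM. In this sketch, `gpus_needed` is a hypothetical helper and the 10% default headroom is a placeholder assumption; KV-cache growth under real load can need much more, and splitting a model across GPUs is never perfectly even.

```python
import math


def gpus_needed(n_params: float, bits_per_weight: float, gpu_vram_gb: float,
                overhead: float = 0.1) -> int:
    """Minimum number of identical GPUs whose combined VRAM holds the weights plus headroom.

    overhead: fraction added on top of the weights for KV cache, activations, and
    framework buffers. The 10% default is a placeholder, not a measured value.
    """
    weights_gb = n_params * bits_per_weight / 8 / 1e9
    return math.ceil(weights_gb * (1 + overhead) / gpu_vram_gb)


print(gpus_needed(70e9, 16, 80))  # 70B, FP16, A100 80GB
print(gpus_needed(70e9, 4, 80))   # 70B, 4-bit GPTQ, A100 80GB
print(gpus_needed(30e9, 4, 24))   # 30B, 4-bit GPTQ, RTX 4090 (24 GB)
```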

Fine-Tuning Quantized Models: QLoRA and Beyond

One of the most significant developments around 4-bit GPTQ models is the ability to fine-tune them. QLoRA made this practical, and it’s genuinely one of the more exciting things to happen in open-source AI over the last couple of years.

How QLoRA works with GPTQ

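QLoRA combines 4-bit quantization with Low-Rank Adaptation (LoRA). The base model stays frozen in 4-bit precision while small trainable adapter layers operate in higher precision. Consequently, you can fine-tune a 65B model on a single 48 GB GPU, something that would’ve seemed absurd not long ago. The original QLoRA paper uses bitsandbytes’ 4-bit NormalFloat (NF4) format; GPTQ models are fine-tuned the same way, by attaching LoRA adapters through PEFT.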
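Mechanically, a LoRA adapter adds a low-rank update next to a frozen weight: y = xWᵀ + (α/r)·xAᵀBᵀ, with only A and B trained. The NumPy sketch below uses r=16 and lora_alpha=32 to mirror the PEFT config in this section and assumes a 4096×4096 projection; a float32 array stands in for the dequantized 4-bit base weight, and B starts at zero so training begins from the base model’s behavior.

```python
import numpy as np

rng = np.random.default_rng(0)
d_in, d_out, r, lora_alpha = 4096, 4096, 16, 32

W_frozen = rng.normal(size=(d_out, d_in)).astype(np.float32)  # stand-in for the 4-bit base weight
A = (0.01 * rng.normal(size=(r, d_in))).astype(np.float32)     # trainable, random init
B = np.zeros((d_out, r), dtype=np.float32)                     # trainable, zero init


def lora_forward(x):
    """Frozen path plus scaled low-rank path; only A and B would receive gradients."""
    return x @ W_frozen.T + (lora_alpha / r) * (x @ A.T) @ B.T


x = rng.normal(size=(2, d_in)).astype(np.float32)
print(np.allclose(lora_forward(x), x @ W_frozen.T))  # True: B = 0, so the adapter is a no-op at init
print("frozen params:   ", W_frozen.size)             # 16,777,216
print("trainable params:", A.size + B.size)           # 131,072
```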

Here’s a simplified setup:

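```python
from peft import LoraConfig, get_peft_model, prepare_model_for_kbit_training

# `model` is the 4-bit GPTQ model loaded earlier (e.g. via AutoModelForCausalLM).
model = prepare_model_for_kbit_training(model)

lora_config = LoraConfig(
    r=16,                                 # adapter rank
    lora_alpha=32,                        # adapter output is scaled by lora_alpha / r
    target_modules=["q_proj", "v_proj"],  # attention projections to adapt
    lora_dropout=0.05,
    bias="none",
    task_type="CAUSAL_LM",
)

model = get_peft_model(model, lora_config)
model.print_trainable_parameters()
```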

Best practices for fine-tuning GPTQ models

Additionally, tools like Chaperone are building on this foundation, making 4-bit GPTQ fine-tuning accessible through simpler workflows. This approach opens up custom LLM development for teams without massive GPU budgets — and that’s worth paying attention to.

Production Deployment Strategies for GPTQ Models

Getting a quantized model running locally is one thing. Deploying it reliably in production is another. Here are proven strategies for running 4-bit GPTQ models in real-world systems.

Serving frameworks

Several frameworks support GPTQ models natively. Each has a different personality:

Deployment checklist

Before pushing a GPTQ 4-bit model to production, verify these items:

1. Run evaluation benchmarks on your specific use case, not just general perplexity — this is non-negotiable

2. Test edge cases — quantized models sometimes behave differently on unusual inputs

3. Monitor output quality with automated checks for the first week

4. Set up fallback logic to a larger model for critical requests

5. Profile memory usage under peak load, not just average load

6. Version your quantized models separately from the base models
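Item 4 can be as simple as a routing wrapper. In this sketch, `quantized_generate`, `fallback_generate`, and `passes_checks` are hypothetical hooks you’d wire to your own serving stack and automated quality checks; returning which model answered makes the fallback rate easy to monitor.

```python
from typing import Callable, Tuple


def generate_with_fallback(
    prompt: str,
    critical: bool,
    quantized_generate: Callable[[str], str],
    fallback_generate: Callable[[str], str],
    passes_checks: Callable[[str], bool],
) -> Tuple[str, str]:
    """Critical requests go straight to the larger model; everything else tries the
    4-bit model first and falls back if its output fails the automated checks.

    Returns (output, which_model) so the routing decision can be logged.
    """
    if critical:
        return fallback_generate(prompt), "fallback"
    output = quantized_generate(prompt)
    if passes_checks(output):
        return output, "quantized"
    return fallback_generate(prompt), "fallback"


# Toy usage with stand-in callables.
print(generate_with_fallback("hi", False, lambda p: "ok:" + p, lambda p: "big:" + p, bool))
```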

Common pitfalls

Conclusion

4-bit GPTQ quantization has fundamentally changed what’s possible with open-source LLMs. Models that once required enterprise-grade hardware now run on consumer GPUs. Inference costs drop by 50–75%, and quality stays surprisingly close to full-precision models: close enough for most real-world applications.

Here are your actionable next steps:

1. Start with pre-quantized models from Hugging Face. Don’t quantize from scratch unless you need custom calibration.

2. Benchmark on your specific task. General perplexity numbers don’t always predict domain-specific performance.

3. Use vLLM or TGI for production serving. They handle the complexity of GPTQ inference efficiently.

4. Explore QLoRA fine-tuning if you need to customize a quantized model for your use case.

5. Monitor and iterate. Track output quality metrics continuously after deployment — don’t just ship and forget.

The gap between 4-bit GPTQ and full-precision inference keeps shrinking, while the cost savings keep growing. If you’re building production AI systems with open-source models, mastering GPTQ quantization isn’t optional. It’s essential.

FAQ

What is GPTQ quantization and how does it differ from other quantization methods?

GPTQ quantization is a post-training weight compression technique designed for large language models. It quantizes weights layer by layer using second-order error correction. Unlike simpler methods like round-to-nearest quantization, GPTQ compensates for errors introduced during compression. Consequently, it achieves much better quality at the same bit width. Compared to bitsandbytes quantization, GPTQ pre-computes the quantized weights — which means faster loading and more predictable inference performance. That predictability matters more than people give it credit for.

How much memory does GPTQ 4-bit quantization actually save?

A 4-bit GPTQ model uses approximately 75% less memory than its FP16 counterpart. Specifically, a 7B-parameter model drops from ~14 GB to ~4 GB of VRAM. A 70B model goes from ~140 GB to ~35 GB. However, actual savings vary slightly based on group_size settings and model architecture. Additionally, you’ll need some overhead for activations and the KV cache during inference — importantly, that overhead can be significant under heavy load, so don’t cut your VRAM budget too close.
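To see how large that overhead gets: the KV cache stores one key and one value vector per layer, per KV head, per token, per sequence. The sketch below assumes a Llama-2-7B-style shape (32 layers, 32 KV heads of dimension 128) with an FP16 cache; `kv_cache_gb` is a hypothetical helper. At 4,096 tokens and a batch of 8, the cache alone is several times larger than the ~4 GB of 4-bit weights.

```python
def kv_cache_gb(n_layers, n_kv_heads, head_dim, seq_len, batch_size, bytes_per_value=2):
    """KV-cache size in GB (1e9 bytes): keys and values (factor 2) for every layer, head, and token."""
    return 2 * n_layers * n_kv_heads * head_dim * seq_len * batch_size * bytes_per_value / 1e9


# Llama-2-7B-style shape: 32 layers, 32 KV heads of size 128, FP16 cache.
print(kv_cache_gb(32, 32, 128, seq_len=4096, batch_size=1))  # ~2.1 GB
print(kv_cache_gb(32, 32, 128, seq_len=4096, batch_size=8))  # ~17.2 GB
```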

Does GPTQ 4-bit quantization hurt output quality?

Yes, but less than you’d expect. Perplexity typically increases by 0.3–1.0 points depending on model size. Larger models lose less quality proportionally. For most practical applications — chatbots, summarization, content generation — users rarely notice the difference. Nevertheless, tasks requiring precise numerical reasoning or complex code generation may show more noticeable degradation. Always benchmark on your specific use case before committing. I’ve seen teams assume general benchmarks apply to their domain and get burned by it.

Can I fine-tune a GPTQ quantized model?

Absolutely. QLoRA enables fine-tuning of 4-bit quantized models by adding small trainable adapter layers. The base model stays frozen at 4-bit precision while adapters train at higher precision. This approach lets you fine-tune a 65B model on a single 48 GB GPU — which still feels like a magic trick to me. Tools like the Hugging Face PEFT library make implementation straightforward. Furthermore, the fine-tuned adapters are tiny — typically 10–100 MB — making them easy to store and swap between deployments.
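The adapter-size figure is easy to reproduce: each adapted d_in→d_out projection adds r·(d_in + d_out) parameters. This sketch assumes the r=16, q_proj/v_proj setup from the fine-tuning section applied to a Llama-2-7B-shaped model (32 layers, 4096→4096 projections) with FP16 storage; `lora_adapter_size` is a hypothetical helper. Storing the adapter in FP32 would double the size.

```python
def lora_adapter_size(n_layers, modules_per_layer, d_in, d_out, r, bytes_per_param=2):
    """Return (parameter count, size in MB) for LoRA adapters.

    Each adapted projection adds A (r x d_in) and B (d_out x r).
    """
    params = n_layers * modules_per_layer * r * (d_in + d_out)
    return params, params * bytes_per_param / 1e6


params, size_mb = lora_adapter_size(32, 2, 4096, 4096, r=16)
print(params, round(size_mb, 1))  # 8388608 16.8
```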

What hardware do I need to run GPTQ 4-bit models?

For a 7B model, any GPU with 6+ GB VRAM works; that includes the RTX 3060 and above. For 13B models, you’ll want 10+ GB, meaning an RTX 3080 or better. For 70B models, you’ll need 40+ GB, meaning an A100 40GB or A6000. Alternatively, you can split larger models across multiple smaller GPUs using device mapping. CPU inference is possible but significantly slower, which is painful for anything interactive. Importantly, most GPTQ kernels target NVIDIA GPUs with CUDA; some libraries offer AMD ROCm support, but it is less mature, so AMD users should check their toolchain or look at alternative formats.

How do I choose between GPTQ, GGUF, and AWQ quantization formats?

Each format serves different needs. GPTQ excels at GPU inference and offers excellent quality-to-compression ratios; it’s the most battle-tested option for production. GGUF (used by llama.cpp) is ideal for CPU inference and hybrid CPU/GPU setups. AWQ (Activation-aware Weight Quantization) is newer and shows promising speed improvements on certain hardware, though its ecosystem is still maturing. For production GPU deployment, GPTQ remains the most reliable choice. For local desktop use with limited VRAM, GGUF provides more flexibility. Choose based on your deployment hardware and serving framework, not hype.