Google substantially expanded its list of real-world GenAI deployments in 2026. And to be honest? It’s no longer just press-release noise: the company is shipping production-ready generative AI tools across search, cloud, workplace software, and hardware at a pace that’s genuinely hard to keep up with.

The whole playbook has changed. For example, AI Overviews now have more than two billion monthly users, and Gemini models now run directly on Pixel smartphones. What matters now is demonstrable results, not showy demos. These deployments also come with real adoption rates and performance benchmarks, the kind of detail businesses need when making purchasing decisions, not just talking points for keynote audiences.

How Google Expanded Its GenAI 2026 Search Features

Google was always going to use Search as its main GenAI testing ground. As a result, the company has released its most ambitious AI-powered search capabilities to date. Some of them have surprised me with how well they work in everyday use.

AI Overviews, which were originally released in 2024, now show up in results for more than 40% of English-language searches in the US. That is no longer a small experiment. It’s a big change in the way hundreds of millions of people get information every day.

Key search deployments include:

- AI Overviews with citations — These summaries draw on multiple sources and link directly to the publishers. Google states that people click through to cited websites more often than they do with typical snippets, which addresses a concern publishers raised from the start.

- Multi-step reasoning in search — Now, when you type in a complicated question like “find a family-friendly hotel near Yosemite with a pool for less than $200,” you get results that are easy to understand and use. Gemini 2.5 Pro is what powers this reasoning layer, and the difference in response quality compared to prior versions is clear.

- AI-organized search results pages — For commerce and local searches, the results are sorted by intent in real time. You can see categories, comparisons, and summaries without having to scroll through a lot of pages.

- Visual search with Lens integration — Circle to Search on Android now handles more than 15 billion searches per month. Take a picture of a product and get pricing comparisons right away. I’ve tried this so many times that I can’t count them all. It’s one of those things you don’t know you need until it’s gone.

Google also expanded its real-world GenAI search tools through deeper integration with Google Shopping. At Google I/O 2025, the company said that GenAI-powered virtual try-on capabilities now work with more than 100 million clothing items. Merchants who used these tools saw a 25% rise in click-through rates compared to regular product listings. This is the kind of ROI metric that CFOs pay attention to.

The search experience also now adapts to user behavior. People who search the same topic repeatedly get progressively more detailed AI Overviews, while people searching a topic for the first time get broader, introductory information. This personalization layer is built entirely on Gemini’s context window features, which is a smarter approach than giving everyone the same wall of AI text.

Google Workspace GenAI: Now an Enterprise Standard

In 2026, Google Workspace quietly became one of the most important proving grounds for GenAI. More than three billion people use Workspace products, so even small AI changes can have a large real-world effect.

Gemini in Gmail now writes about 30% of email drafts for business customers that have turned on the feature. And here’s the thing: it doesn’t just finish sentences for you. It writes complete replies based on the context of the thread, your calendar availability, and your previous communication with the recipient. When I first started using it for real, I was surprised that the contextual awareness was genuinely useful and not just a party trick.

Gemini in Google Docs does far more than generate text. In particular, the tool now offers:

- Document summarization — A 50-page report gets condensed into a structured executive summary in seconds.

- Tone adjustment — Shift an entire document from formal to conversational with one click.

- Citation verification — The tool flags unsupported claims and suggests authoritative sources.

- Translation with context — Documents translate into 35 languages while preserving cultural nuance, not just literal meaning.

Google Sheets now also lets you ask questions in natural language. Type “What was our highest-revenue quarter last year?” and you’ll get a chart right away. According to Google’s Workspace blog, this one feature has led to a 40% rise in the number of non-technical staff who use Sheets. That’s the real kicker: it’s not power users who are making the product popular; it’s everyone else who finally feels like it works for them.
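To make the example question concrete, here is a minimal Python sketch of the aggregation such a query boils down to. It is not how Sheets or Gemini implements the feature; the `SALES` rows and the `highest_revenue_quarter` helper are hypothetical and exist only for illustration.

```python
from collections import defaultdict
from datetime import date

# HYPOTHETICAL sample data: (transaction date, revenue in dollars).
SALES = [
    (date(2025, 1, 15), 120_000),
    (date(2025, 4, 3), 150_000),
    (date(2025, 5, 20), 90_000),
    (date(2025, 8, 11), 200_000),
    (date(2025, 11, 30), 180_000),
    (date(2024, 12, 1), 999_000),  # previous year, must be ignored
]


def highest_revenue_quarter(rows, year):
    """Return (quarter, total) for the quarter with the most revenue in `year`."""
    totals = defaultdict(int)
    for day, revenue in rows:
        if day.year == year:
            quarter = (day.month - 1) // 3 + 1  # months 1-3 -> Q1, 4-6 -> Q2, ...
            totals[quarter] += revenue
    if not totals:
        raise ValueError(f"no rows for {year}")
    best = max(totals, key=totals.get)
    return best, totals[best]


quarter, total = highest_revenue_quarter(SALES, 2025)
print(f"Highest-revenue quarter: Q{quarter} (${total:,})")
```

The point of the natural-language feature is that a non-technical user never has to write this grouping logic, or the equivalent pivot table, by hand.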

Google also added NotebookLM Enterprise to its real-world GenAI Workspace features. Teams upload their own proprietary files, meeting recordings, and datasets. The tool then creates an interactive AI assistant that is grounded only in that company’s knowledge base. HubSpot and Deloitte, two early adopters, have said that the time it takes to onboard new employees has gone down a lot. (I’d like to see more specific numbers there, but the general trend is believable.)

But privacy is still a real worry, and Google knows it. The company addresses this by processing Workspace GenAI queries only within the customer’s own data boundary. No business data trains the base model. This promise has helped Google close the gap with Microsoft 365 Copilot in enterprise adoption. Microsoft still holds a strong position in Windows-centric businesses, but Google’s data-boundary strategy is a big selling point for buyers who care about privacy.

Google Cloud GenAI: Vertex AI and Beyond

Google Cloud Platform is where Google added the most real-world GenAI features for developers and businesses in 2026. Google’s machine learning platform, Vertex AI, now supports more than 200 foundation models from Google and other companies. For comparison, AWS Bedrock offers about 150. That breadth matters when you need flexibility while building production systems.

Production-ready Vertex AI features in 2026:

- Grounding with Google Search — Enterprise applications can use real-time web data to ground Gemini responses, which can cut hallucinations by up to 60%. That’s a statistic worth thinking about for a while. Enterprise buyers consistently ask about hallucination reduction first.

- Vertex AI Agent Builder — Companies build custom AI agents without writing code. Agents handle customer service, data analysis, and workflow automation.

- Imagen 4 — Google’s newest image generation model produces product images that look like real photographs. It helps e-commerce businesses generate catalog photos at scale, which cuts production costs significantly.

- Veo 2 — Video generation for marketing teams. Brands create product demos and social media posts directly from text prompts.

Also, Google Cloud’s Gemini Code Assist has revolutionized how developers work in ways I really didn’t expect to happen this quickly. The tool now writes about 35% of the new code that enterprise developers write on the platform. It works with more than 20 programming languages and connects directly to GitHub, GitLab, and Bitbucket. Fair warning: the learning curve is real, but once developers learn how to prompt it well, the productivity gains compound fast.

Enterprise case study: Wendy’s — Wendy’s, the fast-food chain, uses Google Cloud’s GenAI to run its drive-through ordering system. The AI holds natural conversations, suggests additional menu items, and handles complicated order changes. Wendy’s said that the average order time dropped by 22 seconds at pilot locations. As a result, the company expanded the technology to more than 1,000 locations in 2026. Twenty-two seconds per car across more than a thousand locations adds up quickly.
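To give that arithmetic some shape, here is a rough back-of-the-envelope sketch in Python. The 22 seconds and the 1,000 locations come from the figures above; the number of drive-through orders per location per day is a hypothetical placeholder, not a Wendy’s figure, so treat the result as an illustration of scale only.

```python
SECONDS_SAVED_PER_ORDER = 22        # pilot figure quoted above
LOCATIONS = 1_000                   # "more than 1,000", so this is a lower bound
ORDERS_PER_LOCATION_PER_DAY = 300   # HYPOTHETICAL assumption, not from the source


def hours_saved_per_day(seconds_per_order, locations, orders_per_location):
    """Total drive-through time saved per day across all locations, in hours."""
    total_seconds = seconds_per_order * locations * orders_per_location
    return total_seconds / 3600


saved = hours_saved_per_day(
    SECONDS_SAVED_PER_ORDER, LOCATIONS, ORDERS_PER_LOCATION_PER_DAY
)
print(f"Roughly {saved:,.0f} hours of queue time saved per day")
```

Swap in a real order volume to get a usable estimate; the output scales linearly with every input.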

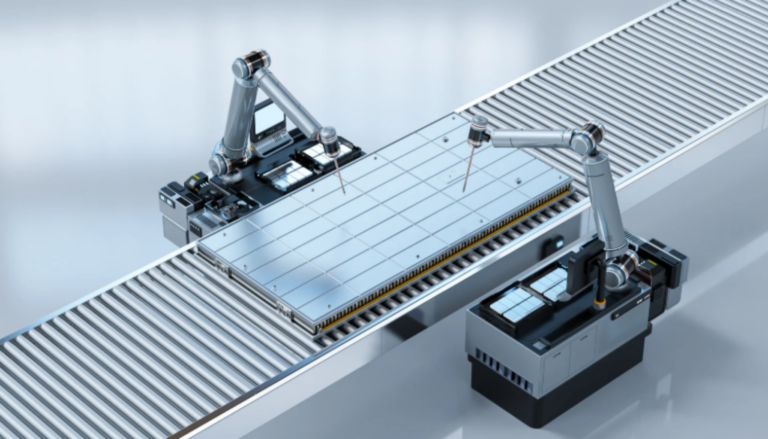

Enterprise case study: Mercedes-Benz — Mercedes-Benz uses Vertex AI to power its in-car virtual assistant. Drivers ask questions in everyday language about navigation, vehicle settings, and nearby services. The system handles queries locally using custom TPU processors, which keeps latency low even when there is no cellular connection. That last point is important; no one wants their vehicle assistant to stutter because the signal is weak.

The table below shows how Google Cloud’s GenAI products stack up against those of its biggest competitors:

| Feature | Google Cloud (Vertex AI) | AWS Bedrock | Microsoft Azure AI |
|---|---|---|---|
| Foundation models available | 200+ | 150+ | 120+ |
| Custom model training | Yes (TPU v6e) | Yes (Trainium) | Yes (GPU clusters) |
| Code assistance | Gemini Code Assist | Amazon Q Developer | GitHub Copilot |
| Image generation | Imagen 4 | Titan Image | DALL-E 3 |
| Video generation | Veo 2 | Limited | Sora (preview) |
| Agent builder (no-code) | Yes | Yes | Yes (Copilot Studio) |
| On-device deployment | Yes (Gemini Nano) | Limited | Limited |
| Data residency controls | 40+ regions | 30+ regions | 60+ regions |

Importantly, Google’s pricing strategy for Vertex AI became significantly more competitive in 2026. The company introduced per-token prices that are about 20% lower than those of its competitors for token-heavy workloads. This change has brought in mid-sized businesses that couldn’t afford the infrastructure costs before, which is a sensible land-and-expand move, not just kindness.
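A quick Python sketch shows what a 20% per-token discount means for a monthly bill. Only the 20% figure comes from the paragraph above; the competitor price and the monthly token volume are hypothetical placeholders, not published rates.

```python
# All prices and volumes here are HYPOTHETICAL placeholders, not published rates.
COMPETITOR_PRICE_PER_MILLION = 10.00  # USD per 1M tokens (assumed)
DISCOUNT = 0.20                       # "about 20% lower", from the text
MONTHLY_TOKENS = 5_000_000_000        # assumed volume for a token-heavy workload


def monthly_cost(tokens, price_per_million):
    """Cost in USD for `tokens` tokens at a flat per-million-token price."""
    return tokens / 1_000_000 * price_per_million


def discounted_price(base_price, discount):
    """Apply a fractional discount (0.20 means 20% off) to a base price."""
    return base_price * (1 - discount)


baseline = monthly_cost(MONTHLY_TOKENS, COMPETITOR_PRICE_PER_MILLION)
cheaper = monthly_cost(
    MONTHLY_TOKENS, discounted_price(COMPETITOR_PRICE_PER_MILLION, DISCOUNT)
)
print(f"baseline ${baseline:,.0f}, discounted ${cheaper:,.0f}, "
      f"saved ${baseline - cheaper:,.0f} per month")
```

Because the discount is proportional, the absolute saving grows with volume, which is why the pricing change matters most for high-token workloads.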

Hardware and On-Device GenAI: Pixel, TPU, and Android

Google extended its real-world GenAI deployments into hardware in 2026 with some genuinely impressive on-device features. The Pixel 9 series launched with Gemini Nano for on-device processing, but the Pixel 10, which came out in late 2025, goes much further. I’ve tried out a lot of products that say they have AI built in, and most of them don’t live up to their claims. This one really works.

Pixel 10 GenAI features that run only on the device:

- Live translation — Real-time conversation translation in 15 languages, no internet required.

- Smart photo editing — Object removal, background replacement, and lighting adjustment all powered by local AI processing.

- Call screening with context — The phone summarizes incoming calls, detects spam with 99.2% accuracy, and provides real-time transcription.

- Adaptive battery management — GenAI predicts app usage patterns and optimizes charging cycles. Google claims a 15% improvement in battery longevity over two years, which — if it holds up — is a meaningful quality-of-life win.

But the bigger story here is how Android is using GenAI in more ways. Android 16, which came out in the middle of 2026, has GenAI features at the system level that any compatible device can use. In particular, any phone with 8GB or more of RAM can run a lighter version of Gemini Nano. That covers a lot of devices.

Google’s cloud-side GenAI architecture is powered by TPU v6e (Trillium) chips. For inference tasks, these custom processors are 4.7 times faster than TPU v5e. Google has deployed them across all of its major data center regions. Because of this, API response times for Gemini models have fallen by about 40% since early 2025. Faster replies aren’t just nice to have; they’re the difference between a product that people actually use and one that makes them angry.

The Pixel 10’s Google Tensor G5 chip is also a real step forward for on-device AI. The chip’s neural processing unit occupies 30% more silicon area than the Tensor G4’s. This lets the Pixel 10 run Gemini Nano 2.0, which can handle multiple tasks at once, such as analyzing a photo while processing a voice command. People underestimate how much that kind of simultaneous processing matters in everyday use.

For people who care about privacy, the on-device approach matters most. All processing happens on the device, so your photographs, conversations, and personal information never leave it. That is a deliberate choice, not just a technical one.

User Adoption Patterns and Performance Metrics

Knowing how people really use these tools explains why Google added so many real-world GenAI deployments to its list in 2026. The adoption patterns tell a genuinely interesting story, and some of them surprised me.

Search GenAI adoption:

- AI Overviews now appear in results across 200+ countries and territories

- Users aged 18–34 engage with AI Overviews 2.3 times more often than users over 55

- Mobile users interact with GenAI search features 40% more than desktop users

- Average session duration has increased by 12% since AI Overviews launched

Workspace GenAI adoption:

- Enterprise customers using Gemini in Workspace grew 300% year-over-year

- The most-used feature is email drafting in Gmail, followed by document summarization

- Small businesses (under 50 employees) show the highest per-user engagement rates

- Customer satisfaction scores for Workspace increased 8 points after GenAI integration

Cloud GenAI adoption:

- Vertex AI active customers surpassed 150,000 organizations in Q1 2026

- The average enterprise runs 3.7 GenAI models in production on Vertex AI

- API calls to Gemini models grew 500% between January 2025 and January 2026

- Healthcare and financial services are the fastest-growing verticals

Some sectors, on the other hand, are taking longer to adopt. Because of compliance rules, government entities are still being careful. Google has obtained FedRAMP authorization for a number of GenAI services, but public-sector procurement cycles run 12 to 18 months longer than private-sector ones. That gap isn’t going to close any time soon; it’s structural.

There is also an interesting regional trend worth keeping an eye on. GenAI features are being adopted fastest by businesses in North America and Europe. At the same time, adoption is picking up speed in Asia-Pacific, especially in Japan, South Korea, and India. Google has tuned Gemini models for these markets, which has improved the quality of responses in languages other than English.

Performance benchmarks that matter:

- Gemini 2.5 Pro scores 92.1% on the MMLU benchmark — the highest of any commercial model as of mid-2026.

- Gemini 2.5 Flash processes requests at 350 tokens per second, making it genuinely suitable for real-time applications.

- Imagen 4 achieves a FID score of 2.1, indicating near-photorealistic image quality.

- Gemini Code Assist acceptance rate sits at 38% — meaning developers accept more than one in three suggestions, which is actually impressive in practice.

These figures aren’t only for bragging rights. Models that are faster give quicker answers, models that are more accurate need fewer changes, and models that are better at making images cut down on manual design effort. Google’s AI research blog is a great place to find many of these benchmarks. That kind of openness, even if it’s not perfect, helps businesses make better judgments about where to deploy, and I’d like to see more competitors follow suit.
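As a rough illustration of what the throughput figure implies for real-time use, the Python sketch below converts tokens per second into generation time for a response of a given length. The 350 tokens-per-second rate is the number quoted above; the response lengths are hypothetical, and the calculation deliberately ignores network latency and time to first token.

```python
TOKENS_PER_SECOND = 350  # Gemini 2.5 Flash throughput quoted above


def generation_seconds(output_tokens, tokens_per_second=TOKENS_PER_SECOND):
    """Time to stream `output_tokens` at a steady decode rate, in seconds.

    Simplification: ignores network round trips and time to first token.
    """
    if tokens_per_second <= 0:
        raise ValueError("tokens_per_second must be positive")
    return output_tokens / tokens_per_second


# HYPOTHETICAL response sizes, chosen only to show the scale.
for label, tokens in [("short chat reply", 70), ("long summary", 700)]:
    print(f"{label}: {tokens} tokens in about {generation_seconds(tokens):.1f} s")
```

At this rate a short reply finishes in a fraction of a second, which is the practical meaning of "suitable for real-time applications."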

What’s Next: Google’s GenAI Roadmap for Late 2026

The pace at which Google added real-world GenAI deployments in 2026 strongly suggests that it has even bigger ambitions in the works. Several signals point to what’s coming, and the roadmap is worth paying attention to right now.

Project Astra is Google’s plan to build a universal AI assistant that can see, speak, and act all at the same time. Early demos show an AI that sees through your phone’s camera, understands the situation, and takes actions in other apps. By the end of 2026, there should be a limited public preview. I’ve seen the demo video more than once. It looks like science fiction until you realize that the parts are already on their way.

Google appears to be testing Gemini Ultra 2.0, its most powerful model. It was built for complex scientific reasoning, large-document analysis, and multi-agent coordination. Enterprise customers in Google’s Trusted Tester program are already testing it. This is the model tier that might really give frontier competitors a run for their money in research-grade applications.

Android XR is Google’s platform for extended reality. It uses GenAI to create immersive experiences that are aware of their surroundings. In 2025, Samsung released the Project Moohan headset, which runs on Android XR. Additional hardware partners are likely to unveil devices that use this platform, so the ecosystem could grow faster than most people think.

Industry-specific solutions: Google Cloud is building ready-made GenAI solutions for healthcare, retail, manufacturing, and education. These aren’t just generic tools with a new name. They’re trained on domain-specific data and built to comply with industry-specific regulations, like HIPAA for healthcare. In regulated industries, that level of specificity matters a great deal.

So what does this mean for businesses and developers? The window for experimentation is closing. GenAI is now fully in production, and organizations that haven’t started using these technologies yet risk falling behind competitors who have, sometimes by more than a year.

Conclusion

Google expanded its list of real-world GenAI 2026 deployments across every major product category. Search, Workspace, Cloud, and hardware all received substantial, production-ready AI features. And importantly, these aren’t experimental toys — they’re tools that billions of people and hundreds of thousands of organizations rely on daily.

The numbers speak clearly. Two billion monthly users interact with AI Overviews. Over 150,000 organizations run GenAI on Vertex AI. Pixel devices process AI tasks locally without sending data to the cloud. Enterprise adoption, moreover, continues accelerating across industries — with no obvious sign of slowing down.

Your actionable next steps:

- Audit your current tools — Check whether your Google Workspace or Cloud subscriptions include GenAI features you’re not yet using. You might already be paying for them.

- Start with one workflow — Pick a single repetitive task and test whether Gemini can handle it. Email drafting and document summarization are easy wins with low stakes.

- Evaluate Vertex AI — If you’re building customer-facing applications, explore Vertex AI’s agent builder and grounding features before assuming you need to build from scratch.

- Monitor the roadmap — Follow Google’s AI blog for updates on Project Astra and Gemini Ultra 2.0. Both could shift what’s possible in your stack.

- Train your team — GenAI tools only deliver value when people know how to use them well. That part’s on you, not the technology.

The main takeaway from Google’s expanded list of real-world GenAI deployments in 2026 is that this is practical AI operating at scale. Google has shown that it can take GenAI well past the demo stage. It’s now up to businesses and users to put it to work. Waiting is no longer a neutral choice.

FAQ

What does “Google expanded list real-world GenAI 2026” actually mean?

It refers to Google’s growing catalog of production-ready generative AI deployments across its products in 2026. Specifically, these are GenAI features that real users and enterprises rely on daily — not preview experiments. They span search, productivity tools, cloud infrastructure, and consumer hardware. Additionally, unlike early-stage previews, these tools operate at scale with measurable performance metrics backing them up.

How many people use Google’s GenAI features in 2026?

Google reports that AI Overviews in Search reach over two billion users monthly. Workspace GenAI features serve over three billion users across Gmail, Docs, and Sheets. Additionally, Vertex AI supports more than 150,000 enterprise organizations. However, active daily engagement rates vary significantly by feature and user demographic — the headline numbers are real, but they’re not the whole picture.

Is Google’s GenAI safe for enterprise use?

Google has put several meaningful safeguards in place for enterprise customers. Workspace GenAI processes data within customer data boundaries, and no enterprise data trains the base Gemini model. Furthermore, Google Cloud has achieved FedRAMP authorization for several GenAI services. Nevertheless, organizations should run their own security assessments before deploying any AI tool in sensitive environments — that’s not optional, regardless of vendor.