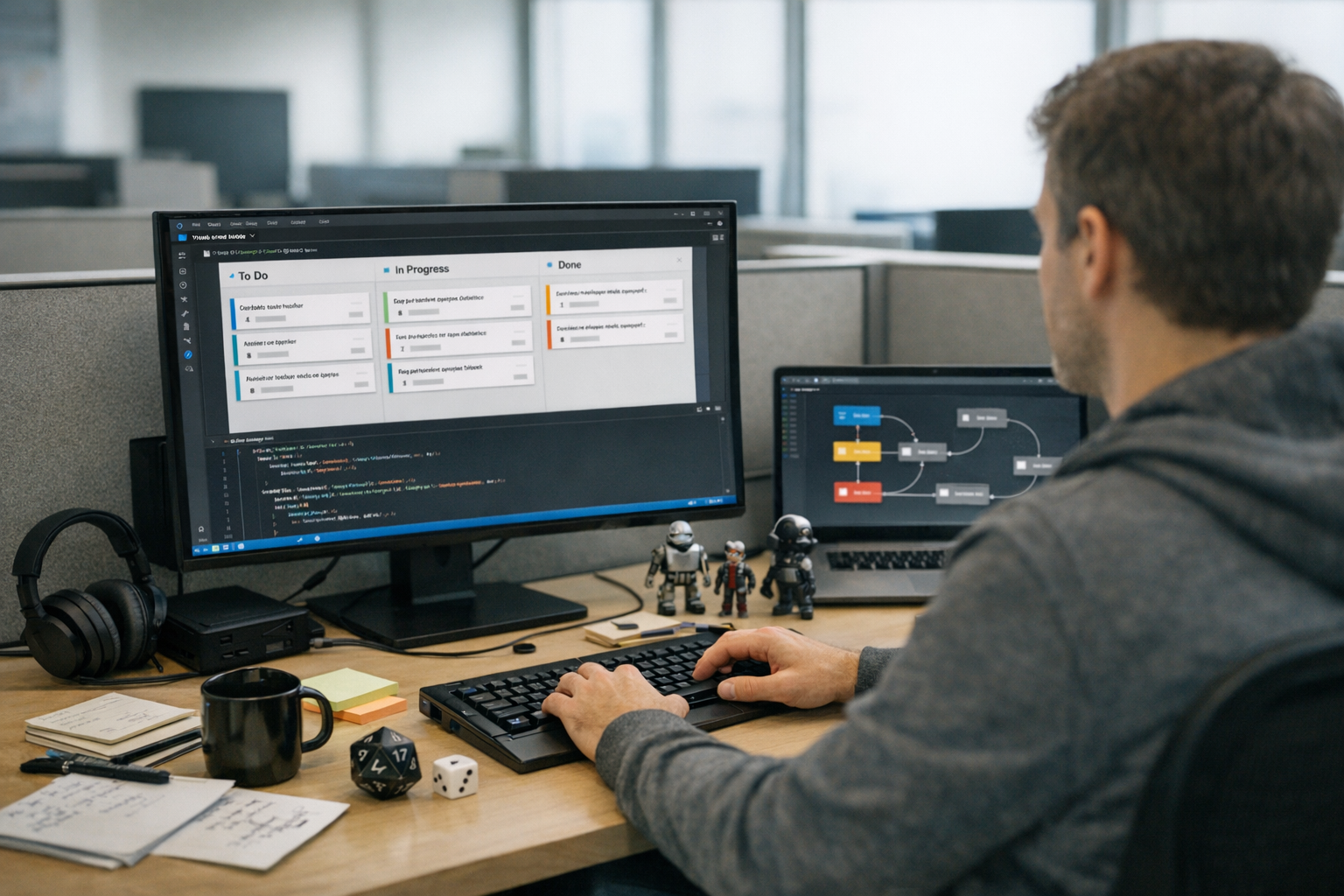

AgentKanban, a VS Code extension for task management and AI workflow automation, solves a problem every AI developer knows well. You’re juggling multiple agents, prompts, and execution chains, with no visual way to track what’s actually happening. AgentKanban fixes that by embedding a Kanban-style task board directly inside Visual Studio Code.

If you’re building agentic AI systems, you already know the pain. Agents spawn subtasks, hit errors, retry, and branch in ways you didn’t anticipate. Traditional project management tools weren’t designed for any of this. Consequently, most developers end up drowning in terminal logs or scribbling scattered notes. AgentKanban gives you a structured, visual approach to managing these complex workflows — without ever leaving your editor.

Here’s the thing: this extension bridges the gap between AI agent orchestration and practical task tracking. It’s purpose-built for developers who need real-time visibility into what their agents are doing, what’s queued, and what’s broken.

How AgentKanban Brings AI Workflow Automation Into VS Code

AgentKanban is a Visual Studio Code extension that creates an interactive task board inside your editor. Specifically, it’s designed for tracking tasks generated by Large Language Model (LLM) agents during autonomous workflows.

A lot of tools claim to solve this problem. Most of them just bolt a generic board onto your environment and call it done. AgentKanban actually thinks about how agents behave.

Here’s what makes it different from a generic Kanban tool:

- Agent-aware columns — Tasks move through stages like “Queued,” “Agent Processing,” “Awaiting Review,” and “Completed”

- LLM context linking — Each task card stores prompt context, model responses, and token usage

- Automatic task creation — Agents can add tasks to the board programmatically via API hooks

- VS Code native — No browser tabs, no external apps, no context switching

Furthermore, the extension supports multiple board configurations. You can run one board per agent or consolidate everything into a single view — and that flexibility matters when you’re orchestrating multi-agent systems. For example, a developer running a research pipeline might keep a dedicated board for a web-scraping agent and a separate board for a summarization agent, then switch between them with a single keyboard shortcut. Alternatively, a team building a customer-support bot could consolidate every agent — intent classifier, retrieval agent, response generator — onto one board and use color-coded labels to tell them apart at a glance.

Why does this matter for AI workflow automation? Because agentic AI isn’t like traditional software. Tasks emerge dynamically — an agent might decide at runtime to break a problem into five subtasks you never anticipated. Without a visual tracking system, you’re flying blind. AgentKanban captures that dynamic behavior and makes it manageable.

The tool follows patterns outlined in research on agent design patterns, particularly the “plan and execute” pattern. The board becomes your live execution plan, updated in real time as agents work through their tasks.

Setting Up AgentKanban: Installation and Configuration

Getting started with AgentKanban takes about five minutes. Honestly, that surprised me; I expected more friction.

Here’s the step-by-step process:

- Open VS Code and go to the Extensions marketplace

- Search for “AgentKanban” and click Install

- Open the Command Palette (Ctrl+Shift+P or Cmd+Shift+P)

- Type “AgentKanban: Initialize Board” and press Enter

- Choose a board template — select “AI Agent Workflow” for the best starting point

- A .agentkanban configuration file appears in your project root

One quick tip: if you’re working inside a monorepo with multiple agent projects, run the initialization command from the subfolder you want the board scoped to. AgentKanban will anchor the config file there, keeping boards isolated per project rather than polluting the root.

Configuring your first board is straightforward. The .agentkanban config file uses JSON format:

```json
{
  "boardName": "Multi-Agent Research Pipeline",
  "columns": [
    { "id": "backlog", "title": "Backlog", "color": "#6B7280" },
    { "id": "agent-queue", "title": "Agent Queue", "color": "#3B82F6" },
    { "id": "processing", "title": "Agent Processing", "color": "#F59E0B" },
    { "id": "review", "title": "Human Review", "color": "#8B5CF6" },
    { "id": "done", "title": "Completed", "color": "#10B981" }
  ],
  "agentIntegration": {
    "enabled": true,
    "apiEndpoint": "http://localhost:8080/kanban",
    "autoCreateTasks": true
  }
}
```

Additionally, you can customize columns to match your specific workflow. Some developers add an “Error” column for failed agent tasks. Others add a “Blocked” column for tasks waiting on external data. Both are worth considering from day one. A practical tradeoff to keep in mind: more columns give you finer-grained status tracking, but they also spread your attention thinner. I’ve found that five to seven columns hit the sweet spot for most agent pipelines — beyond that, you spend more time categorizing tasks than acting on them.
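Before committing a custom column layout, a quick sanity check can catch duplicate column ids or a board that has drifted past the five-to-seven range. The sketch below is a hypothetical helper, not something AgentKanban ships; it assumes only the `columns` structure shown in the config above, and `check_columns` and `max_columns` are names made up for illustration.

```python
import json

def check_columns(config_text, max_columns=7):
    """Return a list of warnings for a board config given as JSON text."""
    config = json.loads(config_text)
    columns = config.get("columns", [])
    warnings = []

    # Column ids should be unique, otherwise a task's column is ambiguous.
    ids = [str(col.get("id", "")) for col in columns]
    duplicates = sorted({i for i in ids if ids.count(i) > 1})
    if duplicates:
        warnings.append(f"duplicate column ids: {', '.join(duplicates)}")

    # More columns than the suggested ceiling is worth a second look.
    if len(columns) > max_columns:
        warnings.append(
            f"{len(columns)} columns exceeds the suggested maximum of {max_columns}"
        )

    return warnings
```

Running it against the file contents (for example, the text read from your .agentkanban file) returns an empty list when the layout looks fine.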

Connecting to your LLM agent requires a small integration layer. AgentKanban exposes a local REST API that your agent code can call. Here’s a Python example:

```python
import requests

def create_agent_task(title, description, agent_id):
    """Create a task card in the "Agent Queue" column via the local API."""
    payload = {
        "title": title,
        "description": description,
        "column": "agent-queue",
        "metadata": {
            "agent_id": agent_id,
            "model": "gpt-4",
            "max_tokens": 4096
        }
    }
    response = requests.post(
        "http://localhost:8080/kanban/tasks", json=payload, timeout=10
    )
    response.raise_for_status()  # surface HTTP errors instead of parsing an error body
    return response.json()

create_agent_task(
    "Summarize research paper #42",
    "Extract key findings and methodology from the uploaded PDF",
    agent_id="research-agent-01",
)
```

Notably, this integration means your agents become self-organizing. They create tasks, update statuses, and flag issues — all visible on your board in real time. You’re not manually updating anything.
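Creating a task is only half of that loop; the agent also has to move the card as work progresses. Here is a minimal sketch of a status update using only the standard library. The `/tasks/<task_id>` path and the `note` field are assumptions for illustration, since the exact update route is not documented here; the `status` field mirrors the PATCH body used in the LangChain example later in this article.

```python
import json
import urllib.request

BASE_URL = "http://localhost:8080/kanban"  # apiEndpoint from the board config

def build_status_request(task_id, status, note=None):
    """Build (but do not send) a PATCH request that moves a task to a new status.

    The /tasks/<task_id> path and the "note" metadata field are hypothetical.
    """
    body = {"status": status}
    if note is not None:
        body["metadata"] = {"note": note}
    return urllib.request.Request(
        url=f"{BASE_URL}/tasks/{task_id}",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="PATCH",
    )

def send(request, timeout=5):
    """Send a prepared request and return the decoded JSON response."""
    with urllib.request.urlopen(request, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))
```

Splitting "build" from "send" keeps the request easy to inspect or log before anything touches the network, for example `send(build_status_request("task-17", "processing"))`.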

Workflow Patterns for Managing AI Agents With AgentKanban

The real power of AgentKanban shows up when you apply proven workflow patterns. Developers can waste weeks building custom dashboards for exactly this kind of visibility, only to find that a handful of patterns cover most of what they need out of the box.

Pattern 1: Plan-and-Execute Pipeline

This is the most common pattern, and the one you should start with.

A planning agent breaks a complex goal into discrete tasks, and each task lands on the board. Execution agents then pick up tasks from the queue and process them in order:

- Planning agent creates 5–10 tasks in the “Backlog” column

- A dispatcher moves tasks to “Agent Queue” based on priority

- Execution agents pull tasks and update status to “Processing”

- Completed tasks move to “Human Review” for validation

- Approved tasks shift to “Completed”

To make this concrete: imagine you ask a planning agent to “Analyze Q3 sales data and produce a board-ready report.” The planner might create tasks like “Pull raw CSV from warehouse,” “Clean missing values,” “Compute regional breakdowns,” “Generate visualizations,” and “Draft executive summary.” Each task appears on the board the moment the planner decides it’s needed, so you can see the full execution plan before a single token of work begins — and intervene early if the plan looks off.
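The planner's side of this pattern can be sketched as a small function that turns an ordered list of steps into task payloads for the "Backlog" column. The payload shape follows the REST example earlier in this article; the `order` metadata field and the function name are assumptions added for illustration.

```python
def plan_to_payloads(goal, steps, agent_id):
    """Turn an ordered list of step titles into task payloads for the Backlog column."""
    return [
        {
            "title": step,
            "description": f"Step {index} of {len(steps)} for goal: {goal}",
            "column": "backlog",
            # "order" is a hypothetical field so a dispatcher can preserve sequence.
            "metadata": {"agent_id": agent_id, "order": index},
        }
        for index, step in enumerate(steps, start=1)
    ]

payloads = plan_to_payloads(
    "Analyze Q3 sales data and produce a board-ready report",
    ["Pull raw CSV from warehouse", "Clean missing values", "Draft executive summary"],
    agent_id="planner-01",
)
```

Each payload can then be posted to the board one at a time, which is what makes the full plan visible before any execution agent starts working.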

Pattern 2: Multi-Agent Collaboration

When multiple agents work on related tasks, AgentKanban provides real coordination. Much like a Kanban board in manufacturing, it limits work-in-progress and prevents bottlenecks before they snowball.

You can set WIP (Work-in-Progress) limits per column:

```json
{
  "columns": [
    {
      "id": "processing",
      "title": "Agent Processing",
      "wipLimit": 3
    }
  ]
}
```

That wipLimit: 3 setting alone has saved me from accidentally hammering API rate limits more times than I’d like to admit. Here’s a scenario that illustrates why: I once had four agents simultaneously calling the OpenAI API with large context windows. Within two minutes I burned through my rate-limit budget for the hour, and every subsequent request returned a 429 error. A WIP limit of 3 would have held the fourth agent in the queue until a slot opened, spreading the load evenly and keeping the pipeline moving instead of stalling it entirely.
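To see what a WIP limit does mechanically, here is a small in-memory model of a limited column. It illustrates the queueing behavior described above and is not AgentKanban's actual implementation; the class and method names are invented for this sketch.

```python
from collections import deque

class WipLimitedColumn:
    """In-memory model of a column that admits at most `wip_limit` tasks."""

    def __init__(self, wip_limit):
        self.wip_limit = wip_limit
        self.active = set()      # tasks currently being processed
        self.waiting = deque()   # tasks held back until a slot opens

    def submit(self, task_id):
        """Start the task if a slot is free, otherwise hold it in the queue."""
        if len(self.active) < self.wip_limit:
            self.active.add(task_id)
            return True
        self.waiting.append(task_id)
        return False

    def complete(self, task_id):
        """Free a slot and promote the longest-waiting task, if any."""
        self.active.discard(task_id)
        if self.waiting and len(self.active) < self.wip_limit:
            promoted = self.waiting.popleft()
            self.active.add(promoted)
            return promoted
        return None
```

With a limit of 3, a fourth agent's `submit` call returns `False` and its task waits; the next `complete` call promotes it into the freed slot.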

Pattern 3: Human-in-the-Loop Review

Not every agent output should go straight to production. The “Human Review” column creates a natural checkpoint — and moreover, you can set up notifications that alert you when tasks need your attention:

```json
{
  "notifications": {
    "onColumnEntry": {
      "review": {
        "type": "vscode-notification",
        "message": "Agent task ready for review"
      }
    }
  }
}
```

A practical tip: batch your reviews. If you respond to every notification the moment it fires, you’ll context-switch yourself into oblivion. Instead, let three or four tasks accumulate in the review column and then evaluate them together. You’ll catch inconsistencies between related outputs that you’d miss reviewing them one at a time.

Pattern 4: Error Recovery and Retry

Agents fail. APIs time out. Models hallucinate. It happens.

AgentKanban handles this well — when an agent hits an error, it moves the task to an “Error” column with diagnostic metadata attached. You inspect the failure, adjust parameters, and requeue the task. No restarting entire workflows from scratch. This pattern is especially valuable for long-running pipelines where a single retry beats blowing everything up and starting over.

Consider a real-world scenario: a data-extraction agent is processing 200 PDFs overnight. PDF #137 triggers a parsing error because the file is image-only. Without AgentKanban, you’d wake up to a crashed pipeline and have to figure out which files succeeded and which didn’t. With the Error column, PDF #137 sits there with the traceback attached, the other 199 tasks show “Completed,” and you can fix the one failure in isolation — swap in an OCR pre-processing step, drag the task back to the queue, and move on.
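On the agent side, this pattern amounts to wrapping each unit of work so that a failure becomes a board update rather than a crash. The sketch below assumes an "error" column id and a `report` callable that you supply (for instance, a thin wrapper around a PATCH request); both are illustrative names, not part of a documented AgentKanban API.

```python
import traceback

def run_with_error_capture(task_id, work, report):
    """Run `work()`; on failure, report an Error-column update instead of crashing."""
    try:
        result = work()
    except Exception as exc:
        # Attach the diagnostics a human needs to fix this one task in isolation.
        report(task_id, {
            "status": "error",
            "metadata": {
                "error": f"{type(exc).__name__}: {exc}",
                "traceback": traceback.format_exc(),
            },
        })
        return None
    report(task_id, {"status": "done", "result": result})
    return result
```

Because the exception is caught per task, the remaining PDFs in a batch keep processing while the failed one waits on the board with its traceback.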

AgentKanban Compared to Other Task Management Approaches

Developers building AI systems have several options for task management and AI workflow automation. However, not every tool is equally suited to agentic workloads. Here’s how AgentKanban stacks up. Fair warning: some of these comparisons may surprise you.

| Feature | AgentKanban (VS Code) | Jira/Linear | LangGraph Studio | Custom Dashboard | Plain Text Files |
|---|---|---|---|---|---|
| VS Code integration | Native | Browser only | Separate app | Browser only | Native |
| Agent API hooks | Built-in | Requires plugins | Built-in | Custom build | None |
| Real-time updates | Yes | Yes | Yes | Depends | No |
| LLM metadata tracking | Yes | No | Partial | Custom build | Manual |
| WIP limits | Yes | Yes | No | Custom build | No |
| Setup time | 5 minutes | 30+ minutes | 15 minutes | Hours/days | 1 minute |
| Cost | Free | Paid plans | Free tier | Development cost | Free |
| Multi-agent support | Yes | Limited | Yes | Custom build | No |

Nevertheless, each approach has its place — and it’s worth being honest about that.

LangGraph excels at defining agent execution graphs and is genuinely strong for the orchestration layer. However, it doesn’t give you a persistent, visual task board. AgentKanban works alongside tools like LangGraph rather than replacing them. They solve different sides of the same problem.

Meanwhile, traditional project management tools like Jira work well for human team coordination. They fall apart at machine-speed task creation — an agent generating 50 subtasks per minute would overwhelm most project management interfaces before you even noticed.

Plain text files and TODO comments are surprisingly popular among solo developers. They’re fast, simple, and have zero overhead. But they don’t scale past about two agents and one session.

One tradeoff worth calling out explicitly: AgentKanban’s local-first design means it starts fast and requires no cloud account, but it also means you lose the built-in collaboration features that tools like Linear or Jira offer. If your team has five engineers all watching the same agent fleet, you’ll need to layer on a shared state store or wait for the planned cloud-sync feature. For a solo developer or a pair working on the same machine, the local model is actually an advantage — zero latency, zero auth headaches, zero subscription fees.

The sweet spot for AgentKanban is developers who want visual clarity without leaving their coding environment. It’s especially effective for teams adopting agentic AI design patterns that involve planning, tool use, and reflection loops.

Advanced Integration: Connecting AgentKanban to Your AI Stack

Taking AgentKanban further requires deeper integration with your existing tools. Here’s how to connect it to the frameworks most developers are actually using.

Integration with LangChain agents:

```python
import requests
from langchain.agents import AgentExecutor
from langchain_core.callbacks import BaseCallbackHandler

class KanbanCallback(BaseCallbackHandler):
    def on_agent_action(self, action, **kwargs):
        # Update task status on the board
        requests.patch(
            "http://localhost:8080/kanban/tasks/current",
            json={"status": "processing", "metadata": {
                "tool": action.tool, "input": action.tool_input
            }},
            timeout=10,
        )

    def on_agent_finish(self, finish, **kwargs):
        # Hand the finished task over to the review column
        requests.patch(
            "http://localhost:8080/kanban/tasks/current",
            json={"status": "review", "result": finish.return_values},
            timeout=10,
        )
```

A practical note on the callback approach: attach the KanbanCallback to your AgentExecutor by passing it in the callbacks list. If you’re running multiple agents concurrently, include the agent_id in each PATCH request so the board routes updates to the correct task card. Without that identifier, concurrent updates can collide and you’ll see status flicker on the wrong cards — a subtle bug that’s annoying to diagnose.
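One way to avoid those collisions is to keep an explicit mapping from each agent to the task it currently owns, and build every update from that mapping. This is a sketch under assumptions: the `/kanban/tasks/<task_id>` path is hypothetical, and `TaskRouter` is an illustrative helper rather than anything AgentKanban or LangChain provides.

```python
class TaskRouter:
    """Remember which task each agent is working on so updates never share a target."""

    def __init__(self):
        self._current = {}  # agent_id -> task_id

    def assign(self, agent_id, task_id):
        """Record that `agent_id` has picked up `task_id`."""
        self._current[agent_id] = task_id

    def patch_for(self, agent_id, status):
        """Return the (path, body) of the update for this agent's current task.

        Raises KeyError if the agent has no assigned task, which is safer than
        silently updating someone else's card.
        """
        task_id = self._current[agent_id]
        path = f"/kanban/tasks/{task_id}"  # hypothetical per-task route
        body = {"status": status, "metadata": {"agent_id": agent_id}}
        return path, body
```

A callback can hold a router plus its own `agent_id` and call `patch_for` inside `on_agent_action` and `on_agent_finish`, instead of targeting a shared "current" task.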

Integration with CrewAI:

CrewAI uses a multi-agent crew model. Each crew member can map to a column or label on your AgentKanban board — therefore, you get clear visibility into which agent is handling which task without building any custom tooling.

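```python
from crewai import Agent, Task, Crew

# Tag tasks with AgentKanban metadata.
# `researcher` is an Agent defined elsewhere in your crew setup.
# Assumes your CrewAI version accepts a `metadata` field on Task; check its docs.
research_task = Task(
    description="Research competitor pricing models",
    agent=researcher,
    metadata={"kanban_column": "agent-queue", "priority": "high"}
)
```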

Webhook support enables two-way communication. Importantly, AgentKanban can notify external services when tasks change status — which means you can trigger downstream actions like deploying code or pinging a Slack channel based on board activity. I’ve built several pipelines around this and it’s become a standard part of my setup.

Here’s a quick example of a webhook configuration that posts to Slack when a task enters the “Completed” column:

```json
{
  "webhook_url": "https://hooks.slack.com/services/T00/B00/xxxx",
  "trigger": {
    "column": "done",
    "event": "task_entered"
  },
  "payload_template": {
    "text": "✅ Task '{{task.title}}' completed by {{task.metadata.agent_id}} - tokens used: {{task.metadata.token_usage}}"
  }
}
```

That kind of notification closes the feedback loop: your agent finishes work, the board updates, and your team knows about it in Slack within seconds — no one has to poll the board manually.
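If you want to preview what a template like that will produce before wiring it to Slack, the substitution is easy to approximate. The sketch below shows one plausible way `{{dotted.path}}` placeholders could be resolved against a task; AgentKanban's real templating rules are not documented here, so treat the behavior as an assumption.

```python
import re

# Matches {{ dotted.path }} with optional whitespace inside the braces.
PLACEHOLDER = re.compile(r"\{\{\s*([\w.]+)\s*\}\}")

def render(template, context):
    """Replace {{dotted.path}} placeholders with values looked up in nested dicts."""
    def lookup(match):
        value = context
        for key in match.group(1).split("."):
            value = value[key]  # KeyError here means the template names a missing field
        return str(value)
    return PLACEHOLDER.sub(lookup, template)

message = render(
    "Task '{{task.title}}' completed by {{task.metadata.agent_id}}",
    {"task": {"title": "Summarize", "metadata": {"agent_id": "research-agent-01"}}},
)
```

Letting a missing field raise `KeyError` is a deliberate choice in this sketch: a typo in the template fails loudly during a dry run instead of posting a half-filled message.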

A few things that matter for production-grade setups:

- Use environment variables for API endpoints, not hardcoded URLs

- Set task timeouts to catch stalled agents before they quietly eat resources

- Archive completed boards weekly to keep performance up (boards slow down past ~2,000 tasks)

- Tag tasks with model names and versions for reproducibility

- Export board state to JSON for audit trails and debugging
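The first two items on that list can be sketched in a few lines. The environment variable name `AGENTKANBAN_API_URL` and the 300-second default are assumptions chosen for illustration; use whatever names and thresholds fit your deployment.

```python
import os

DEFAULT_URL = "http://localhost:8080/kanban"

def kanban_url(env=None):
    """Read the board endpoint from AGENTKANBAN_API_URL (hypothetical name),
    falling back to the local default. Trailing slashes are trimmed."""
    env = os.environ if env is None else env
    return env.get("AGENTKANBAN_API_URL", DEFAULT_URL).rstrip("/")

def is_stalled(started_at, now, timeout_seconds=300):
    """True when a task has been processing for longer than the timeout.

    Both timestamps are seconds, e.g. values from time.time().
    """
    return (now - started_at) > timeout_seconds
```

A periodic job can call `is_stalled` for every card in the processing column and move the offenders to an error or blocked column for inspection.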

Additionally, AgentKanban supports board templates. Save a working configuration and share it across projects — for teams trying to standardize their AI workflow automation practices, this is genuinely worth using.

Conclusion

AgentKanban represents a practical shift in how developers manage agentic AI systems. It brings visual task tracking directly into your coding environment, with agent-native features that generic tools simply don’t offer.

The combination of automatic task creation, LLM metadata tracking, and WIP limits makes it uniquely suited for AI workflows. Importantly, it works alongside existing orchestration tools rather than competing with them — so you’re not ripping out your stack, you’re adding visibility to it.

Here are your next steps:

- Install AgentKanban from the VS Code marketplace today

- Configure a basic board using the AI Agent Workflow template

- Connect one agent using the REST API integration

- Set WIP limits to prevent agent overload

- Add a Human Review column for quality control checkpoints

- Iterate on your workflow — adjust columns and patterns as your system grows

Bottom line: whether you’re building a single research agent or a complex multi-agent pipeline, AgentKanban gives you the visibility you need. Stop guessing what your agents are doing. Start seeing it.

FAQ

What exactly is AgentKanban, and how does it differ from regular Kanban tools?

AgentKanban is a VS Code extension built specifically for AI workflow automation. Unlike regular Kanban tools, it includes agent API hooks, LLM metadata tracking, and automatic task creation. Standard tools like Trello or Jira require manual task entry — AgentKanban lets your AI agents create and update tasks programmatically. Consequently, it’s purpose-built for dynamic, agentic workloads where tasks emerge at runtime rather than being planned upfront by a human.

Does AgentKanban work with any LLM framework, or is it limited to specific ones?

AgentKanban uses a framework-agnostic REST API. Therefore, it works with any LLM framework that can make HTTP requests — including LangChain, CrewAI, AutoGen, and custom Python or JavaScript agents. You simply POST task updates to the local API endpoint. The board reflects changes in real time, regardless of your underlying framework. There’s no lock-in, which is notably important as the agentic AI space is still moving fast.

Can I use AgentKanban for team collaboration, or is it only for solo developers?

AgentKanban supports team use through shared configuration files. The .agentkanban config lives in your project repository, so anyone who clones the repo gets the same board setup. However, real-time multi-user sync requires a shared backend server — that’s a real limitation worth knowing upfront. Solo developers get the smoothest experience out of the box. Teams should consider pairing it with a shared state store for concurrent access.

How does AgentKanban handle agent failures and error recovery?

When an agent hits an error, it updates the task status via the API and the task moves to an “Error” column with diagnostic metadata attached. Specifically, this metadata includes error messages, stack traces, and the last successful state. You inspect the failure directly on the board, fix the issue, and drag the task back to the queue. Moreover, this avoids restarting entire workflows from scratch — which, for long-running pipelines, is a genuinely big deal.

Is AgentKanban free, and what are its system requirements?

AgentKanban’s core extension is free and open source. It requires VS Code version 1.80 or later, and the local API server needs Node.js 18+ on your machine. Additionally, it uses minimal system resources — typically under 50MB of RAM — so it won’t compete with your other tools for headroom. There are no cloud dependencies for basic use. Premium features like cloud sync and team dashboards may follow a paid model in future releases, so keep that in mind if you’re planning ahead.