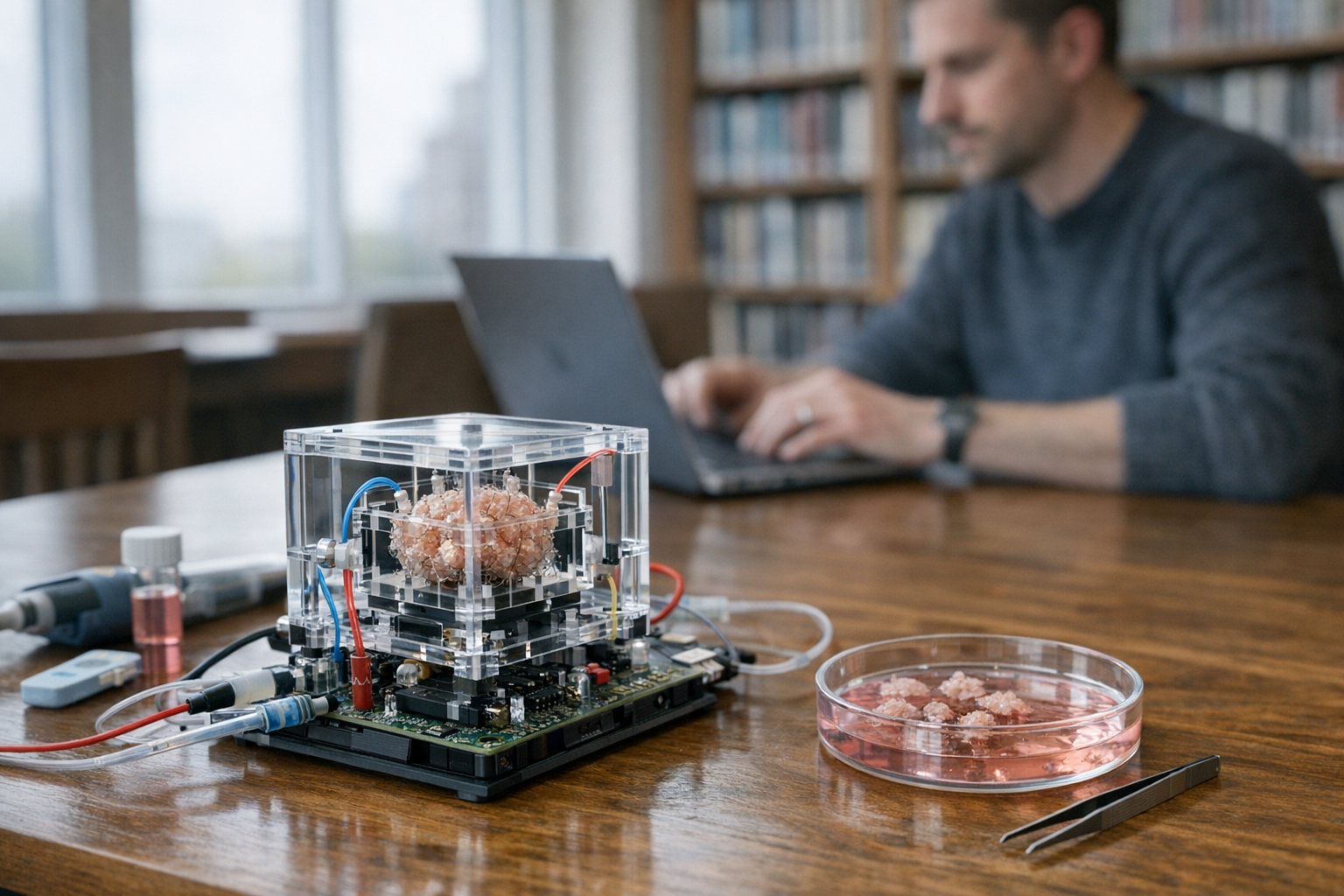

A new 3D device harnesses living brain cells to perform computational tasks once reserved for silicon chips. And no, this isn’t science fiction — researchers have actually built functional hardware that uses cultured neurons as processing units. I’ve been watching this space for years, and this one genuinely surprised me when it first crossed my radar.

This breakthrough sits right at the intersection of neuroscience and computer engineering. Specifically, it challenges the long-held assumption that faster processing requires smaller transistors. Biological neurons offer something silicon fundamentally can’t: massive parallelism, ultra-low power consumption, and adaptive learning baked right in. Consequently, the tech world is paying very close attention to what comes next — and honestly, so am I.

How Living Brain Cell Devices Differ From Silicon

Traditional computers rely on binary logic: transistors flipping between ones and zeros at incredible speed. However, they burn through enormous amounts of energy doing it. A single data center can consume as much electricity as a small city (that number still blows my mind every time). Moreover, transistors are approaching physical size limits, which threatens the steady doubling of transistor density that Moore’s Law describes.

The new 3D device uses living brain cells through a fundamentally different approach. Biological neurons don’t operate in binary — they communicate through electrochemical signals across synapses. Each neuron can form thousands of connections at once. That’s a network complexity no transistor array comes close to matching.

How biological computing works in practice:

- Researchers culture neurons on multi-electrode arrays (MEAs)

- Electrical signals feed input data directly into the neurons

- The neural network processes that information through synaptic connections

- Output signals get recorded and interpreted by software

- The whole system learns and adapts — no explicit programming required
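The steps above can be sketched as a toy loop in Python. This is only an illustration under heavy assumptions: `SimulatedCulture` is a hypothetical stand-in for a real culture and its MEA driver, and its response model (a noisy weighted sum) is invented for the example. It does not model learning, which in real tissue comes from synaptic plasticity.

```python
import random


class SimulatedCulture:
    """Hypothetical stand-in for neurons on an MEA (not a real device API)."""

    def __init__(self, n_inputs, n_outputs, seed=0):
        self.rng = random.Random(seed)
        # Random "synaptic" weights between input and output electrodes.
        self.weights = [
            [self.rng.uniform(0.0, 1.0) for _ in range(n_inputs)]
            for _ in range(n_outputs)
        ]

    def stimulate_and_record(self, pattern):
        """Apply a stimulation pattern and return spike counts per output electrode."""
        counts = []
        for row in self.weights:
            drive = sum(w * x for w, x in zip(row, pattern))
            noise = self.rng.uniform(0.0, 0.5)
            counts.append(int(round(10 * drive + noise)))
        return counts


def decode(counts):
    """Interpret the recording: report the index of the most active output electrode."""
    return max(range(len(counts)), key=lambda i: counts[i])


culture = SimulatedCulture(n_inputs=4, n_outputs=3)
pattern = [1, 0, 1, 0]                          # input data encoded as stimulation
counts = culture.stimulate_and_record(pattern)  # network response, then recording
print("spike counts:", counts, "decoded output:", decode(counts))
```

In a real system, the stimulation and recording calls would go through the electrode hardware, and the decoding step is where most of the software effort goes.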

Furthermore, the three-dimensional structure here matters enormously. Flat 2D cultures severely limit how neurons can connect with each other. However, a 3D scaffold lets them grow in every direction. This mimics the brain’s natural architecture far more closely, producing richer — and more useful — computational behavior.

Energy efficiency is perhaps the most striking advantage, and it’s where the real kicker lives. The human brain runs on roughly 20 watts of power. Meanwhile, training a large language model can draw megawatts. Although biological systems are slower in raw clock speed, they excel at pattern recognition and parallel processing on a tiny power budget.
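To put those power figures in proportion, here is a quick back-of-the-envelope calculation. The 20-watt figure comes from the paragraph above; the 1-megawatt training figure is an assumption picked for illustration, since real training runs vary widely.

```python
BRAIN_POWER_W = 20.0            # from the text: roughly 20 watts
TRAINING_POWER_W = 1_000_000.0  # assumption: 1 megawatt, illustrative only

# How many brains could run on the power of one training cluster?
ratio = TRAINING_POWER_W / BRAIN_POWER_W
print(f"A 1 MW training run draws about {ratio:,.0f} times the power of one brain")

# Energy over one day in kilowatt-hours (power in kW times hours).
HOURS_PER_DAY = 24
brain_kwh = BRAIN_POWER_W / 1000 * HOURS_PER_DAY
training_kwh = TRAINING_POWER_W / 1000 * HOURS_PER_DAY
print(f"brain: {brain_kwh:.2f} kWh/day, training cluster: {training_kwh:,.0f} kWh/day")
```

The comparison is loose, because a brain and a training cluster are not doing the same job, but it shows the scale of the gap.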

| Feature | Silicon Chips | Biological Neural Computing |
|---|---|---|
| Power consumption | High (kilowatts per server) | Ultra-low (microwatts per organoid) |
| Processing style | Sequential/parallel digital | Massively parallel analog |
| Learning method | Software-driven training | Intrinsic synaptic plasticity |
| Scalability limit | Atomic-scale transistors | Cell growth and viability |
| Fault tolerance | Low (single point failures) | High (distributed processing) |
| Speed (clock rate) | Gigahertz range | Millisecond response times |
| Adaptability | Requires reprogramming | Self-organizing |

Notably, Cortical Labs in Melbourne has already shown that cultured neurons can learn to play Pong. Their DishBrain system showed neurons adapting their behavior based on feedback — a landmark proof of concept that I’ve revisited probably a dozen times. It proved that living brain cells could perform goal-directed computation outside a body, which is still kind of wild to say out loud.

The Science Behind Brain Organoids as Computing Substrates

Brain organoids are tiny, lab-grown clusters of brain cells that self-organize into structures resembling actual parts of the human brain. Scientists grow them from stem cells using carefully controlled protocols. Additionally, these organoids develop spontaneous electrical activity within just weeks of formation — no external prompting needed.

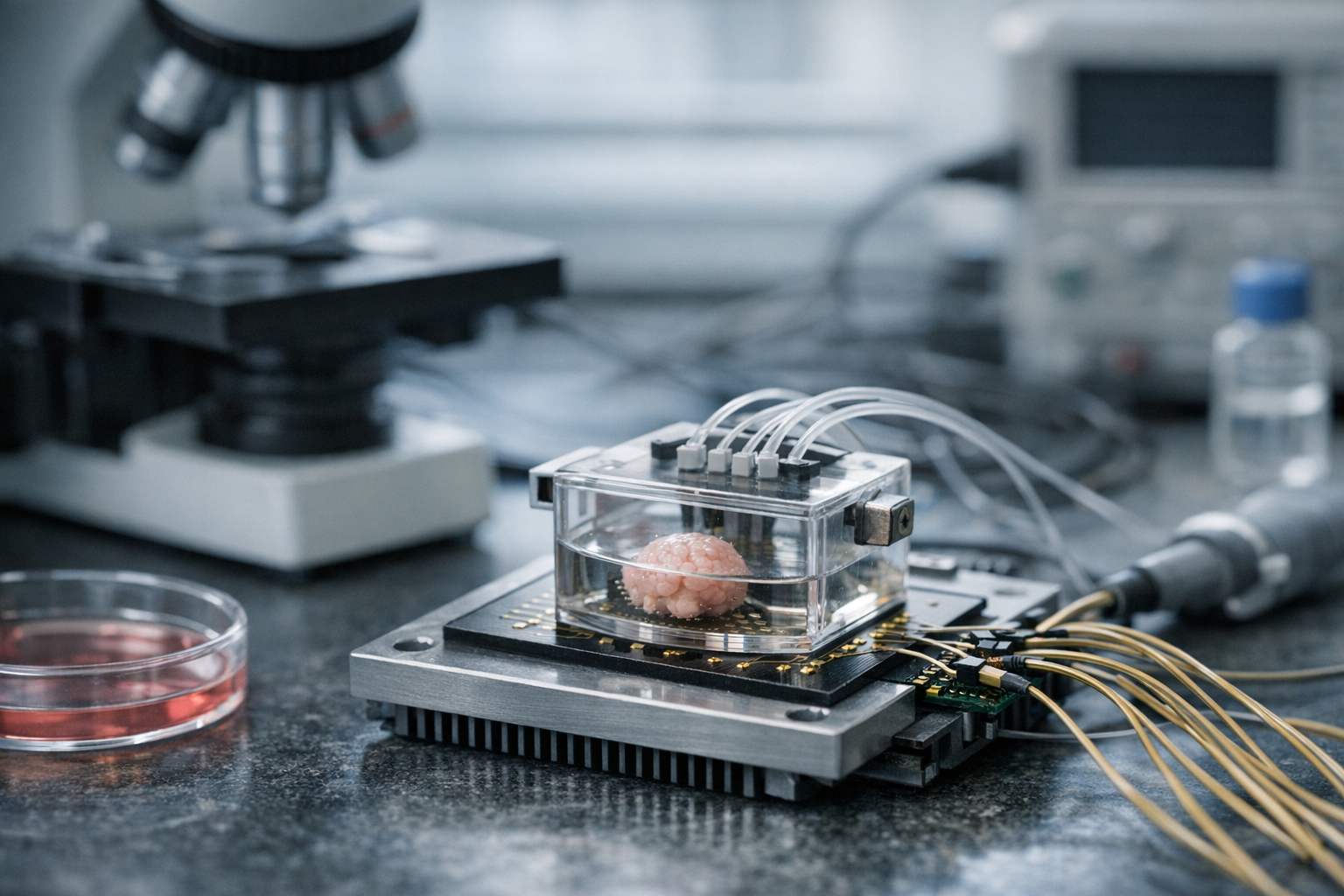

Devices like this typically take one of two approaches. The first spreads dissociated neurons across electrode arrays. The second uses intact organoids as more complex processing units. Both carry distinct advantages, and I’ve seen compelling arguments for each side.

Dissociated neuron approach:

- Easier to control and measure precisely

- Functional networks establish themselves faster

- Better suited for simpler computational tasks

- More reproducible results between experiments

Organoid approach:

- Richer internal connectivity from the start

- More diverse cell types present throughout

- Closer to how natural brain function actually works

- Capable of handling more complex processing tasks

Researchers at Johns Hopkins University coined the term “organoid intelligence” to describe this field — and it stuck. Their work focuses on scaling organoid-based computing into practical systems. Specifically, they’re developing methods to keep organoids alive and functional for months, because longevity is critical for any real-world application. Fair warning: that challenge is harder than it sounds.

The three-dimensional structure of these devices deserves special attention. Traditional cell cultures grow in flat layers, but neurons naturally exist in 3D space. Therefore, researchers developed biocompatible scaffolds — hydrogels, silk proteins, synthetic polymers — that support three-dimensional growth and actually let neurons do their thing.

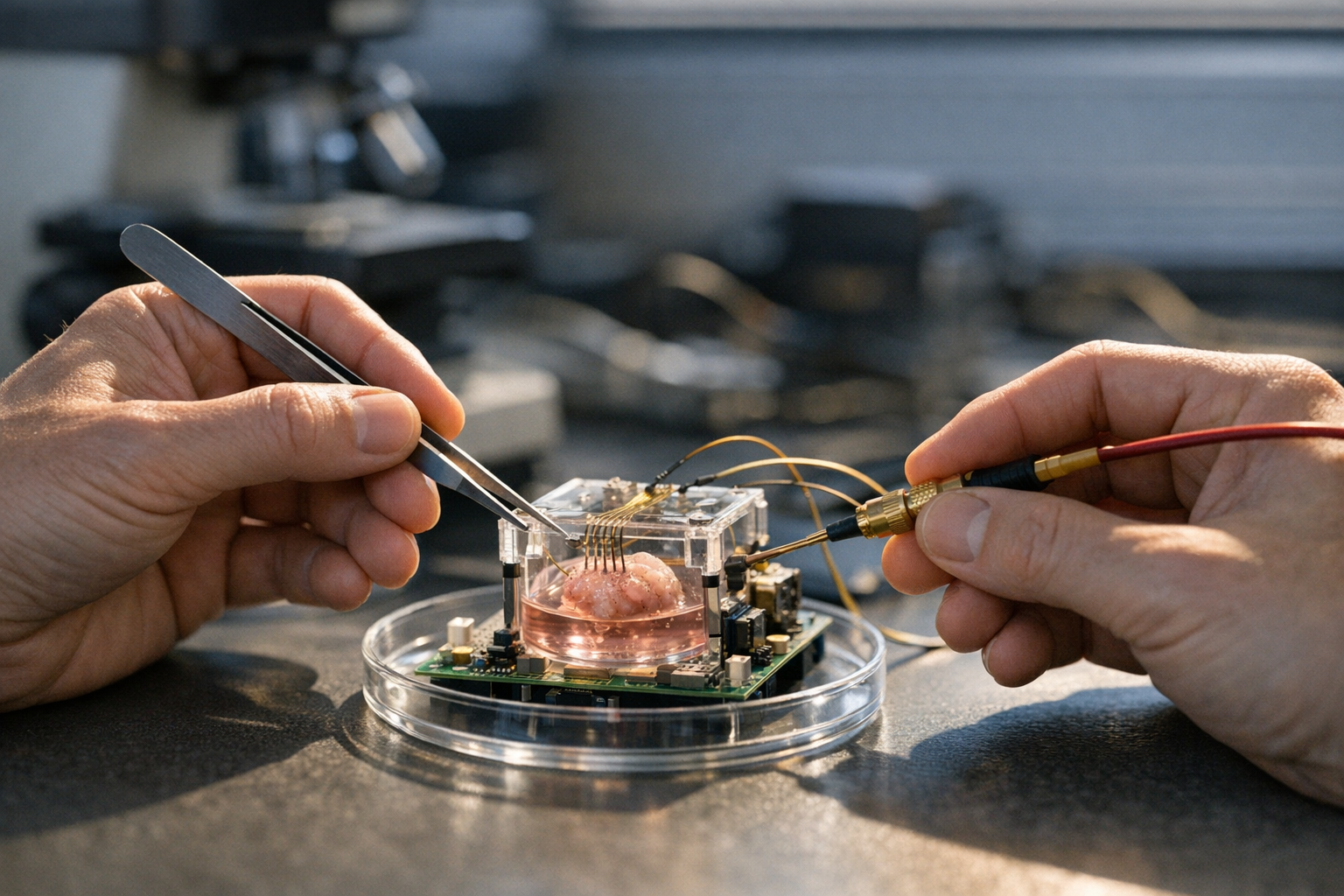

Signal input and output remain significant engineering challenges. Nevertheless, advances in microelectrode technology have been genuinely remarkable. Modern MEAs can record from thousands of neurons at once and deliver precise electrical stimulation to specific regions. This two-way communication is what turns a cluster of cells into a computing device.
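On the recording side, the first software step is usually turning a raw voltage trace into spike times. The sketch below shows simple threshold detection on one simulated electrode. The threshold rule (a multiple of a median-based noise estimate), the negative-going spike shape, and the parameter values `k` and `refractory` are assumptions borrowed from common spike-detection practice, not details from any specific device mentioned here.

```python
import random
import statistics


def detect_spikes(trace, k=5.0, refractory=3):
    """Return sample indices where the trace drops below a negative threshold.

    The threshold is k times a robust noise estimate, median(|x|) / 0.6745.
    """
    sigma = statistics.median(abs(v) for v in trace) / 0.6745
    threshold = -k * sigma
    spikes = []
    last = -refractory  # allows a detection at index 0
    for i, v in enumerate(trace):
        # Skip samples that fall inside the refractory window of the last spike.
        if v < threshold and i - last >= refractory:
            spikes.append(i)
            last = i
    return spikes


# Simulated recording: Gaussian noise plus three injected negative deflections.
rng = random.Random(42)
trace = [rng.gauss(0.0, 1.0) for _ in range(1000)]
for idx in (100, 400, 750):
    trace[idx] -= 12.0

spikes = detect_spikes(trace)
print("detected spike indices:", spikes)
```

A real pipeline would run this per electrode across thousands of channels, typically after band-pass filtering, which is omitted here.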

One particularly promising development involves combining organoids with microfluidic systems. These tiny channels deliver nutrients and continuously remove waste products. Consequently, organoids can survive and function far longer than in static cultures — some labs report active organoids maintained for over a year, which wasn’t even close to possible five years ago.

Real-World Applications: Drug Discovery, Disease Modeling, and Beyond

The practical implications here extend far beyond academic curiosity. A 3D device built on living brain cells isn’t just for raw computation; it’s also a powerful tool for understanding disease and developing treatments. And this is honestly where things get exciting.

Drug discovery represents the most immediate commercial application. Testing drugs on living human neural tissue can produce data that animal models can’t match, because human brain organoids respond to drugs in ways that are closer to how human brains actually do. This removes a lot of guesswork in early-stage development. Similarly, it could substantially reduce the need for animal testing, a win most people can get behind.

Pharmaceutical companies are already investing heavily here. Organoid-based screening can evaluate thousands of compounds rapidly. Moreover, patient-derived organoids make genuinely personalized medicine approaches possible. A doctor could theoretically test drugs on a patient’s own brain cells before ever writing a prescription. That’s a real shift for neurology.

Disease modeling is another major application. Researchers can grow organoids from stem cells of patients with neurological disorders, creating what some call “disease-in-a-dish” models. These have already proven valuable for studying:

- Alzheimer’s disease progression and potential interventions

- Parkinson’s disease mechanisms at the cellular level

- Epilepsy and seizure disorders

- Autism spectrum conditions

- Zika virus effects on brain development

- Traumatic brain injury responses

Furthermore, the computational side opens entirely new possibilities. A biocomputer powered by living brain cells could tackle problems where biological neural networks may eventually outperform digital ones, such as route planning, resource allocation, and pattern matching. These are candidates rather than proven wins, and researchers are actively exploring each one.

The National Institutes of Health has funded multiple research programs exploring organoid intelligence. Their involvement signals that this technology has moved well beyond the speculative phase. Because federal funding follows rigorous peer review, the scientific community now treats this as a serious research direction — not fringe stuff.

Robotics and autonomous systems could benefit enormously too. A biological processor might handle sensory integration more naturally than digital alternatives. Additionally, the adaptive learning capability means the system improves with experience — no retraining, no software update cycle. It just gets better.

Some researchers envision hybrid systems, with silicon chips handling precise calculations alongside biological components managing adaptive processing. In this model, the living-cell device works alongside traditional hardware rather than replacing it. Importantly, this best-of-both-worlds approach could arrive sooner than fully biological computers, and it might honestly be the more practical path.
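As a rough picture of what a hybrid architecture might look like in software, here is a minimal task router. Everything in it is hypothetical: the backend functions, the task categories, and the fallback rule are invented for illustration, and the biological backend is a stub.

```python
from typing import Callable, Dict


def silicon_backend(task: str) -> str:
    return f"silicon handled '{task}'"


def biological_backend(task: str) -> str:
    # Stub: a real system would stimulate and record from living tissue here.
    return f"biological unit handled '{task}'"


# Hypothetical routing table based on the split described in the text.
ROUTES: Dict[str, Callable[[str], str]] = {
    "precise_calculation": silicon_backend,
    "adaptive_processing": biological_backend,
}


def dispatch(kind: str, task: str) -> str:
    # Unknown task kinds fall back to silicon as the conservative default.
    return ROUTES.get(kind, silicon_backend)(task)


print(dispatch("precise_calculation", "invert a matrix"))
print(dispatch("adaptive_processing", "classify a noisy sensor pattern"))
```

The point of the sketch is the division of labor: the calling code does not need to know which kind of hardware ends up doing the work.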

The environmental implications are significant too. Because biological computing handles certain tasks more efficiently, it could meaningfully reduce data center carbon footprints. Even a modest reduction in energy consumption at that scale has massive global impact.

Key Technical Breakthroughs Driving This Field

Several key technical advances have made biological computing viable. Understanding these breakthroughs helps explain why this field is accelerating so fast — and why researchers who seemed overly optimistic three years ago are starting to look prescient.

Electrode technology has improved dramatically. Early microelectrode arrays had dozens of contact points. Current versions feature thousands. Next-generation devices promise millions. This density allows researchers to communicate with neural networks at unprecedented resolution. Consequently, the computing interface becomes genuinely powerful rather than just theoretically interesting.

Stem cell protocols have also matured considerably. Generating consistent, high-quality brain organoids was once brutally difficult — I’ve talked to researchers who spent years troubleshooting protocols that barely worked. Today, standardized methods produce reliable results across different labs. The International Society for Stem Cell Research publishes guidelines that help maintain quality standards worldwide, which matters more than people realize.

Machine learning integration plays a crucial supporting role. Although the biological network does the core processing, software interprets its outputs — algorithms that translate neural activity patterns into usable data. Machine learning additionally helps optimize stimulation patterns for better performance, creating a feedback loop between biological and digital systems.
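To make "software interprets its outputs" concrete, here is about the simplest decoder possible: a nearest-centroid classifier over firing-rate vectors. The firing rates, electrode count, and stimulus labels are made up for the example, and real decoders are usually far more elaborate.

```python
import math


def centroid(vectors):
    """Average a list of equal-length vectors, component by component."""
    n = len(vectors)
    return [sum(v[i] for v in vectors) / n for i in range(len(vectors[0]))]


def fit(labelled):
    """labelled maps each label to a list of firing-rate vectors."""
    return {label: centroid(vs) for label, vs in labelled.items()}


def predict(model, vector):
    """Return the label whose centroid is closest to the observed vector."""
    return min(model, key=lambda label: math.dist(model[label], vector))


# Made-up firing rates (spikes per second) on three electrodes.
training = {
    "stimulus_A": [[12, 3, 5], [11, 4, 6], [13, 2, 5]],
    "stimulus_B": [[4, 10, 9], [5, 11, 8], [3, 12, 10]],
}
model = fit(training)
print(predict(model, [12, 3, 6]))
print(predict(model, [4, 11, 9]))
```

The same structure extends to the feedback loop mentioned above: software could score decoded outputs and adjust the next stimulation pattern accordingly.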

The 3D architecture itself required novel engineering solutions. Researchers needed to solve several problems at once:

1. Nutrient delivery — Cells deep inside a 3D structure need food and oxygen

2. Waste removal — Metabolic byproducts must be cleared continuously

3. Signal access — Electrodes must reach neurons throughout the entire volume

4. Structural support — The scaffold must be biocompatible and genuinely stable

5. Scalability — The system must grow beyond proof-of-concept size

Microfluidic engineering solved the first two — tiny channels woven through the scaffold act like artificial blood vessels. Meanwhile, flexible electrode arrays that conform to 3D shapes addressed signal access. These aren’t simple tweaks. They represent years of work across fields that don’t always talk to each other.

Nevertheless, significant hurdles remain. Biological systems are inherently variable — two organoids grown from the same cell line won’t be identical. That variability complicates reproducibility in ways that drive engineers absolutely crazy. Researchers are developing calibration techniques to account for biological differences, though standardization remains an active and unsolved area of work.
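One simple calibration idea, offered here as an assumption rather than a method attributed to any lab, is to express each organoid’s responses relative to its own baseline activity. The sketch below uses z-scores and made-up firing rates to show how two organoids with very different raw activity levels can end up on a comparable scale.

```python
import statistics


def calibrate(baseline, responses):
    """Express responses as z-scores relative to that organoid's own baseline."""
    mean = statistics.fmean(baseline)
    sd = statistics.stdev(baseline)
    return [(r - mean) / sd for r in responses]


# Made-up firing rates (spikes per second): organoid B is simply "louder" than A.
organoid_a = {"baseline": [4, 5, 6, 5, 5], "responses": [9, 10]}
organoid_b = {"baseline": [40, 50, 60, 50, 50], "responses": [90, 100]}

za = calibrate(**organoid_a)
zb = calibrate(**organoid_b)
print("organoid A:", [round(z, 2) for z in za])
print("organoid B:", [round(z, 2) for z in zb])
```

Scaling differences are the easy case. Differences in how organoids are wired internally are much harder to normalize away, which is part of why standardization remains unsolved.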

Longevity is another ongoing concern. Although organoids can survive for months, they do eventually degrade. A practical biocomputing device using living brain cells needs predictable operational lifetimes — something you can actually build a product around. Some teams are exploring cryopreservation techniques, while others focus on continuous cell replacement strategies. Neither approach is fully solved yet.

Ethical Implications of Computing With Living Brain Cells

No discussion of this technology is complete without addressing ethics — and honestly, this section matters as much as any of the technical ones.

Using living brain cells for computation raises serious questions that society needs to confront before the technology outpaces the conversation. I’ve sat in on a few of these ethics discussions, and the range of perspectives is genuinely fascinating.

The most fundamental question is whether these organoids can experience anything. Current brain organoids are tiny and lack the complexity of a full brain. However, they do produce electrical activity patterns similar to those seen in developing brains. A 2019 study in Cell Stem Cell reported organoid activity patterns resembling those recorded from premature infants, a comparison that tends to make people in the room go quiet.

Key ethical questions include:

- At what point does a neural system deserve moral consideration?

- Who owns the intellectual output of a biological computer?

- Should there be limits on organoid size or complexity?

- How do we handle organoids derived from specific patients’ cells?

- What regulations should govern commercial biocomputing?

- Could biological computers ever develop something resembling consciousness?

Importantly, most ethicists agree that current organoids aren’t conscious: they lack sensory input, bodily context, and sufficient complexity. But because the technology is advancing rapidly, establishing ethical frameworks now is essential. Waiting for a crisis to emerge would be genuinely irresponsible, and history suggests that waiting is exactly what we tend to do.

The consent question is particularly nuanced. Researchers typically grow organoids from donated stem cells whose original donors may not have expected their cells to be used for computing. Although existing consent frameworks cover research use broadly, commercial biocomputing is new territory. Specifically, current regulations simply weren’t designed with this application in mind.

Several institutions have formed dedicated bioethics committees for organoid research, bringing together neuroscientists, philosophers, legal scholars, and patient advocates. Their recommendations will likely shape future regulations. Moreover, international coordination is necessary here — this research spans multiple countries with very different regulatory cultures.

Animal welfare considerations cut both ways. If biological computers can replace animal models in drug testing, that’s a clear ethical win. Conversely, growing human brain tissue for commercial purposes introduces new ethical terrain that requires careful, honest thought — not just reassuring PR statements.

Regulatory frameworks are still catching up. The U.S. Food and Drug Administration hasn’t issued specific guidance on biocomputing devices yet. Similarly, European regulators are monitoring developments without firm rules in place. This gap creates both opportunity and real risk for companies in the space — heads up to anyone building here.

Transparency will be crucial going forward. Companies developing devices that use living brain cells need to communicate openly about their methods. Public trust depends on honest engagement with ethical concerns, not polished messaging. Additionally, independent oversight can help prevent potential abuses before they become headline problems.

Conclusion

The new 3D device uses living brain cells in ways that seemed genuinely impossible just a decade ago. I’ve covered a lot of “next big things” in ten years of tech writing — most of them weren’t. But this one feels different, and the science backs that instinct.

We’ve covered how biological computing differs from traditional chips, explored applications in drug discovery and disease modeling, examined the technical breakthroughs making this viable, and confronted the ethical questions that demand real answers. So, where does that leave you?

What you should do next:

- Follow research from Cortical Labs and Johns Hopkins University’s organoid intelligence program — both are worth bookmarking

- Monitor FDA and NIH announcements about emerging biocomputing regulations

- Think seriously about how biological computing might affect your specific industry within the next decade

- Stay informed about ethical developments as this field evolves faster than most people expect

- Explore whether hybrid computing architectures could solve problems you’re already wrestling with today

The convergence of neuroscience and computer engineering is accelerating. A 3D device that uses living brain cells isn’t a novelty or a curiosity; it’s a preview of computing’s biological future. Whether you’re in tech, healthcare, or policy, this technology will eventually touch your work. It’s worth paying attention to now, while there’s still time to understand it before it arrives.

FAQ

What exactly is a new 3D device that uses living brain cells?

It’s a computing platform that uses cultured human neurons or brain organoids as processing units. Specifically, neurons grow on three-dimensional scaffolds embedded with electrodes that send input signals and read output signals from the neural network. The biological tissue performs computation through natural synaptic processes. Consequently, the device can learn and adapt without traditional programming — which is still kind of remarkable when you say it plainly.

How does biological computing compare to artificial intelligence?

Traditional AI runs on silicon chips using mathematical models loosely inspired by neurons. However, biological computing uses actual living neurons — not a simulation of them. The key differences are energy efficiency and adaptability: living neural networks consume far less power and learn on their own. Nevertheless, silicon-based AI is currently faster for many specific tasks. The two approaches will therefore likely complement each other rather than compete directly, and most researchers expect hybrid systems to emerge first.

Is the new 3D device that uses living brain cells available commercially?

Not yet for general computing purposes. The technology remains primarily in research labs. Companies like Cortical Labs are actively developing commercial platforms, and several startups are pursuing drug discovery applications. Experts estimate limited commercial products could emerge within five to ten years. Meanwhile, academic research continues advancing faster than most people outside the field realize.

Are there ethical concerns with using living brain cells for computing?

Yes, and they’re significant, so don’t let anyone wave them away. The primary concern involves whether neural tissue can experience sensation or suffering. Most ethicists consider current organoids too simple for consciousness. However, as the technology scales, this question becomes considerably more pressing. Furthermore, issues around cell donor consent and commercial ownership urgently need resolution. Multiple institutions are proactively developing ethical frameworks, which is the right instinct.

How long can a biological computing device remain functional?

Current systems operate reliably for weeks to months. Some well-maintained organoids have survived over a year in laboratory conditions. Notably, microfluidic systems that continuously deliver nutrients and remove waste extend operational lifetimes significantly. Longevity remains an active research challenge, with teams exploring cryopreservation and continuous cell replacement strategies to extend device lifespan further. Neither approach is fully cracked yet, but progress is real.

Could a new 3D device that uses living brain cells replace traditional computers?

Not entirely — and anyone telling you otherwise is overselling it. Biological computers excel at specific tasks like pattern recognition and adaptive learning. However, they’re slower than silicon for precise mathematical calculations. Therefore, the most likely future involves hybrid systems that combine biological processors for certain tasks with traditional chips for others. Think of it as adding a genuinely powerful new tool to the computing toolkit rather than tossing out everything that came before.