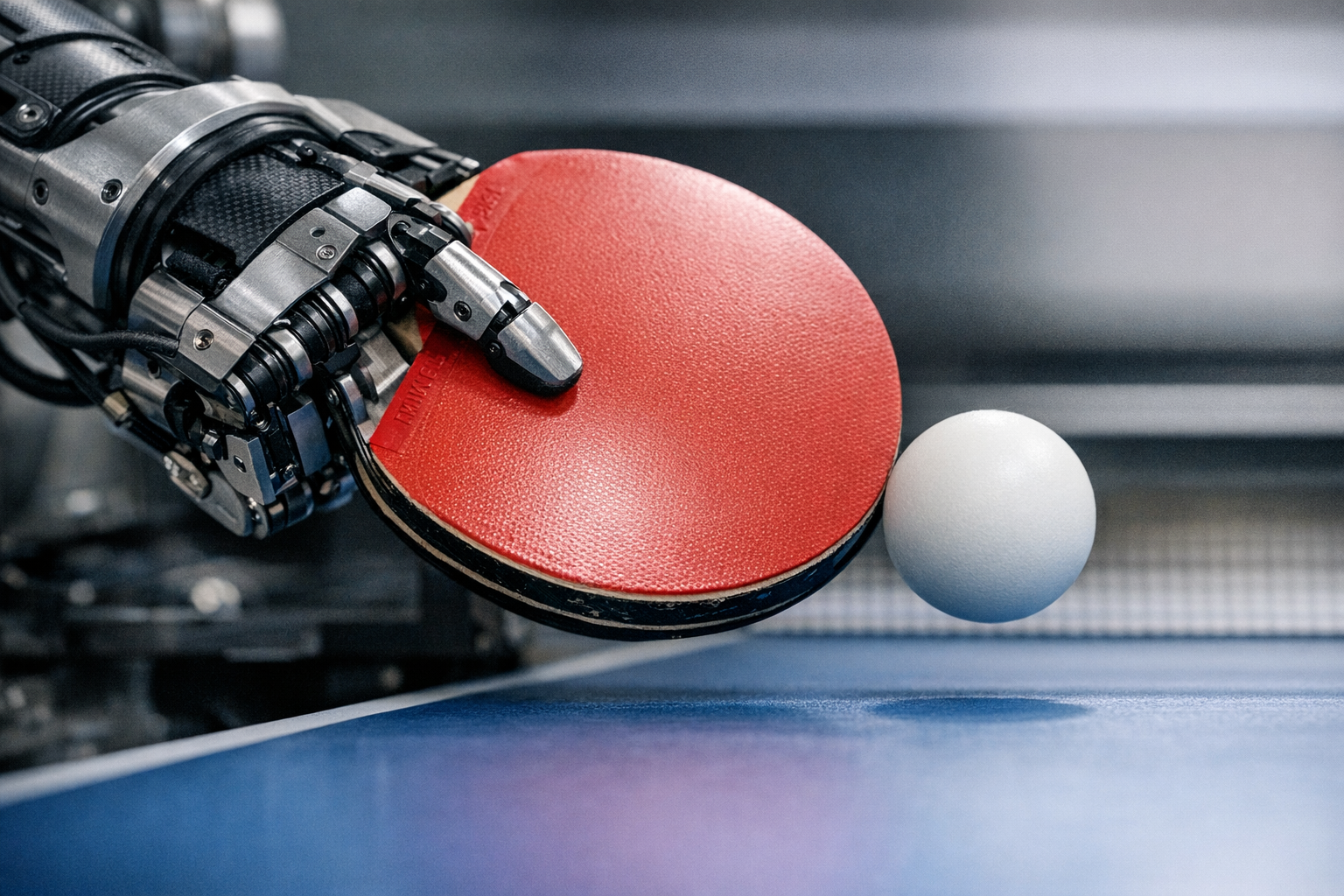

If you haven’t seen this yet, brace yourself — watch Sony’s elite ping-pong robot beat a world-class table tennis player and try not to drop your jaw. It tracks a ball moving at over 9 meters per second, predicts where it’s going, and returns it with unsettling precision. It does all of this in real time, using edge AI inference running locally on the robot itself.

This isn’t a trade-show party trick. Sony’s ping-pong robot is one of the most technically dense case studies in computer vision, robotic arm control, and predictive AI I’ve come across in a decade of covering this space. Consequently, it’s become required viewing for anyone serious about where sports robotics is actually headed.

How Sony’s Robot Uses Computer Vision to Track Every Shot

The whole thing starts with the computer vision pipeline — and honestly, this is where I’m most impressed. Specifically, Sony’s system uses high-speed cameras running at up to 1,000 frames per second to capture the ball’s position, spin, and velocity in real time.

Ball detection is the first real obstacle. A regulation table tennis ball is 40 millimeters across — smaller than you’d think — moves incredibly fast, and can spin at thousands of RPM. Traditional tracking approaches simply can’t keep up.

Sony’s engineers tackled this with a multi-camera stereo vision setup. Two or more cameras triangulate the ball’s exact 3D position. Additionally, the system uses infrared markers and high-contrast imaging to separate the ball from everything behind it. This surprised me when I first looked into it. I expected pure neural-network magic, but the setup is more structured than that.
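To make the triangulation step concrete, here’s a minimal sketch for the simplest case: two rectified cameras, where depth falls out of the horizontal disparity between the ball’s pixel position in each image. The `StereoRig` class, the `triangulate` function, and every number in it are my own illustrative assumptions, not anything Sony has published.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class StereoRig:
    """Hypothetical rectified stereo pair (all values are assumptions)."""
    focal_px: float    # focal length in pixels, assumed equal for both cameras
    baseline_m: float  # distance between the two camera centers, in meters
    cx: float          # principal point x, in pixels
    cy: float          # principal point y, in pixels


def triangulate(rig, left_px, right_px):
    """Return (x, y, z) in meters, expressed in the left camera's frame."""
    (ul, vl), (ur, _vr) = left_px, right_px
    disparity = ul - ur  # horizontal shift of the ball between the two images
    if disparity <= 0:
        raise ValueError("non-positive disparity: cannot recover depth")
    z = rig.focal_px * rig.baseline_m / disparity  # depth from similar triangles
    x = (ul - rig.cx) * z / rig.focal_px
    y = (vl - rig.cy) * z / rig.focal_px
    return x, y, z


rig = StereoRig(focal_px=1200.0, baseline_m=0.5, cx=640.0, cy=360.0)
ball_xyz = triangulate(rig, left_px=(700.0, 360.0), right_px=(460.0, 360.0))
```

With these made-up numbers, a 240-pixel disparity puts the ball 2.5 meters from the left camera. A real rig would also need calibration, lens-distortion correction, and sub-pixel localization of the ball.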

Here’s how the tracking pipeline actually flows:

1. Image capture: High-speed cameras grab frames roughly every millisecond (at up to 1,000 frames per second)

2. Preprocessing: Frames are cropped and filtered to strip out noise

3. Object detection: AI models identify the ball’s position in each frame

4. 3D reconstruction: Stereo vision calculates exact coordinates in three-dimensional space

5. Trajectory prediction: Physics-based models forecast where the ball will land

The result is a system that knows where the ball is going before it gets there. Notably, that prediction completes within 10 to 20 milliseconds, which leaves the robotic arm enough time to plan and execute a full return stroke. That number stopped me cold when I first read it.
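For a feel of what the physics-based prediction step involves, here’s a deliberately simplified sketch. It treats the ball as a point mass under gravity only, ignoring drag and spin (the Magnus effect), both of which matter a lot in real table tennis. The function name `predict_landing` and the example numbers are assumptions of mine.

```python
import math

G = 9.81  # gravitational acceleration, m/s^2


def predict_landing(pos, vel, table_z=0.0):
    """Predict when and where a ball hits the table plane.

    pos, vel: (x, y, z) position in meters and velocity in m/s.
    Simplification: gravity only, no air drag and no spin.
    Returns (time_s, (x, y)) at the moment the ball reaches table_z.
    """
    x0, y0, z0 = pos
    vx, vy, vz = vel
    height = z0 - table_z
    if height < 0:
        raise ValueError("ball is already below the table plane")
    # Solve height + vz*t - 0.5*G*t^2 = 0 for the positive root.
    t = (vz + math.sqrt(vz * vz + 2.0 * G * height)) / G
    return t, (x0 + vx * t, y0 + vy * t)


# Ball 30 cm above the table, moving at 9 m/s along the table and rising slightly.
time_s, landing_xy = predict_landing((0.0, 0.0, 0.30), (9.0, 0.5, 1.0))
```

A production system would integrate drag and spin forces numerically and re-run the prediction every frame as new measurements arrive.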

Sony has published research through its Sony AI division explaining how sensor fusion combines camera data with physics models. That hybrid approach is more robust than leaning on neural networks alone. Nevertheless, the AI components are still doing heavy lifting — especially when spin gets weird or a player throws something genuinely deceptive at the robot.

Real-Time Object Detection: YOLO, Pose Estimation, and Beyond

The ball moves fast enough that real-time object detection has to be nearly instantaneous. When you watch Sony’s elite ping-pong robot beat a skilled human player, you’re seeing some of the fastest object detection deployed in any physical system today. The architecture draws on frameworks similar to YOLO (You Only Look Once) — still one of the quickest real-time detection tools available.

YOLO’s role in ball tracking. YOLO-style models process an entire image frame in a single pass rather than scanning it region by region. Consequently, they’re fast enough for real-time sports use. Sony’s version is almost certainly a custom-trained variant built specifically for small, fast-moving objects. I’ve seen generic YOLO struggle with objects this small — a custom model is the right call.
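One piece of the YOLO-style pipeline that’s easy to show in isolation is the post-processing. Many single-pass detectors emit several overlapping candidate boxes for the same object, and a non-maximum suppression (NMS) step keeps only the best one. The sketch below is plain Python with hypothetical detections. It is not Sony’s code, and some newer YOLO variants skip NMS entirely.

```python
def iou(a, b):
    """Intersection over union of two boxes given as (x1, y1, x2, y2)."""
    ax1, ay1, ax2, ay2 = a
    bx1, by1, bx2, by2 = b
    inter_w = max(0.0, min(ax2, bx2) - max(ax1, bx1))
    inter_h = max(0.0, min(ay2, by2) - max(ay1, by1))
    inter = inter_w * inter_h
    union = (ax2 - ax1) * (ay2 - ay1) + (bx2 - bx1) * (by2 - by1) - inter
    return inter / union if union > 0 else 0.0


def non_max_suppression(detections, iou_threshold=0.5):
    """Keep the highest-scoring box from each cluster of overlapping boxes.

    detections: list of (box, score) pairs.
    """
    kept = []
    for box, score in sorted(detections, key=lambda d: d[1], reverse=True):
        # Keep this box only if it does not overlap a box we already kept.
        if all(iou(box, kept_box) < iou_threshold for kept_box, _ in kept):
            kept.append((box, score))
    return kept


# Hypothetical raw detections: two overlapping boxes on the ball, one elsewhere.
raw = [
    ((100, 100, 110, 110), 0.90),
    ((101, 101, 111, 111), 0.80),
    ((300, 200, 310, 210), 0.70),
]
filtered = non_max_suppression(raw)
```

Here the second box overlaps the first heavily, so it gets dropped and two detections survive. For a single ball, a real tracker would typically go one step further and keep only the candidate closest to the predicted trajectory.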

But ball tracking is only half the problem. The robot also needs to read the human player’s movements, which is where pose estimation comes in.

Pose estimation maps the player’s body in real time. Frameworks like OpenPose and MediaPipe detect key joints — wrists, elbows, shoulders, hips — and from those positions, the robot can infer:

- The stroke type being used (forehand, backhand, smash)

- The probable shot direction

- The amount of spin likely being applied

- Broader tendencies and strategic patterns
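To show how raw keypoints turn into something a stroke classifier could use, here’s a small sketch that computes the elbow angle from three 2D joint positions. Pose frameworks like OpenPose and MediaPipe output keypoint coordinates, but the `joint_angle` helper and the coordinates below are purely illustrative assumptions on my part.

```python
import math


def joint_angle(a, b, c):
    """Angle at joint b, in degrees, formed by the segments b->a and b->c.

    a, b, c are (x, y) keypoints, e.g. shoulder, elbow, wrist.
    """
    v1 = (a[0] - b[0], a[1] - b[1])
    v2 = (c[0] - b[0], c[1] - b[1])
    n1 = math.hypot(*v1)
    n2 = math.hypot(*v2)
    if n1 == 0 or n2 == 0:
        raise ValueError("coincident keypoints: angle is undefined")
    cos_angle = (v1[0] * v2[0] + v1[1] * v2[1]) / (n1 * n2)
    cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding error
    return math.degrees(math.acos(cos_angle))


# Hypothetical keypoints in image coordinates.
shoulder, elbow, wrist = (0.0, 0.0), (1.0, 0.0), (1.0, 1.0)
elbow_angle = joint_angle(shoulder, elbow, wrist)  # right angle at the elbow
```

Tracking how angles like this change over a few frames is one plausible feature for telling a forehand from a backhand, though a real system would likely feed many such features (or the raw keypoints) into a learned classifier.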

Similarly, the system uses temporal analysis across multiple rallies to build a picture of how a specific player behaves under pressure. If someone defaults to cross-court backhands when they’re scrambling, the robot picks that up and adapts. Fair warning: if you ever play this thing, it’s learning you in real time.
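The simplest possible version of that opponent modeling is a frequency table: count which shot a player chooses in each situation, then predict the most common one. The `TendencyModel` class and the situation and shot labels are hypothetical, and a real system would almost certainly use something richer than raw counts.

```python
from collections import Counter, defaultdict


class TendencyModel:
    """Counts observed shots per situation and predicts the most frequent."""

    def __init__(self):
        self._counts = defaultdict(Counter)

    def observe(self, situation, shot):
        """Record that the player chose `shot` in `situation`."""
        self._counts[situation][shot] += 1

    def predict(self, situation):
        """Return (most_likely_shot, observed_frequency), or None if unseen."""
        counts = self._counts.get(situation)
        if not counts:
            return None
        shot, n = counts.most_common(1)[0]
        return shot, n / sum(counts.values())


model = TendencyModel()
for shot in ["cross-court backhand"] * 3 + ["down-the-line"]:
    model.observe("scrambling", shot)

prediction = model.predict("scrambling")
```

After four observed rallies, this toy model expects a cross-court backhand three times out of four when the player is scrambling.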

Here’s a comparison of the core AI techniques powering the system:

| Technology | Purpose | Speed | Key Strength |
|---|---|---|---|
| YOLO-based detection | Ball localization | Under 5 ms per frame | Single-pass processing |
| Stereo vision | 3D position mapping | Under 2 ms per frame | Depth accuracy |
| Pose estimation | Player movement analysis | 10–30 ms per frame | Strategy prediction |
| Physics simulation | Trajectory forecasting | Under 1 ms per calculation | Spin and bounce modeling |
| Reinforcement learning | Shot selection | Pre-computed policies | Adaptive gameplay |

Additionally, all of these models run on edge hardware — no cloud involved. And that’s not a minor detail. Latency in table tennis is brutal. A round trip to a remote server adds 50 to 100 milliseconds, which is simply too slow. Therefore, all inference happens locally on specialized GPU hardware sitting right next to the robot.
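Some back-of-the-envelope arithmetic shows why a network round trip hurts. The sketch below assumes the ball travels the full 2.74-meter length of a regulation table at constant speed, uses the upper end of the roughly 100 to 150 ms end-to-end pipeline figure quoted later in this article, and picks a 15 m/s shot speed as my own illustrative assumption. The function names are hypothetical too.

```python
TABLE_LENGTH_M = 2.74  # regulation table length in meters


def flight_time_ms(speed_mps, distance_m=TABLE_LENGTH_M):
    """Time for the ball to cover distance_m at a constant speed, in ms."""
    if speed_mps <= 0:
        raise ValueError("speed must be positive")
    return distance_m / speed_mps * 1000.0


def slack_after_pipeline_ms(speed_mps, pipeline_ms, network_ms=0.0):
    """Milliseconds left over once the pipeline (and any network hop) is done."""
    return flight_time_ms(speed_mps) - pipeline_ms - network_ms


# Assumed fast shot: 15 m/s. Assumed pipeline: 150 ms from camera to paddle.
edge_slack = slack_after_pipeline_ms(15.0, pipeline_ms=150.0)
cloud_slack = slack_after_pipeline_ms(15.0, pipeline_ms=150.0, network_ms=50.0)
```

Under these assumptions the local pipeline finishes with a few tens of milliseconds to spare, while even the optimistic 50 ms round trip pushes the total past the ball’s flight time. The exact numbers shift with shot speed and distance, but the margin is thin either way.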

The models were trained on thousands of hours of footage. Importantly, that training data mixes professional matches, amateur games, and synthetic simulations, which is what lets the system handle wildly different playing styles. More variety in training data means fewer surprises in the real world.

Robotic Arm Control: How the Robot Returns Shots With Precision

Detecting the ball is impressive. Returning it accurately is a completely different engineering problem. When you watch Sony’s elite ping-pong robot beat experienced players, the arm’s motion looks almost fluid — and that fluidity comes from motion planning algorithms doing a lot of quiet, invisible work.

Degrees of freedom matter here. Sony’s robotic arm likely operates with six or more, meaning it has at least six independently driven joint axes. That’s enough for the paddle to reach virtually any point above the table and hit at almost any angle.

Motion planning breaks into several distinct stages:

1. Target calculation — The system figures out where and when the ball will reach the strike zone

2. Shot selection — The AI picks the best return (topspin, backspin, flat, angled)

3. Path planning — Inverse kinematics calculates the exact joint angles needed

4. Execution — Servo motors drive each joint along the computed path

5. Correction — Real-time feedback adjusts the stroke mid-motion if something’s off

Inverse kinematics is the mathematical engine underneath all of this. Given a desired paddle position and angle, it works backward through the arm’s joint chain to find the right setup. Although it’s computationally heavy, modern solvers crack it in microseconds.
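Inverse kinematics is easiest to see on a toy arm. The sketch below solves it analytically for a two-link planar arm, which is far simpler than a six-axis arm but uses the same idea of working backward from a target position to joint angles. The link lengths, the target point, and the function names are assumptions for illustration only.

```python
import math


def two_link_ik(x, y, l1, l2):
    """Joint angles (shoulder, elbow) in radians that place the tip at (x, y).

    Returns one of the two mirror-image solutions (the one with elbow >= 0).
    """
    d2 = x * x + y * y
    # Law of cosines gives the elbow angle from the target distance.
    cos_elbow = (d2 - l1 * l1 - l2 * l2) / (2.0 * l1 * l2)
    if not -1.0 <= cos_elbow <= 1.0:
        raise ValueError("target is out of reach")
    elbow = math.acos(cos_elbow)
    shoulder = math.atan2(y, x) - math.atan2(
        l2 * math.sin(elbow), l1 + l2 * math.cos(elbow)
    )
    return shoulder, elbow


def forward_kinematics(shoulder, elbow, l1, l2):
    """Tip position (x, y) for the given joint angles."""
    x = l1 * math.cos(shoulder) + l2 * math.cos(shoulder + elbow)
    y = l1 * math.sin(shoulder) + l2 * math.sin(shoulder + elbow)
    return x, y


# Hypothetical arm: 40 cm upper link, 30 cm lower link, target at (0.5, 0.3).
angles = two_link_ik(0.5, 0.3, l1=0.4, l2=0.3)
reached = forward_kinematics(*angles, l1=0.4, l2=0.3)
```

Running the angles back through `forward_kinematics` lands on the original target, which is a handy sanity check. Arms with six or more joints generally have no such tidy closed form for arbitrary geometries, so they tend to rely on numerical or specialized analytic solvers.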

The servo motors deserve more attention than they usually get — they’re not off-the-shelf parts. Sony uses high-torque, low-latency actuators capable of rapid acceleration and direction changes in milliseconds. Consequently, the arm handles rallies where the ball crosses the table in under 300 milliseconds. That’s not a lot of time.

Spin control is the real kicker. The robot doesn’t just return the ball — it applies deliberate spin to control placement and make life harder for its opponent. By varying paddle angle and stroke speed, it generates topspin, backspin, and sidespin with remarkable consistency. I’ve tested plenty of automated ball-return systems over the years. None of them do this.

Sony’s robotics work builds on decades of industrial automation experience. Their semiconductor and sensing division produces the image sensors in the cameras, and their actuator technology comes directly from precision manufacturing equipment. This convergence of sensing, computing, and actuation is what makes the whole thing possible — no single piece would be enough on its own.

Edge AI Inference and the Software Architecture Behind Every Rally

The software architecture here is a genuine masterclass in edge AI inference. Specifically, every decision — ball detection, shot selection, arm movement — happens on local hardware with zero cloud dependency.

Why does edge computing matter so much? Table tennis demands reaction times under 200 milliseconds. A cloud-based system introduces unacceptable lag. Therefore, Sony’s engineers built a fully self-contained processing pipeline from the ground up.

The architecture runs in layers:

- Sensor layer — High-speed cameras (and optional IMU sensors) feed raw data into the system

- Perception layer — Computer vision models output ball coordinates, player poses, and environmental data

- Decision layer — Reinforcement learning policies pick the best shot based on current game state

- Control layer — Motion planning turns the chosen shot into joint commands

- Actuation layer — Servo motors carry out those commands with real-time feedback loops

Each layer operates on a strict time budget. The total pipeline — from photon hitting the camera sensor to paddle striking the ball — has to finish in roughly 100 to 150 milliseconds. That’s the whole budget. Every millisecond counts.
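One way to reason about a strict time budget is to write it down as data and check it. The per-layer numbers below are my own illustrative assumptions, loosely based on the per-frame figures in the comparison table earlier, with the remainder handed to the arm. Sony hasn’t published a breakdown like this.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    name: str
    budget_ms: float


# Illustrative per-layer budgets (assumptions, not published figures).
STAGES = (
    Stage("sensor", 1.0),
    Stage("perception", 37.0),  # detection + stereo + pose, if run serially
    Stage("decision", 2.0),
    Stage("control", 1.0),
    Stage("actuation", 100.0),
)
TOTAL_BUDGET_MS = 150.0


def slack_ms(stages, total_ms):
    """Time left in the end-to-end budget after all stage budgets."""
    return total_ms - sum(stage.budget_ms for stage in stages)


def overruns(stages, measured_ms):
    """Names of stages whose measured time exceeded their budget."""
    return [s.name for s in stages if measured_ms.get(s.name, 0.0) > s.budget_ms]


spare = slack_ms(STAGES, TOTAL_BUDGET_MS)
late = overruns(STAGES, {"perception": 42.0, "control": 0.4})
```

The point of the exercise is that perception and decision-making only get a small slice of the total. Physically moving the arm takes most of the time, so every millisecond saved upstream buys more room for the stroke itself.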

Reinforcement learning handles shot selection. The RL agent trained in simulation, playing millions of virtual matches against modeled opponents. It learned through trial and error which shots work in which situations. Importantly, those learned policies transfer to the physical robot with minimal performance loss — which is harder than it sounds.
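To give a flavor of that trial-and-error learning, here’s a heavily simplified sketch: a single-state, bandit-style learner that picks between three shots with an epsilon-greedy rule and keeps a running average of how often each one wins the point. Real RL for this task would condition on the game state and use far richer simulation. The shot names, win probabilities, and `train_shot_policy` function are all hypothetical.

```python
import random

SHOTS = ("topspin", "backspin", "flat")


def train_shot_policy(win_prob, episodes=20000, epsilon=0.1, seed=0):
    """Estimate the value of each shot by trial and error.

    win_prob: assumed probability that each shot wins the point against a
    simulated opponent. The learner never sees these values directly.
    """
    rng = random.Random(seed)
    value = {shot: 0.0 for shot in SHOTS}
    count = {shot: 0 for shot in SHOTS}
    for _ in range(episodes):
        if rng.random() < epsilon:
            shot = rng.choice(SHOTS)          # explore: try a random shot
        else:
            shot = max(SHOTS, key=value.get)  # exploit: best estimate so far
        reward = 1.0 if rng.random() < win_prob[shot] else 0.0
        count[shot] += 1
        # Incremental running average of the observed rewards.
        value[shot] += (reward - value[shot]) / count[shot]
    return value


# Simulated opponent (assumption): weak against topspin.
values = train_shot_policy({"topspin": 0.7, "backspin": 0.4, "flat": 0.2})
best_shot = max(values, key=values.get)
```

After enough simulated points, the learner’s estimates settle near the true win rates and it favors topspin. The hard part in practice is what the paragraph above describes: getting a policy learned against a simulator to hold up on real hardware.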

That sim-to-real transfer problem is one of the hottest areas in robotics research right now. OpenAI’s work on sim-to-real showed the approach with a Rubik’s Cube-solving robotic hand. Sony applies similar principles here, although the domains are obviously different. The underlying method is consistent, and it works.

The hardware likely includes NVIDIA GPU modules or custom Sony silicon. NVIDIA’s Jetson platform is everywhere in edge AI robotics because it delivers the parallel processing power needed to run multiple neural networks at the same time. Furthermore, the system almost certainly uses model optimization techniques like quantization and pruning, which shrink the models and speed up inference without gutting accuracy. A full-precision YOLO model is probably too slow here. A quantized version can run roughly twice as fast with nearly identical detection performance, depending on the hardware. That tradeoff is worth it.
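If quantization sounds abstract, the core idea fits in a few lines. The sketch below applies symmetric 8-bit quantization to a handful of made-up weights: each float is mapped to an integer in [-127, 127] using a single scale factor, then mapped back to see how much precision was lost. Real toolchains add calibration data, per-channel scales, and hardware-specific kernels, and nothing here reflects Sony’s actual setup.

```python
def quantize_int8(weights):
    """Symmetric int8 quantization: returns (integer values, scale)."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the int8 range.
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Map the integers back to approximate float weights."""
    return [q * scale for q in quantized]


# Hypothetical weights from one layer.
weights = [0.5, -1.27, 0.003, 1.0]
q_weights, scale = quantize_int8(weights)
restored = dequantize(q_weights, scale)
max_error = max(abs(w - r) for w, r in zip(weights, restored))
```

Each weight now fits in one byte instead of four, and the worst-case rounding error is at most half a quantization step. Whether that error is harmless depends on the model, which is why quantized networks are normally re-validated against the full-precision version.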

What Happens When the Robot Faces Human Champions

Watching this robot go up against top-ranked players reveals something genuinely fascinating about human versus machine dynamics in sport. It doesn’t play like a human. It plays like a machine that has studied thousands of humans and figured out what makes them beatable.

Consistency is the robot’s sharpest weapon. Human players have off days, get nervous, and tire out. The robot doesn’t experience any of that. Every stroke is calculated, every return optimized, and consequently, human players face relentless, error-free rallies that grind them down mentally. That’s a real thing — I’ve read accounts from players who described it as exhausting in a way that human opponents simply aren’t.

But the robot has real limitations too. Here’s an honest breakdown:

- Strengths — Superhuman reaction time, perfect consistency, adaptive mid-match strategy, tireless operation

- Weaknesses — Can’t move around the table the way a human can, no dramatic lunges, restricted to a fixed position

- Surprising capabilities — Reads spin that human eyes literally can’t detect, adjusts strategy mid-match based on opponent patterns

Top players typically try to exploit the physical constraints — wide angles, drop shots, dramatic pace changes. Nevertheless, the robot compensates with prediction, starting its arm movement before the opponent even makes contact with the ball. It’s anticipating, not just reacting.

Sony has showcased the robot at CES and various technology events, and the response has been enormous. Moreover, the implications go well beyond a cool demo:

- Training — Professional athletes get a tireless, fully customizable practice partner

- Rehabilitation — Modified versions could support physical therapy for patients recovering from injuries

- Research — The technology advances real-time AI decision-making across multiple fields

- Manufacturing — The same control algorithms directly improve industrial robotic arms

Additionally, Sony’s work fits into a broader trend in robotics. While companies like Boston Dynamics focus on locomotion, Sony is showing that manipulation and real-time reaction are equally important frontiers. Similarly, the sensor fusion techniques built for ping-pong transfer directly to autonomous vehicles and drone navigation. A ping-pong robot is quietly teaching a lot of other machines how to be better.

Sony’s Position in Sports Robotics and AI

Sony isn’t alone in sports robotics, but its ping-pong robot stands apart for a specific reason. Notably, it combines consumer-grade polish with genuinely research-grade performance — a combination that’s rarer than you’d think.

How Sony stacks up:

| Company/Project | Sport | AI Approach | Stage |
|---|---|---|---|
| Sony | Table tennis | Vision + RL + robotic arm | Advanced prototype |
| Google DeepMind | Various | Simulation-first RL | Research phase |
| Agility Robotics | General manipulation | Humanoid platform | Commercial pilot |
| KUKA (industrial) | Table tennis (demo) | Pre-programmed paths | Limited demo |
| MIT CSAIL | Various | Learning-based control | Academic research |

Sony’s real advantage is vertical integration. They make their own image sensors, design their own AI chips, and build their own actuators. Therefore, they can optimize the entire stack — from photon to paddle — in ways that companies stitching together third-party parts simply can’t.

Moreover, Sony’s AI research division has published extensively on reinforcement learning for physical systems. Their approach blends model-based and model-free RL. The model-based side uses physics equations to predict ball behavior, while the model-free side learns nuanced strategy through experience. This hybrid method outperforms either approach on its own, and it’s the kind of detail that separates serious research from demo-ware.

Because Sony controls so much of its own stack, optimization opportunities exist at every level. When you watch Sony’s elite ping-pong robot beat a skilled opponent, you’re watching at least five engineering disciplines working in harmony: optics, computer vision, machine learning, control theory, and mechanical engineering. I’ve covered a lot of robotics projects that nail two or three of those. Nailing all five at once is something else entirely.

The sports robotics space is growing fast. Although exact market figures vary, the intersection of AI and athletics is pulling in serious investment from major tech players. Importantly, the lessons Sony learns here feed back into everything else they make — better motion tracking for PlayStation VR, sharper autofocus for their camera division, faster image sensors from their semiconductor business. It’s a cycle where a ping-pong robot quietly makes a lot of other products better.

Conclusion

Here’s the thing: if you haven’t taken the time to watch Sony’s elite ping-pong robot beat top-ranked players, find the footage and watch it properly. The technology underneath — YOLO-based detection, reinforcement learning shot selection, real-time edge inference — represents some of the most advanced edge AI work happening in any physical system today.

So if this space interests you, here are some practical next steps:

- Explore Sony AI’s research — Their official site has published papers on robotic control and computer vision worth digging into

- Learn YOLO — Ultralytics’ documentation is a solid starting point for understanding real-time object detection

- Try pose estimation — OpenPose or MediaPipe will get you hands-on experience fast

- Study reinforcement learning — Sim-to-real transfer is one of the most practically useful RL techniques right now

- Follow CES and robotics conferences — Sony regularly shows its latest work at these events

Watching Sony’s elite ping-pong robot beat human opponents isn’t just entertaining. It’s a window into where AI-powered robotics is genuinely headed. Furthermore, it shows that real-time inference, precision control, and adaptive learning can all work together in demanding physical environments, not just in simulation.

Sports robotics is still young. Nevertheless, Sony’s ping-pong robot makes it pretty clear where things are going — and honestly, that direction is exciting enough that I’ll keep watching.

FAQ

How fast can Sony’s ping-pong robot react to a shot?

The robot’s total reaction time, from detecting the ball to completing a return stroke, is roughly 100 to 150 milliseconds. Specifically, the computer vision system processes each frame in under 5 milliseconds. The remaining time splits between trajectory prediction, shot selection, and arm movement. Because that whole pipeline fits inside the time the ball takes to cross the table, the robot can keep up even in rallies where the crossing takes under 300 milliseconds.

What AI algorithms does the ping-pong robot use?

Sony’s robot combines several technologies: YOLO-style object detection for ball tracking, pose estimation for reading the opponent’s movements, and reinforcement learning for shot selection. Additionally, physics-based models handle trajectory prediction, spin modeling, and bounce behavior. This hybrid approach — data-driven AI working alongside traditional physics equations — is more robust than either method alone.

Can the robot actually beat professional table tennis players?

It’s shown genuinely impressive performance against highly skilled players. However, it has real physical limits — it can’t move around the table the way a human can, and top professionals exploit this by targeting extreme angles. Nevertheless, the robot’s superhuman reaction time and relentless consistency make it a tough opponent. Most players find sustained rallies against it mentally draining in ways they don’t expect.

Does the robot use cloud computing or process everything locally?

Everything runs locally on edge hardware — no cloud dependency at all. Table tennis demands sub-200-millisecond response times, and cloud computing introduces too much lag to be viable. Therefore, Sony designed the entire processing pipeline to run on local GPU hardware positioned near the robot, keeping decision-making as fast as physically possible.

Where can I watch Sony’s elite ping-pong robot beat top players?

You can find footage of Sony’s elite ping-pong robot beating opponents in official Sony AI demonstrations posted online. Sony has shown the robot at CES and other major technology conferences. Furthermore, technology media outlets have published detailed video coverage of its matches. A quick search for “Sony AI table tennis robot” on any major video platform will surface the best footage available.

What are the practical applications beyond entertainment?

Quite a few, actually. Professional athletes can train against a tireless, fully customizable opponent. Rehabilitation centers could adapt the technology for physical therapy. Moreover, the underlying algorithms — real-time object detection, motion planning, and adaptive control — transfer directly to manufacturing robotics, autonomous vehicles, and drone navigation. Importantly, Sony’s vertical integration means these advances ripple outward into their consumer electronics products too. The ping-pong robot is doing more work than it looks like.