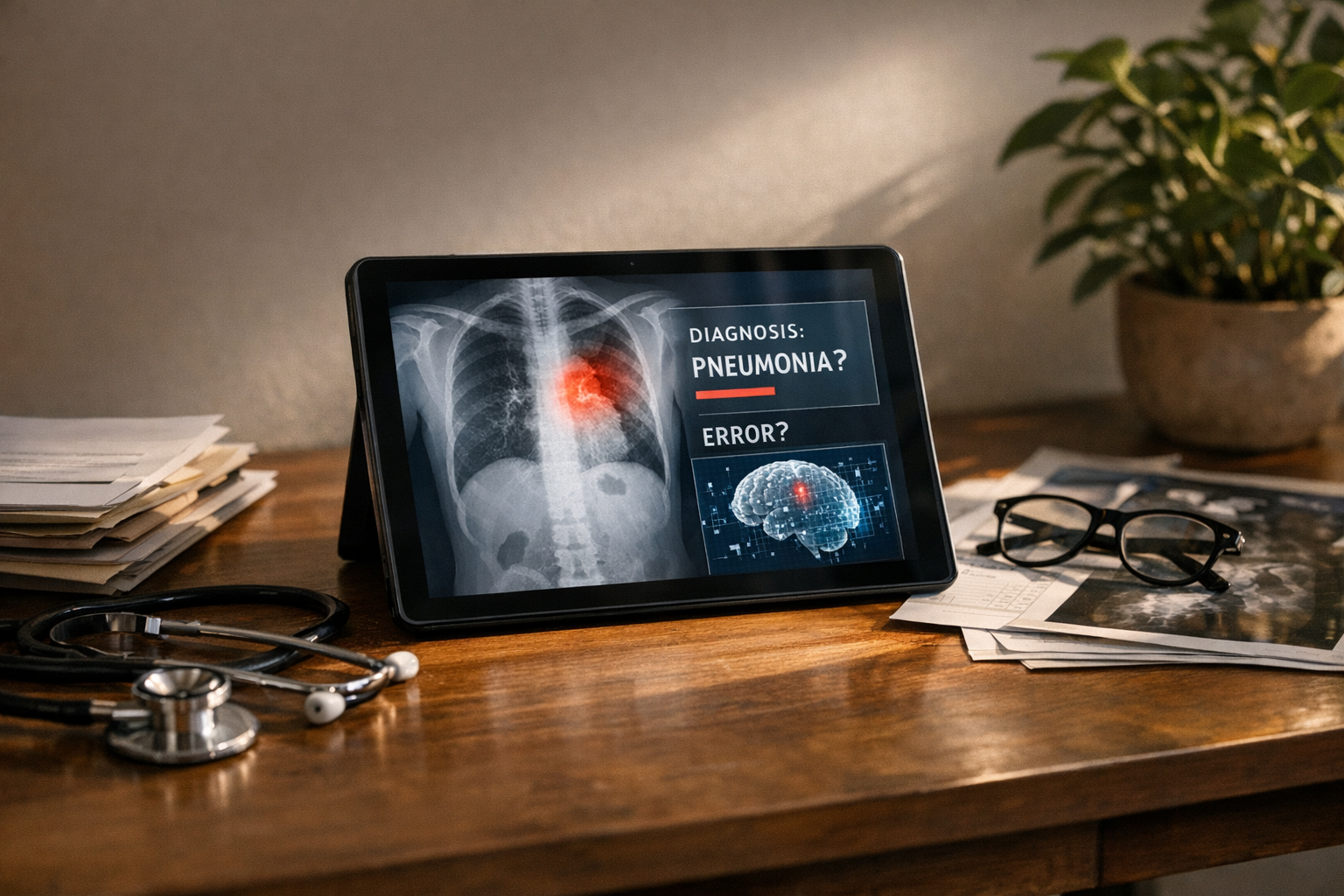

AI hallucination in healthcare diagnosis isn’t just a technical glitch, and Ontario’s medical AI systems are no exception. It’s a patient safety emergency, and honestly, the healthcare industry is only beginning to reckon with how serious that is. When a clinical AI confidently generates a wrong diagnosis, real people suffer real harm.

Hospitals across North America are racing to adopt AI tools, and Ontario’s healthcare system is no exception. However, the rush to deploy has badly outpaced our ability to manage their most dangerous flaw: hallucinations. These are the moments when AI fabricates plausible-sounding but entirely false medical information — and does so with complete, unearned confidence.

Here’s the thing: a hallucinating chatbot that invents a pasta recipe is merely annoying. A hallucinating diagnostic AI that invents a condition — or misses one — can kill. Furthermore, the legal frameworks governing these failures remain dangerously underdeveloped, especially in Canadian provinces like Ontario.

How AI Hallucinations Threaten Healthcare Diagnosis in Ontario

To understand the crisis, you need to understand the mechanism. AI hallucination occurs when a large language model (LLM) or machine learning system generates output that sounds confident but has no basis in its training data or reality. This particular failure mode genuinely keeps me up at night.

In medicine, hallucination takes several dangerous forms:

- Fabricated diagnoses — the AI suggests a condition the patient doesn’t have

- Invented citations — the system references medical studies that don’t exist (and they look completely real)

- Missed critical findings — the AI overlooks obvious pathology in imaging or lab results

- Contradictory recommendations — treatment suggestions that flatly conflict with established clinical guidelines

Ontario’s healthcare system is particularly exposed here. The province has been actively integrating AI into radiology, pathology, and primary care triage. Ontario Health oversees digital health strategy across the province. Nevertheless, no provincial framework specifically addresses liability when AI-generated diagnoses go wrong.

The problem is fundamentally architectural. Large language models such as GPT-4 and Med-PaLM, and the clinical tools built on them, generate text by predicting a statistically likely next token. They don’t “understand” medicine in any meaningful sense. Consequently, they can produce outputs that look medically authoritative but are completely fabricated.

A key distinction matters here. Traditional software bugs are reproducible — you can find them, document them, fix them. AI hallucinations are often stochastic, meaning they’re random and genuinely hard to predict. That makes them uniquely dangerous in clinical settings and, notably, uniquely difficult to litigate.
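The sketch below illustrates why identical inputs can yield different outputs. It is a toy, not any vendor’s model: the candidate tokens and their scores are invented for illustration, and real systems sample over far larger vocabularies.

```python
import math
import random

# Hypothetical next-token scores for one fixed prompt; values are illustrative only.
LOGITS = {"pneumonia": 2.0, "bronchitis": 1.6, "pulmonary embolism": 0.4}


def softmax(logits, temperature=1.0):
    """Convert raw scores to probabilities; a higher temperature flattens them."""
    scaled = {tok: score / temperature for tok, score in logits.items()}
    peak = max(scaled.values())  # subtract the max for numerical stability
    exps = {tok: math.exp(s - peak) for tok, s in scaled.items()}
    total = sum(exps.values())
    return {tok: e / total for tok, e in exps.items()}


def sample_token(logits, temperature, rng):
    """Draw one token at random according to the softmax probabilities."""
    probs = softmax(logits, temperature)
    tokens = list(probs)
    return rng.choices(tokens, weights=[probs[t] for t in tokens], k=1)[0]


def greedy_token(logits):
    """Always pick the highest-scoring token, which is deterministic."""
    return max(logits, key=logits.get)


rng = random.Random(0)
samples = [sample_token(LOGITS, temperature=1.0, rng=rng) for _ in range(1000)]
counts = {tok: samples.count(tok) for tok in LOGITS}
print(counts)                # the same prompt yields a mix of answers
print(greedy_token(LOGITS))  # greedy decoding always returns the top-scoring token
```

Sampling is one reason a failure can be hard to reproduce: the less likely answer appears only some of the time, so rerunning the same case may not show the same error.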

Real Cases Where AI Hallucination Caused Patient Harm

The liability crisis isn’t theoretical. Real cases are already emerging — and the pattern is concerning.

The radiology misread problem. In 2023, researchers at Stanford found that AI diagnostic tools for chest X-rays produced clinically significant errors in a meaningful percentage of cases. Some errors were hallucinations — the AI “saw” nodules that weren’t there. Others were omissions. Both categories cause harm, but fabricated findings are particularly insidious because they look like positive diagnoses.

Chatbot-driven misdiagnosis. The National Library of Medicine has published multiple studies documenting cases where AI chatbots provided dangerously inaccurate medical advice. In one documented scenario, an AI suggested a benign diagnosis for symptoms that actually indicated a cardiac emergency. That’s not a minor error. That’s the kind of miss that ends lives.

Ontario-specific concerns. Ontario hospitals using AI-assisted triage systems have reported instances where algorithms prioritized patients incorrectly. Although no public lawsuits have emerged yet in Ontario specifically, legal experts say it’s only a matter of time. I’d bet on sooner rather than later.

The medication interaction gap. AI systems have hallucinated safe drug combinations that are actually contraindicated. For elderly patients on multiple medications — common in Ontario’s aging population — this error type is potentially fatal. It’s also one of the harder errors to catch in a busy clinical environment.

Moreover, the documentation trail creates additional liability exposure. When an AI system generates a hallucinated diagnosis and a clinician acts on it, the electronic health record preserves that entire decision chain. Consequently, plaintiffs’ attorneys can reconstruct exactly how AI hallucination in healthcare diagnosis contributed to harm — step by step, timestamp by timestamp.

Here’s what makes this a true crisis: patients trust AI-generated information, often more than they should. Studies show people frequently trust algorithmic recommendations over human ones. Therefore, a confidently stated hallucination may override a patient’s own instinct to seek a second opinion. That’s a deeply uncomfortable dynamic.

Regulatory Gaps in Ontario Medical AI

The regulatory picture is a patchwork with gaping holes. Notably, no single framework adequately addresses AI hallucination in Ontario’s diagnostic AI deployments, and that gap is getting more dangerous every month.

| Regulatory Area | Current Status (Canada/Ontario) | Current Status (United States) |
|---|---|---|
| AI device approval | Health Canada reviews under Medical Devices Regulations | FDA’s 510(k) pathway covers AI/ML devices |
| Hallucination-specific standards | None exist | None exist |
| Post-market surveillance for AI errors | Limited requirements | FDA adverse event reporting applies |
| Provincial liability framework | Common law negligence applies | Varies by state; product liability emerging |
| Mandatory AI disclosure to patients | Not required | Not federally required |
| Clinical validation requirements | Voluntary best practices | FDA requires clinical evidence for clearance |

Health Canada treats AI diagnostic tools as medical devices. However, the approval process wasn’t designed for systems that can produce different outputs for identical inputs — which is a fundamental mismatch. Similarly, the U.S. Food and Drug Administration has cleared hundreds of AI medical devices but hasn’t established hallucination-specific testing requirements. Both regulators are playing catch-up with technology that moved faster than their frameworks.

The Canadian gap is especially concerning. Ontario’s Regulated Health Professions Act governs healthcare providers but says nothing about AI-assisted decision-making. Consequently, when an AI hallucinates and a physician follows its recommendation, liability falls primarily on the clinician. The AI vendor often escapes accountability entirely, which is, frankly, absurd.

Additionally, no mandatory reporting system exists for AI hallucinations in clinical settings. A radiologist who catches an AI error might correct it quietly and move on. That error never enters any database. Consequently, the same hallucination pattern could harm patients at dozens of other facilities before anyone notices a trend.

The informed consent question looms large. Should patients be told when AI contributes to their diagnosis? Ontario’s consent framework doesn’t require it. Meanwhile, patient advocacy groups argue — compellingly — that AI involvement in diagnosis is a material fact that affects consent. This debate is going to get much louder.

The European Union’s AI Act classifies medical AI as “high-risk” and imposes strict transparency requirements. Canada and Ontario have nothing comparable. This regulatory vacuum makes the liability crisis around hallucinated diagnoses considerably worse. Importantly, it also leaves patients with no meaningful recourse when things go wrong.

Who Bears Liability When Ontario Medical AI Causes Harm

The liability question is genuinely unsettled. And that uncertainty itself is part of the crisis — nobody wants to own this problem.

Potential liable parties include:

- The healthcare provider — Physicians have a duty of care. If they rely on AI without exercising adequate clinical judgment, they’re exposed. Ontario’s medical malpractice framework doesn’t distinguish between human error and AI-assisted error — the standard of care is the standard of care.

- The hospital or health system — Institutions that deploy AI tools may face vicarious liability. They chose the system, trained staff on it, and bear responsibility for how it’s built into care workflows.

- The AI vendor — Software companies could face product liability claims. However, most vendor contracts include extensive liability disclaimers — and I’ve read enough of these to know they’re written by very careful lawyers. Whether those disclaimers hold up in court when patient harm occurs is a different question entirely.

- The data providers — If hallucinations stem from biased or incomplete training data, the organizations that supplied that data could share liability. This one’s largely untested, but it’s coming.

Importantly, Ontario courts haven’t yet ruled on a case involving an AI-hallucinated diagnosis. However, precedent from other technology liability cases suggests courts will examine foreseeability closely. Was it foreseeable that the AI could hallucinate? Almost certainly yes, and vendors know this. Did the deploying institution take reasonable precautions? That’s where cases will be won or lost.

The “learned intermediary” doctrine adds real complexity here. Traditionally, this doctrine shields medical product manufacturers because physicians act as informed intermediaries between product and patient. But does it apply when AI recommendations are so authoritative that they effectively override clinical judgment? Legal scholars remain divided, and notably, no Canadian court has weighed in yet.

Furthermore, class action potential exists. If an AI system produces systematic hallucinations across multiple patients, those affected could bring collective claims. The discovery process in such cases would force AI vendors to reveal their training data, validation methods, and known error rates — which is probably why vendors are so eager to avoid that scenario.

Insurance implications are already emerging. The Canadian Medical Protective Association provides liability protection to physicians and has begun issuing guidance on AI use. Nevertheless, coverage gaps exist for AI-specific failures. Malpractice premiums may rise as hallucination risks become better documented — and that cost ultimately flows back to the healthcare system.

Mitigation Strategies for Providers Using AI Diagnostic Tools

The crisis is real, but it isn’t hopeless. The difference between organizations that handle this well and those that don’t usually comes down to process discipline rather than technology choices.

Healthcare providers in Ontario can take concrete steps to reduce the risk of AI hallucination in diagnosis, starting today.

Clinical workflow safeguards:

- Never use AI as the sole diagnostic authority — treat it as one input among several, not the final word

- Set up mandatory human review for all AI-generated diagnoses before they reach patients

- Create clear documentation protocols that record when and how AI contributed to a clinical decision

- Set up escalation procedures for cases where AI output conflicts with clinical judgment — and make sure clinicians actually use them
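The review, documentation, and escalation safeguards above can be sketched as a small record type. This is an illustrative design under stated assumptions, not a standard or any EHR vendor’s API: the class name, field names, and example values are all hypothetical.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class AISuggestion:
    """One AI-generated diagnostic suggestion plus its human review trail (hypothetical schema)."""
    case_ref: str
    model_name: str
    suggested_diagnosis: str
    created_at: datetime = field(default_factory=lambda: datetime.now(timezone.utc))
    reviewer: Optional[str] = None
    accepted: Optional[bool] = None
    review_note: str = ""
    reviewed_at: Optional[datetime] = None

    def record_review(self, reviewer: str, accepted: bool, note: str = "") -> None:
        """Document who reviewed the suggestion, the decision, and when."""
        self.reviewer = reviewer
        self.accepted = accepted
        self.review_note = note
        self.reviewed_at = datetime.now(timezone.utc)

    def releasable(self) -> bool:
        """Nothing reaches the patient until a named clinician has accepted it."""
        return self.reviewer is not None and self.accepted is True

    def needs_escalation(self) -> bool:
        """A rejected suggestion means AI output conflicted with clinical judgment."""
        return self.accepted is False


suggestion = AISuggestion("case-001", "triage-model-x", "benign chest wall pain")
print(suggestion.releasable())        # unreviewed output is blocked
suggestion.record_review("dr_a", accepted=False, note="ECG changes conflict with AI output")
print(suggestion.needs_escalation())  # the conflict is flagged for escalation
```

Keeping the review decision in the same record as the AI output means the audit trail shows both what the system suggested and what the clinician actually decided.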

Technical validation measures:

- Demand hallucination rate data from AI vendors before procurement — if they won’t provide it, walk away

- Run regular “red team” exercises where clinicians deliberately test AI systems with edge cases

- Monitor AI output drift over time, because hallucination patterns can shift as models update

- Require vendors to provide model cards documenting known limitations and failure modes
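One simple way to watch for drift is to track how often clinicians override the AI and compare a recent window against a baseline. The sketch below assumes override decisions are already being logged. The function names, the 5-percentage-point threshold, and the sample data are illustrative choices, not validated clinical parameters.

```python
def override_rate(reviews):
    """Fraction of AI outputs that clinicians rejected (True means overridden)."""
    if not reviews:
        raise ValueError("no reviews in window")
    return sum(reviews) / len(reviews)


def drift_alert(baseline, recent, max_increase=0.05):
    """Flag when the recent override rate exceeds the baseline by more than max_increase."""
    return override_rate(recent) - override_rate(baseline) > max_increase


# Illustrative data: 4 of 100 outputs overridden before a model update, 12 of 100 after.
baseline = [True] * 4 + [False] * 96
recent = [True] * 12 + [False] * 88
print(drift_alert(baseline, recent))
```

An override rate is only a proxy: it misses hallucinations that clinicians fail to catch, so it complements red-team testing and case reviews rather than replacing them.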

Legal and administrative protections:

- Review and negotiate vendor liability clauses — don’t accept blanket disclaimers without pushback

- Update informed consent processes to disclose AI involvement in diagnosis

- Maintain detailed audit trails of all AI-assisted clinical decisions

- Purchase AI-specific liability coverage if your malpractice insurer offers it — not all do yet

Staff training essentials:

- Train all clinical staff on AI limitations, specifically hallucination risks — this can’t be a one-time onboarding checkbox

- Teach clinicians to recognize common hallucination patterns specific to their specialty

- Build a culture where questioning AI output is actively encouraged, not quietly penalized

- Run regular case reviews of AI errors to build institutional knowledge over time

Some organizations are taking a more radical approach: limiting AI to administrative tasks and keeping it entirely out of diagnostic workflows until regulatory frameworks mature. Although this gives up real efficiency gains, it removes almost all liability exposure from hallucinated diagnoses. It’s worth considering if your institution has the appetite for it.

Vendor selection matters enormously — more than most procurement teams realize. Not all medical AI systems are equal. Tools specifically designed for clinical use — like those reviewed through Health Canada’s medical device pathway — go through more rigorous validation than general-purpose LLMs repurposed for medical advice. Additionally, validated clinical tools are far more likely to carry documented hallucination benchmarks that procurement teams can actually compare. The real kicker? Many hospitals are deploying general-purpose tools without realizing the validation gap.

Conclusion

The AI hallucination crisis in Ontario’s medical AI demands immediate attention from healthcare providers, regulators, and technology vendors alike. False AI outputs in clinical settings aren’t minor inconveniences. They’re potential death sentences, and the legal and ethical accountability structures to address them barely exist.

Ontario and Canada broadly lag behind the EU in regulating high-risk AI applications. Meanwhile, hospitals continue deploying AI diagnostic tools without adequate hallucination safeguards. The liability exposure grows daily, and so does the patient risk.

Here’s what you should do right now:

- If you’re a healthcare administrator, audit every AI system touching patient diagnosis — document hallucination risks and mitigation measures before something goes wrong, not after

- If you’re a clinician, never trust AI output without independent verification — your clinical judgment remains the standard of care, full stop

- If you’re a policymaker, push hard for hallucination-specific testing requirements in medical AI approval processes. The EU has already classified medical AI as high-risk and imposed transparency requirements, and Canada can build on that

- If you’re a patient in Ontario or anywhere else, ask your provider whether AI contributed to your diagnosis — you have a right to know, even if nobody’s required to tell you yet

The technology isn’t going away. AI will eventually transform healthcare diagnosis for the better, and I genuinely believe that. But right now, the gap between AI capability and AI reliability in medicine represents a genuine liability crisis. Addressing AI hallucination in Ontario’s medical AI systems isn’t optional. It’s urgent, it’s overdue, and the clock is running.

FAQ

What exactly is an AI hallucination in healthcare diagnosis?

An AI hallucination in healthcare diagnosis occurs when an artificial intelligence system generates medical information that sounds completely plausible but is factually wrong. This could mean inventing a diagnosis, citing nonexistent medical studies, or recommending treatments that contradict established guidelines. The AI doesn’t “know” it’s wrong — it produces the most statistically likely output regardless of accuracy. In clinical settings, these errors can directly harm patients, and the confident delivery makes them especially dangerous.

How common are AI hallucinations in Ontario medical AI systems?

Precise rates are difficult to pin down because no mandatory reporting system exists in Ontario. However, research on general-purpose LLMs shows hallucination rates ranging from single-digit to double-digit percentages depending on task complexity. Importantly, medical AI systems specifically trained and validated for clinical use tend to hallucinate less than general-purpose models. Nevertheless, even a low hallucination rate becomes significant when multiplied across thousands of daily diagnostic decisions. The math gets uncomfortable fast.
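To make that arithmetic concrete, here is a small worked example. The 1% rate and the 2,000 daily decisions are assumed figures chosen for illustration, not measurements from any Ontario system, and the calculation treats each decision as independent, which is a simplification.

```python
def expected_errors(rate, decisions):
    """Expected number of hallucinated outputs at a given per-decision rate."""
    return rate * decisions


def prob_at_least_one(rate, decisions):
    """Chance of at least one hallucination, assuming independent decisions."""
    return 1 - (1 - rate) ** decisions


rate = 0.01    # assumed 1% hallucination rate (illustrative)
daily = 2000   # assumed daily AI-assisted decisions (illustrative)

print(expected_errors(rate, daily))    # about 20 hallucinated outputs per day
print(prob_at_least_one(rate, daily))  # very close to 1
```

Under these assumptions, a rate that sounds small still produces roughly twenty wrong outputs every day, each of which depends on human review to be caught.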

Who is legally responsible when AI hallucination causes patient harm in Ontario?

Currently, liability for medical AI errors in Ontario falls primarily on the treating physician and the healthcare institution. The physician’s duty of care doesn’t diminish because they used AI, and that’s a point Ontario courts are likely to be firm on. Additionally, hospitals that deploy AI tools bear institutional responsibility for their selection and oversight. AI vendors may face product liability claims, though their contracts typically include significant liability limitations. Ontario courts haven’t yet established clear precedent specifically for AI hallucination cases, which is itself part of the problem.

Can patients in Ontario sue over an AI-generated misdiagnosis?

Yes. Patients can bring medical malpractice claims when AI-assisted diagnosis leads to harm. The legal standard remains the same: did the healthcare provider meet the accepted standard of care? If a clinician blindly followed a hallucinated AI recommendation without exercising independent judgment, that likely falls below the standard — and a plaintiff’s attorney will make exactly that argument. Furthermore, patients may also pursue claims against the AI vendor under product liability theories, although this legal path remains largely untested in Canadian courts. That will change.

What regulations govern medical AI in Canada and Ontario?

Health Canada regulates AI diagnostic tools as medical devices under the Medical Devices Regulations. However, these regulations weren’t designed for AI-specific risks like hallucination — and that design gap is consequential. Ontario has no provincial legislation specifically addressing AI hallucination in healthcare diagnosis. The Regulated Health Professions Act governs clinician conduct but doesn’t mention AI. Consequently, a significant regulatory gap exists that leaves both patients and providers in genuinely uncertain territory.

How can healthcare providers protect themselves from AI hallucination liability?

Providers should set up multiple overlapping safeguards, because no single measure is enough on its own. Always require human review of AI-generated diagnoses and document when and how AI contributed to clinical decisions. Negotiate vendor contracts to include meaningful liability sharing rather than accepting boilerplate disclaimers. Train staff to recognize hallucination patterns and update informed consent processes to disclose AI involvement. Additionally, consider purchasing AI-specific malpractice coverage where available. Treat AI as an assistant, never as an authority. These steps won’t eliminate risk entirely, but they substantially reduce liability exposure from hallucinated diagnoses, and they show the kind of reasonable precaution that matters enormously in court.

References

- Ontario Health

- National Library of Medicine

- Health Canada

- U.S. Food and Drug Administration

- Regulated Health Professions Act

- AI Act

- Canadian Medical Protective Association

- Health Canada’s medical device pathway