When I say human-in-the-loop (HITL) design might be the defining pattern in AI engineering, I mean it. We’re building increasingly autonomous systems, yet the smartest teams I’ve worked alongside know exactly when to pause and ask a real person. That tension — between speed and safety — is precisely where this pattern lives.

Here’s the thing: the concept itself isn’t complicated. You build AI that handles routine tasks automatically, but at critical decision points, the system routes to a human for verification. Consequently, you get the efficiency of automation combined with the judgment of someone who can actually be held accountable. It’s not a new idea — but it’s becoming essential as AI agents grow more powerful, and frankly, more dangerous when they’re wrong.

This post covers practical design patterns, working code examples, and real-world use cases. Whether you’re building healthcare tools, financial systems, or content moderation pipelines, you’ll find actionable blueprints here.

Why Human-in-the-Loop Will Define AI Engineering

Autonomous AI sounds incredible in demos.

In production, however, fully autonomous systems create liability nightmares that no amount of clever engineering can fix. A medical chatbot that misdiagnoses a patient can’t say “sorry, the model hallucinated.” A trading algorithm that takes a bad position can’t undo millions in losses. I’ve seen both scenarios play out, and neither ends well.

Human-in-the-loop solves this. Specifically, it creates structured checkpoints where human judgment overrides or confirms AI recommendations before something irreversible happens. The National Institute of Standards and Technology (NIST) AI Risk Management Framework explicitly calls for human oversight mechanisms. Furthermore, the EU AI Act mandates human oversight for high-risk AI systems — so this isn’t just good engineering practice, it’s increasingly the law.

Here’s why this pattern is accelerating right now:

- Regulatory pressure — New laws require human oversight in healthcare, finance, and hiring

- Liability concerns — Companies need someone accountable when AI fails

- Trust gaps — Users don’t trust fully autonomous systems for high-stakes decisions, and honestly, they shouldn’t yet

- Model limitations — Large language models (LLMs) still hallucinate and make confident errors at an uncomfortable rate

- Edge cases — AI handles 95% of cases well but fails badly on the remaining 5%

Moreover, the rise of agentic AI makes this more urgent than ever. When AI agents can browse the web, execute code, and make API calls on their own, the blast radius of a single mistake grows fast. Therefore, human-in-the-loop isn’t a nice-to-have — it’s a non-negotiable requirement for any production AI that does something consequential.

Core Design Patterns for Human-in-the-Loop Systems

Not all HITL implementations look the same. The pattern you choose depends on your risk tolerance, latency requirements, and domain — and picking the wrong one is an expensive mistake I’ve watched teams make repeatedly.

Here are the four primary patterns that actually work in production.

1. Approval Gate Pattern

The AI generates a recommendation, and a human approves or rejects it before execution. This is the most common pattern — simple, effective, and easy to explain to stakeholders who aren’t engineers.

Use cases: financial transactions above a threshold, medical treatment suggestions, content publishing workflows.

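```python
class ApprovalGate:
    """Auto-approve high-confidence decisions; route the rest to a human."""

    def __init__(self, confidence_threshold=0.85):
        self.confidence_threshold = confidence_threshold

    def evaluate(self, ai_decision):
        # ai_decision is any object exposing .confidence (0-1) and .supporting_data
        if ai_decision.confidence >= self.confidence_threshold:
            return {"action": "auto_approve", "reason": "High confidence"}

        # Below threshold: give the reviewer the evidence the model relied on
        return {
            "action": "route_to_human",
            "reason": (f"Confidence {ai_decision.confidence:.2f} below "
                       f"threshold {self.confidence_threshold:.2f}"),
            "context": ai_decision.supporting_data,
        }
```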

2. Escalation Ladder Pattern

The system first tries a fast, inexpensive model and escalates to increasingly capable models only when the earlier one can’t resolve the case. Only cases that none of the models can resolve reach a human, so reviewers end up handling just the genuinely hard problems. This one surprised me when I first built it; the drop in human workload was dramatic.
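To make the ladder concrete, here is a minimal sketch. It assumes each tier is just a callable that returns an answer and a confidence score; `Tier`, `escalate`, the tier names, and the 0.8 acceptance bar are all hypothetical placeholders rather than any framework’s API.

```python
from dataclasses import dataclass
from typing import Callable, Tuple


@dataclass
class Tier:
    """One rung of the ladder: a handler returning (answer, confidence)."""
    name: str
    handler: Callable[[str], Tuple[str, float]]
    min_confidence: float = 0.8  # hypothetical acceptance bar for this tier


def escalate(case: str, tiers: list) -> dict:
    """Try tiers from cheapest to most capable; fall back to a human."""
    attempts = []
    for tier in tiers:
        answer, confidence = tier.handler(case)
        attempts.append((tier.name, answer, confidence))
        if confidence >= tier.min_confidence:
            return {"resolved_by": tier.name, "answer": answer, "attempts": attempts}
    # No model was confident enough: only now does a person get involved,
    # and they see every model's attempt as context.
    return {"resolved_by": "human", "answer": None, "attempts": attempts}


# Stand-in handlers; real tiers would call a small and a large model
small = Tier("small_model", lambda case: ("billing", 0.55))
large = Tier("large_model", lambda case: ("billing", 0.91))
print(escalate("ticket-123", [small, large])["resolved_by"])  # large_model
```

Ordering tiers by cost is the whole trick: the cheap model absorbs the easy cases, and the human sees the full trail of failed attempts instead of a bare ticket.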

3. Parallel Review Pattern

AI and humans process simultaneously, and the system compares outputs while flagging disagreements. This works especially well for training data generation and quality assurance, where you want a ground-truth signal.
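The comparison step of parallel review fits in a few lines. This sketch assumes AI and human labels for the same items arrive as dictionaries keyed by item ID; `compare_labels` is a hypothetical helper, and plain percent agreement is used for simplicity.

```python
def compare_labels(ai_labels: dict, human_labels: dict) -> dict:
    """Compare AI and human labels on the items both have processed."""
    shared = ai_labels.keys() & human_labels.keys()
    # Items where the two disagree are the ones worth a second look
    disagreements = sorted(i for i in shared if ai_labels[i] != human_labels[i])
    agreement = 1 - len(disagreements) / len(shared) if shared else None
    return {"agreement_rate": agreement, "flagged": disagreements}


ai = {"post1": "ok", "post2": "spam", "post3": "ok"}
human = {"post1": "ok", "post2": "ok", "post3": "ok"}
result = compare_labels(ai, human)
print(result["flagged"])  # ['post2']
```

The flagged IDs are the cases that need adjudication, and the agreement rate is a number you can trend over time.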

4. Post-Hoc Audit Pattern

AI acts on its own, but humans review a sample of decisions afterward. Although this doesn’t prevent individual errors, it catches systematic problems early — before they compound into something much worse.
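For post-hoc audits, the main engineering decision is how to pick the sample. One option, sketched here under the assumption that every decision has a stable ID, is hash-based sampling; `in_audit_sample` and the 2% default rate are hypothetical.

```python
import hashlib


def in_audit_sample(decision_id: str, rate: float = 0.02) -> bool:
    """Deterministically select roughly `rate` of decisions for human audit.

    Hashing the ID instead of calling random() means a given decision is
    always in or always out of the sample, so audits are reproducible.
    """
    digest = hashlib.sha256(decision_id.encode("utf-8")).digest()
    # The first 8 bytes of a SHA-256 digest behave like a uniform 64-bit integer
    value = int.from_bytes(digest[:8], "big")
    return value < rate * 2**64


auto_approved = [f"decision-{n}" for n in range(10_000)]
audit_queue = [d for d in auto_approved if in_audit_sample(d)]
print(len(audit_queue))
```

With a 2% rate the printed count should land near 200. Because selection depends only on the ID, an auditor can later re-derive exactly which decisions were supposed to be reviewed.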

Here’s how these patterns compare:

| Pattern | Latency Impact | Human Workload | Risk Reduction | Best For |
|---|---|---|---|---|
| Approval Gate | High | High | Very High | Healthcare, finance |
| Escalation Ladder | Medium | Low | High | Customer support, triage |
| Parallel Review | Low | Medium | High | Content moderation |
| Post-Hoc Audit | None | Low | Medium | Recommendations, search |

Notably, many production systems combine multiple patterns. A content moderation pipeline might use parallel review for flagged content and post-hoc audits for auto-approved content. Additionally, the Google Responsible AI Practices guide recommends layered approaches for complex systems — and in my experience, that advice holds up.

Building Decision Trees That Route to Humans Intelligently

The biggest mistake teams make with HITL? Routing too much to humans.

If your system sends everything for review, you’ve built an expensive inbox — not a safety net. Intelligent routing is what separates useful HITL systems from bureaucratic bottlenecks that everyone eventually learns to rubber-stamp.

Confidence-based routing is the simplest approach: set a threshold, route below it to humans. However, raw confidence scores from LLMs are notoriously unreliable, and this is one of those things that catches people off guard. You need calibrated confidence instead: a score where, of all the decisions scored around 0.9, roughly 90% actually turn out to be correct.

```python
class IntelligentRouter:
    def __init__(self):
        self.high_risk_categories = {"medical", "financial", "legal"}
        self.high_risk_threshold = 0.95  # stricter bar for high-risk domains
        self.confidence_threshold = 0.90
        self.ambiguity_threshold = 0.15

    def route(self, prediction):
        # High-risk categories must clear a stricter confidence bar
        if (prediction.category in self.high_risk_categories
                and prediction.confidence < self.high_risk_threshold):
            return "human_review"

        # Route ambiguous predictions: top two classes are too close to call
        top_two_diff = prediction.top_score - prediction.second_score
        if top_two_diff < self.ambiguity_threshold:
            return "human_review"

        # Route low confidence
        if prediction.confidence < self.confidence_threshold:
            return "human_review"

        return "auto_process"
```
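The router above compares `prediction.confidence` against fixed thresholds, which only works if that score is calibrated. One simple way to get there, swapped in here as an illustration rather than anything the router requires, is histogram binning: bucket historical raw scores, then replace each raw score with the observed accuracy of its bucket. The sketch assumes you have logged raw scores alongside whether each decision turned out to be correct; `HistogramCalibrator` is a hypothetical name.

```python
class HistogramCalibrator:
    """Map raw model scores to the observed accuracy of their score bin."""

    def __init__(self, n_bins: int = 10):
        self.n_bins = n_bins
        self.bin_accuracy = [None] * n_bins  # None = no data in that bin yet

    def _bin(self, score: float) -> int:
        # Scores are assumed to lie in [0, 1]; exactly 1.0 goes in the top bin
        return min(int(score * self.n_bins), self.n_bins - 1)

    def fit(self, scores, correct):
        """scores: raw confidences; correct: whether each decision was right."""
        totals = [0] * self.n_bins
        hits = [0] * self.n_bins
        for score, was_right in zip(scores, correct):
            b = self._bin(score)
            totals[b] += 1
            hits[b] += int(was_right)
        self.bin_accuracy = [h / t if t else None for h, t in zip(hits, totals)]
        return self

    def calibrate(self, score: float) -> float:
        accuracy = self.bin_accuracy[self._bin(score)]
        # Empty bin: no evidence either way, so fall back to the raw score
        return score if accuracy is None else accuracy


# Logged outcomes: the model said ~0.95 four times but was right only twice
raw = [0.95, 0.95, 0.95, 0.95, 0.55, 0.55]
right = [True, True, False, False, True, False]
calibrator = HistogramCalibrator().fit(raw, right)
print(calibrator.calibrate(0.97))  # 0.5
```

Feed the calibrated value, not the raw one, into the router’s thresholds. Binning needs a reasonable number of logged decisions per bin; isotonic regression (for example scikit-learn’s `IsotonicRegression`) is a common, smoother alternative.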

Beyond raw confidence, factor in these routing signals:

- Domain risk level — Medical decisions always get more scrutiny than product recommendations

- Input novelty — If the input looks unlike anything in your training data, route to a human

- Disagreement between models — Run two models and flag when they contradict each other

- User-reported issues — Prior complaints about similar cases should raise the confidence bar for auto-approval

- Regulatory requirements — Some decisions legally require human sign-off regardless of confidence
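One way to combine these signals is to collect every reason a case should go to a human rather than stopping at the first, so reviewers and auditors can see why it was routed. In this sketch the `Case` fields, the 0.05-per-complaint penalty, and the 0.7 novelty cutoff are hypothetical; novelty is assumed to be computed upstream, for example as distance from the training data.

```python
from dataclasses import dataclass

HIGH_RISK = {"medical", "financial", "legal"}


@dataclass
class Case:
    category: str
    confidence: float       # calibrated, 0-1
    novelty: float          # 0 = typical input, 1 = unlike the training data
    model_a_label: str
    model_b_label: str
    prior_complaints: int   # complaints about similar past cases
    requires_signoff: bool  # set by policy or regulation, never by the model


def routing_reasons(case: Case, base_threshold: float = 0.90) -> list:
    """Every reason this case needs a human; an empty list means auto-process."""
    reasons = []
    if case.requires_signoff:
        reasons.append("regulatory sign-off required")
    # Each prior complaint raises the confidence bar (capped at 0.99)
    threshold = min(base_threshold + 0.05 * case.prior_complaints, 0.99)
    if case.category in HIGH_RISK:
        threshold = max(threshold, 0.95)
    if case.confidence < threshold:
        reasons.append(f"confidence {case.confidence:.2f} < {threshold:.2f}")
    if case.novelty > 0.7:
        reasons.append("input looks out of distribution")
    if case.model_a_label != case.model_b_label:
        reasons.append("models disagree")
    return reasons


case = Case("financial", 0.93, 0.2, "approve", "approve", 0, False)
print(routing_reasons(case))  # ['confidence 0.93 < 0.95']
```

Returning the reasons instead of a bare boolean also gives you a free audit trail for later threshold tuning.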

The Microsoft Responsible AI Standard also provides genuinely useful guidelines for deciding when human oversight is required versus optional. It’s worth reading before you finalize your routing logic.

As a rule of thumb, a well-designed routing system sends roughly 5–15% of decisions to humans. Above 30%, your AI isn’t adding enough value. Below 2%, you’re probably missing critical edge cases. That range is narrow enough that hitting it takes real iteration.
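A practical way to land in that range is to work backwards from a target routing rate: take recent calibrated confidences and set the threshold at the matching quantile. A minimal sketch, assuming a 10% target (a hypothetical value inside the band above); both function names are illustrative.

```python
def threshold_for_target_rate(confidences, target_rate: float) -> float:
    """Pick a confidence threshold that routes about `target_rate` of cases.

    Cases with confidence strictly below the returned value go to a human.
    Ties at the cut point mean the realized rate can come out slightly lower.
    """
    if not confidences:
        raise ValueError("need at least one historical confidence")
    if not 0 < target_rate < 1:
        raise ValueError("target_rate must be between 0 and 1")
    ranked = sorted(confidences)
    k = int(len(ranked) * target_rate)  # how many cases we want routed
    return ranked[k]


def routing_rate(confidences, threshold: float) -> float:
    return sum(c < threshold for c in confidences) / len(confidences)


history = [i / 100 for i in range(100)]  # stand-in for last week's calibrated scores
t = threshold_for_target_rate(history, 0.10)
print(t, routing_rate(history, t))  # 0.1 0.1
```

Re-running this on fresh data is also a cheap health check: if the threshold needed to hold the target keeps drifting, the model or the input mix has probably changed.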

Real-World Use Cases: Healthcare, Finance, and Content Moderation

Theory is nice. Production is messy. Here’s how human-in-the-loop plays out across three industries where the stakes are genuinely high.

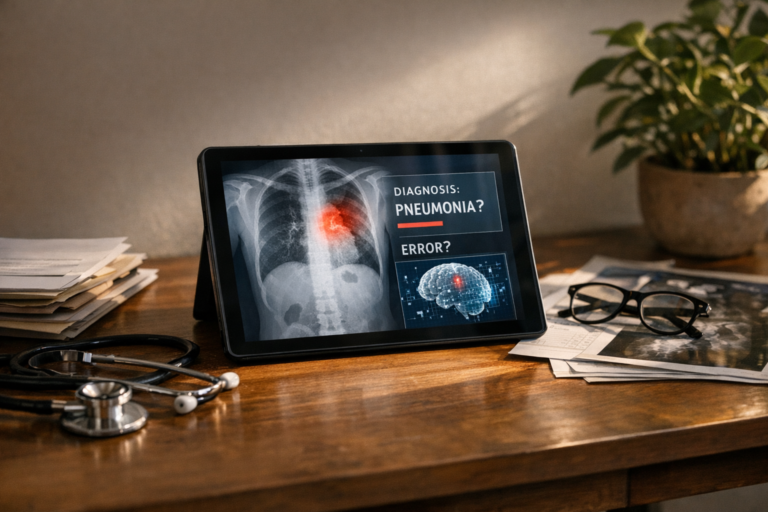

Healthcare: Radiology AI Triage

Radiology AI systems — including those built on frameworks from Google Health — don’t replace radiologists. Instead, they prioritize the reading queue. The AI scans images and flags urgent findings, but a radiologist still reviews every single image. Critical cases simply jump to the front of the line.

The HITL pattern here is a variant of the escalation ladder: urgency decides how quickly a study reaches a radiologist, not whether it does.

- AI scans the image and assigns urgency (low, medium, high, critical)

- Critical findings trigger an immediate alert to the on-call radiologist

- High-urgency cases get prioritized in the reading queue

- Low-urgency cases are read in standard order

- All AI assessments are logged for post-hoc audit
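The queue logic is easy to sketch with a heap: the AI’s urgency label sets reading order, arrival order breaks ties, and a critical finding also pages the on-call radiologist. This is an illustration under assumptions, not a clinical system. `ReadingQueue` and the `page_on_call` hook are hypothetical stand-ins, and nothing here removes a study from the radiologist’s queue.

```python
import heapq
import itertools

URGENCY_RANK = {"critical": 0, "high": 1, "medium": 2, "low": 3}


class ReadingQueue:
    """Every study is queued for a radiologist; AI urgency only sets the order."""

    def __init__(self, page_on_call):
        self._heap = []
        self._arrival = itertools.count()  # tie-breaker: first in, first read
        self._page_on_call = page_on_call  # hypothetical alerting hook
        self.audit_log = []                # every AI assessment, for post-hoc audit

    def add(self, study_id: str, ai_urgency: str) -> None:
        self.audit_log.append((study_id, ai_urgency))
        if ai_urgency == "critical":
            self._page_on_call(study_id)
        entry = (URGENCY_RANK[ai_urgency], next(self._arrival), study_id)
        heapq.heappush(self._heap, entry)

    def next_study(self) -> str:
        return heapq.heappop(self._heap)[2]


alerts = []
queue = ReadingQueue(page_on_call=alerts.append)
for sid, urgency in [("s1", "low"), ("s2", "critical"), ("s3", "high"), ("s4", "low")]:
    queue.add(sid, urgency)
print([queue.next_study() for _ in range(4)], alerts)  # ['s2', 's3', 's1', 's4'] ['s2']
```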

Importantly, the AI never makes a diagnosis — it speeds up the human’s workflow. That distinction matters for regulatory compliance, and it’s also just the right way to think about the problem.

Finance: Transaction Monitoring

Banks process millions of transactions daily. Anti-money laundering (AML) systems use AI to flag suspicious activity. Nevertheless, a human investigator must review flagged transactions before filing a Suspicious Activity Report (SAR). No shortcuts here — regulators are watching.

The typical flow:

- AI scores every transaction for risk (0–100)

- Scores above 80 go directly to a senior investigator

- Scores between 50–80 enter a standard review queue

- Scores below 50 are auto-cleared but sampled for audit

- Investigators can override AI scores in either direction
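The banding itself is only a few lines. In this sketch the band edges come from the list above (with the assumption that a score of exactly 80 lands in standard review), while the 1% audit sample for auto-cleared transactions and the function name are hypothetical.

```python
import random


def triage_transaction(score: int, rng: random.Random, audit_rate: float = 0.01) -> str:
    """Route a 0-100 AML risk score to the appropriate queue."""
    if not 0 <= score <= 100:
        raise ValueError("risk score must be between 0 and 100")
    if score > 80:
        return "senior_investigator"
    if score >= 50:
        return "standard_review"  # 50-80 inclusive
    # Auto-cleared, but a small random sample still goes to human audit
    return "audit_sample" if rng.random() < audit_rate else "auto_cleared"


rng = random.Random(7)  # seeded so audit sampling is reproducible
print(triage_transaction(92, rng), triage_transaction(65, rng))
# senior_investigator standard_review
```

Investigator overrides in either direction would be stored next to the AI’s route, so they can be measured and reused as training data.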

This lets investigators spend their time on the riskiest transactions, so the system can catch more fraud while cutting false positives. The human provides the judgment call that regulators require, and that the AI genuinely can’t replicate yet.

Content Moderation: Hybrid Review Pipeline

Social media platforms process billions of posts. Fully manual review is impossible. Fully automated review misses context, sarcasm, and cultural nuance in ways that create real PR disasters. Therefore, platforms use a hybrid approach — and it’s more carefully engineered than most people realize.

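```python
class ContentModerationPipeline:
    """Layered moderation: hash match, then AI classifier, then threshold routing.

    hash_match() and classify() are left to subclasses; classify() must return
    an object with a .violation_score between 0 and 1.
    """

    def hash_match(self, content):
        raise NotImplementedError  # lookup against hashes of known violations

    def classify(self, content):
        raise NotImplementedError  # AI classifier call

    def process(self, content):
        # Layer 1: Hash matching (known violations)
        if self.hash_match(content):
            return "auto_remove"

        # Layer 2: AI classification
        ai_result = self.classify(content)

        # Layer 3: Routing logic
        if ai_result.violation_score > 0.95:
            return "auto_remove_with_audit"
        elif ai_result.violation_score > 0.60:
            return "human_review_priority"
        elif ai_result.violation_score > 0.30:
            return "human_review_standard"
        else:
            return "auto_approve_with_sampling"
```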

Additionally, content moderation requires specialized HITL considerations that pure engineering teams often overlook. Reviewer well-being matters — rotating reviewers through difficult content categories helps prevent burnout and secondary trauma. That’s not a soft concern; it directly affects the accuracy of your labels.
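Rotation is simple to schedule mechanically. A minimal sketch, assuming shifts are discrete slots: a cyclic shift moves every reviewer to a different category each shift, so nobody sits on the same difficult queue two shifts in a row. `rotation_schedule` and the reviewer and category names are placeholders.

```python
def rotation_schedule(reviewers, categories, shifts: int) -> list:
    """Cyclic rotation: reviewer i covers categories[(i + shift) % len(categories)].

    With two or more categories, no reviewer covers the same category in two
    consecutive shifts.
    """
    schedule = []
    for shift in range(shifts):
        schedule.append({
            reviewer: categories[(i + shift) % len(categories)]
            for i, reviewer in enumerate(reviewers)
        })
    return schedule


team = ["ana", "ben", "chi"]
queues = ["graphic_violence", "hate_speech", "spam"]
for shift, plan in enumerate(rotation_schedule(team, queues, shifts=3)):
    print(shift, plan)
```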

Integrating HITL with Agentic AI and Workflow Tools

The newest challenge is integrating human oversight into AI agent workflows. Agents that can browse, write code, and take real-world actions need guardrails — and this is where I think human-in-the-loop becomes the most critical pattern of all, because the failure modes are genuinely scary.

Tools like LangChain and CrewAI already support human-in-the-loop interrupts. Whichever framework you use, the same principles make those interrupts effective.

Kanban-style task management works surprisingly well for HITL agent workflows. Each agent task moves through columns: Queued → AI Processing → Human Review → Approved → Executed. This gives teams visibility into what agents are doing and where human judgment is actually needed — which is harder to see than you’d expect.
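Those columns map naturally onto a small state machine that refuses to let a task skip the human step. A minimal sketch, assuming tasks move forward one column at a time; the enum mirrors the columns above, and the `REJECTED` state is an assumption added here because the board as described has no column for a reviewer saying no.

```python
from enum import Enum


class TaskState(Enum):
    QUEUED = "Queued"
    AI_PROCESSING = "AI Processing"
    HUMAN_REVIEW = "Human Review"
    APPROVED = "Approved"
    EXECUTED = "Executed"
    REJECTED = "Rejected"  # assumption: not one of the columns listed above


# Allowed moves. The only path to EXECUTED runs through HUMAN_REVIEW and APPROVED.
TRANSITIONS = {
    TaskState.QUEUED: {TaskState.AI_PROCESSING},
    TaskState.AI_PROCESSING: {TaskState.HUMAN_REVIEW},
    TaskState.HUMAN_REVIEW: {TaskState.APPROVED, TaskState.REJECTED},
    TaskState.APPROVED: {TaskState.EXECUTED},
    TaskState.EXECUTED: set(),
    TaskState.REJECTED: set(),
}


def move(current: TaskState, target: TaskState) -> TaskState:
    if target not in TRANSITIONS[current]:
        raise ValueError(f"illegal move: {current.value} -> {target.value}")
    return target


state = TaskState.QUEUED
for nxt in (TaskState.AI_PROCESSING, TaskState.HUMAN_REVIEW,
            TaskState.APPROVED, TaskState.EXECUTED):
    state = move(state, nxt)
print(state.value)  # Executed
```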

Key integration principles:

- Checkpoint before irreversible actions — Sending an email, making a purchase, or deleting data should always require approval

- Provide full context — Show the human what the agent did, why it decided that, and what alternatives it considered

- Set time limits — If a human doesn’t respond within a defined window, escalate or default to the safer option

- Log everything — Every human decision becomes training data for improving the AI’s future routing

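```python
import asyncio
import time


class AgentCheckpoint:
    def __init__(self, action_type, timeout_seconds=300):
        self.action_type = action_type
        self.timeout = timeout_seconds

    async def notify_human(self, approval_request):
        # Hypothetical hook: deliver the request (chat, dashboard, pager)
        # and return the reviewer's response object once they answer
        raise NotImplementedError

    async def request_approval(self, agent_context):
        # Give the reviewer everything needed to judge the proposed step
        approval_request = {
            "action": self.action_type,
            "agent_reasoning": agent_context.chain_of_thought,
            "proposed_action": agent_context.next_step,
            "alternatives": agent_context.alternative_actions,
            "risk_assessment": agent_context.risk_score,
            "deadline": time.time() + self.timeout,
        }

        try:
            # Enforce the deadline here instead of trusting the notifier to do it
            response = await asyncio.wait_for(
                self.notify_human(approval_request), timeout=self.timeout
            )
        except asyncio.TimeoutError:
            return "default_safe_action"  # no answer in time: take the safe path

        if response is None:  # notifier gave up without a decision
            return "default_safe_action"
        return response.decision
```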

For voice agents specifically, latency matters enormously. You can’t pause a phone conversation for five minutes while waiting for human approval. Instead, you can set up “warm handoff” patterns, where the AI agent transfers the caller to a human mid-conversation when its confidence drops. I’ve seen this work really well when it’s built thoughtfully.
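The trigger for a warm handoff can be as simple as a rolling average over recent turns. A minimal sketch, assuming the voice stack produces a per-turn confidence score; `HandoffMonitor`, the three-turn window, and the 0.6 floor are hypothetical.

```python
from collections import deque


class HandoffMonitor:
    """Signal a transfer to a human when recent turn confidence sags."""

    def __init__(self, window: int = 3, floor: float = 0.6):
        self.recent = deque(maxlen=window)  # only the last `window` turns count
        self.floor = floor

    def observe(self, turn_confidence: float) -> bool:
        """Record one turn; return True when the call should be handed off."""
        self.recent.append(turn_confidence)
        if len(self.recent) < self.recent.maxlen:
            return False  # not enough turns yet to judge a trend
        return sum(self.recent) / len(self.recent) < self.floor


monitor = HandoffMonitor()
turns = [0.9, 0.8, 0.5, 0.4, 0.45]
print([monitor.observe(c) for c in turns])  # [False, False, False, True, True]
```

Averaging keeps a single shaky turn from bouncing the caller to a human; an explicit request to speak to a person should bypass this check entirely.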

Furthermore, the OpenAI Safety Best Practices documentation recommends output filtering and human review for any customer-facing AI application. It’s worth reading before you deploy anything public-facing.

Measuring Success: Metrics That Matter for HITL Systems

You can’t improve what you don’t measure.

With human-in-the-loop systems, the temptation is to measure only the AI’s performance — which misses half the picture. You need to measure the whole system, including the human side.

Track these metrics:

- Routing accuracy — What percentage of human-routed cases actually needed human intervention?

- Override rate — How often do humans change the AI’s recommendation?

- Time to resolution — How long do cases wait in the human review queue?

- Automation rate — What percentage of total decisions are handled without human involvement?

- Error rate by path — Compare error rates for auto-processed versus human-reviewed decisions

- Reviewer agreement — When two humans review the same case, how often do they agree?
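Most of these fall out of a decision log. A minimal sketch, assuming each logged decision records its path, the AI’s label, the final label, whether a later audit found it wrong, and queue time for human-reviewed cases; `LoggedDecision` and its fields are hypothetical. Routing accuracy and reviewer agreement need extra data (a reviewer’s judgment on whether routing was necessary, and double-reviewed cases), so they are left out here.

```python
from dataclasses import dataclass
from statistics import median
from typing import Optional


@dataclass
class LoggedDecision:
    path: str                             # "auto" or "human"
    ai_label: str
    final_label: str                      # after any human review
    was_error: bool                       # from audit or later ground truth
    wait_seconds: Optional[float] = None  # queue time, human path only


def hitl_metrics(log) -> dict:
    if not log:
        raise ValueError("empty decision log")
    human = [d for d in log if d.path == "human"]
    auto = [d for d in log if d.path == "auto"]

    def error_rate(decisions):
        return sum(d.was_error for d in decisions) / len(decisions) if decisions else None

    overrides = sum(d.final_label != d.ai_label for d in human)
    waits = [d.wait_seconds for d in human if d.wait_seconds is not None]
    return {
        "automation_rate": len(auto) / len(log),
        "override_rate": overrides / len(human) if human else None,
        "error_rate_auto": error_rate(auto),
        "error_rate_human": error_rate(human),
        "median_wait_seconds": median(waits) if waits else None,
    }


log = [
    LoggedDecision("auto", "ok", "ok", was_error=False),
    LoggedDecision("auto", "ok", "ok", was_error=True),
    LoggedDecision("human", "ok", "remove", was_error=False, wait_seconds=120),
    LoggedDecision("human", "remove", "remove", was_error=False, wait_seconds=300),
]
print(hitl_metrics(log))
```

On this toy log the automation, override, and auto-path error rates all come out at 0.5, the human-path error rate is 0.0, and the median wait is 210 seconds.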

Additionally, watch for these warning signs:

- Rising override rates suggest your model is degrading or hitting distribution shift

- Growing queue times mean you need more reviewers or better routing — one of these is much cheaper to fix than the other

- Low routing rates with high error rates mean your thresholds are too loose

- Reviewer fatigue patterns — accuracy drops measurably after long review sessions, and most teams don’t track this until it’s already a problem
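The first warning sign is easy to automate: compare the override rate in a recent window against a baseline and alert only when the gap is larger than chance would explain. This sketch swaps in a standard one-sided two-proportion z-test for that comparison; the 3.0 cutoff and the function name are assumptions, and a real deployment would also want a minimum sample size per window.

```python
import math


def override_rate_alert(baseline_overrides: int, baseline_reviews: int,
                        recent_overrides: int, recent_reviews: int,
                        z_cutoff: float = 3.0) -> bool:
    """Two-proportion z-test: is the recent override rate significantly higher?"""
    p_base = baseline_overrides / baseline_reviews
    p_recent = recent_overrides / recent_reviews
    pooled = (baseline_overrides + recent_overrides) / (baseline_reviews + recent_reviews)
    se = math.sqrt(pooled * (1 - pooled) * (1 / baseline_reviews + 1 / recent_reviews))
    if se == 0:
        return False  # no variation at all, e.g. zero overrides everywhere
    return (p_recent - p_base) / se > z_cutoff


# Baseline 8% overrides; recent window jumps to 15%
print(override_rate_alert(80, 1000, 45, 300))  # True
```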

Notably, the best teams treat human decisions as training signals from day one. Every time a reviewer overrides the AI, that becomes a labeled example for model improvement. Consequently, the system gets smarter over time and routes fewer cases to humans — which is the whole point. That compounding effect is, honestly, the most underrated benefit of building HITL properly.
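Turning review decisions into training data is mostly bookkeeping. A minimal sketch, assuming each review record holds the model input, the AI’s label, and the reviewer’s label (the `Review` fields are hypothetical); overrides are kept and flagged so they can be up-weighted or inspected separately.

```python
from dataclasses import dataclass


@dataclass
class Review:
    input_text: str
    ai_label: str
    human_label: str


def to_training_examples(reviews) -> list:
    """Use the human's label as ground truth; mark where it overrode the AI."""
    return [
        {
            "text": r.input_text,
            "label": r.human_label,
            "is_override": r.human_label != r.ai_label,
        }
        for r in reviews
    ]


reviews = [Review("refund request", "billing", "billing"),
           Review("chargeback threat", "billing", "fraud")]
examples = to_training_examples(reviews)
print([e["is_override"] for e in examples])  # [False, True]
```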

Conclusion

After building and studying these systems for a decade, I genuinely believe human-in-the-loop is one of the most important design patterns in modern AI engineering. It’s not a temporary fix while models improve. It’s a permanent architectural choice for any high-stakes AI system — and the teams ignoring it are building up risk they can’t see yet.

Here are your actionable next steps:

- Audit your current AI systems — Identify every decision point where errors could cause real harm

- Choose your pattern — Match approval gates, escalation ladders, parallel review, or post-hoc audits to each decision point

- Build intelligent routing — Don’t send everything to humans; use confidence, risk level, and novelty signals

- Instrument everything — Track override rates, queue times, and automation rates from day one

- Create feedback loops — Use human decisions to retrain and improve your models continuously

The teams that treat human-in-the-loop as a core design principle — not an afterthought — will build AI systems that are faster, safer, and more trustworthy. Start with the highest-risk decision in your pipeline. Add a human checkpoint. Measure the results. Then expand from there.

FAQ

What exactly is a human-in-the-loop AI system?

A human-in-the-loop (HITL) AI system includes structured checkpoints where a person reviews, approves, or overrides AI decisions. The AI handles routine processing automatically. However, at critical points, the system pauses and routes to a human for judgment. This pattern balances automation speed with human accountability — and it’s specifically that balance that makes it worth the added complexity.

How does human-in-the-loop differ from human-on-the-loop?

Human-in-the-loop means a person actively takes part in each decision cycle. Human-on-the-loop means a person monitors the system and can step in but doesn’t review every decision. Human-out-of-the-loop means fully autonomous operation. Most production systems use a mix, auto-processing low-risk decisions while keeping humans in the loop for high-risk ones. The tricky part is drawing that line correctly.

Won’t human-in-the-loop slow down my AI system?

It depends entirely on your implementation. Approval gates add latency — that’s unavoidable, and anyone who tells you otherwise is selling something. Nevertheless, smart routing cuts the impact significantly. If you’re only routing 5–10% of decisions to humans, overall system throughput stays high. Additionally, patterns like post-hoc audits add zero latency to the primary decision path. The key is matching the right pattern to your actual latency requirements.

What tools support building human-in-the-loop workflows?

Several frameworks support HITL natively. LangChain and LangGraph offer human interrupt nodes for agent workflows, and CrewAI supports human input tasks. Beyond agent frameworks, workflow tools like Temporal and Apache Airflow can model approval gates as workflow steps. For annotation and review interfaces, tools like Label Studio and Prodigy offer ready-made review UIs. Fair warning: UI quality matters more than most engineers expect, because bad tooling creates reviewer fatigue fast.

How do I decide which AI decisions need human oversight?

Start with a risk assessment. Ask three questions: What’s the worst outcome if the AI is wrong? Is the decision reversible? Are there regulatory requirements for human review? Importantly, any irreversible action with significant consequences should include human-in-the-loop oversight. Financial transactions, medical recommendations, and content removal are the classic examples — and notably, that list is only going to grow as AI systems take on more real-world actions.

How do I prevent reviewer fatigue in human-in-the-loop systems?

Reviewer fatigue is a real problem, especially in content moderation, and it’s one of the most underinvested areas in HITL system design. Rotate reviewers across categories regularly and set maximum review session lengths (for example, 90 minutes before a mandatory break). Furthermore, provide clear decision guidelines and calibration exercises so reviewers aren’t constantly second-guessing themselves. Track accuracy over time to catch fatigue patterns before they affect your labels. Most importantly, invest in good tooling that surfaces relevant context so reviewers can make fast, confident decisions, because slow, uncertain reviews are where quality falls apart.