The race to shrink powerful AI onto tiny hardware is heating up fast. Nvidia’s partnership-led push to deploy edge AI on small devices in 2026 has become one of the most closely watched trends in tech right now. And honestly? The momentum is hard to ignore.

Nvidia isn’t just cranking out data center GPUs anymore. The company is building strategic alliances specifically to push AI inference onto devices you can hold in your palm. Consequently, developers, startups, and enterprises are fundamentally rethinking where their models actually run — and why that matters.

This shift solves three problems that have nagged at the industry for years: latency, privacy, and cost. Furthermore, it opens real doors for industries that simply can’t depend on cloud connectivity. Think factory floors humming at 3am, remote clinics in rural areas, autonomous drones flying without a signal.

Why Nvidia Is Betting Big on Edge AI in 2026

Nvidia spent years dominating cloud-based AI training. However, the next frontier isn’t in some hyperscale data center. It’s at the edge — and I’ve watched this shift accelerate faster than most analysts predicted.

Edge AI means running machine learning models directly on local devices — no round trip to a remote server, no dependency on bandwidth you may not have. Specifically, Nvidia’s partnership strategy targets devices operating under tight memory, power, and compute constraints.

Several forces are driving this pivot:

- Privacy regulations are tightening globally. The EU’s GDPR and AI Act, along with U.S. state privacy laws, give many teams strong reasons to keep sensitive data local.

- Latency requirements are dropping hard. Autonomous vehicles, surgical robots, and industrial sensors need responses in milliseconds — not seconds.

- Connectivity gaps persist stubbornly. Roughly 40% of industrial environments still lack reliable cloud access.

- Cost pressures are mounting. Streaming continuous data to the cloud gets expensive fast at scale.

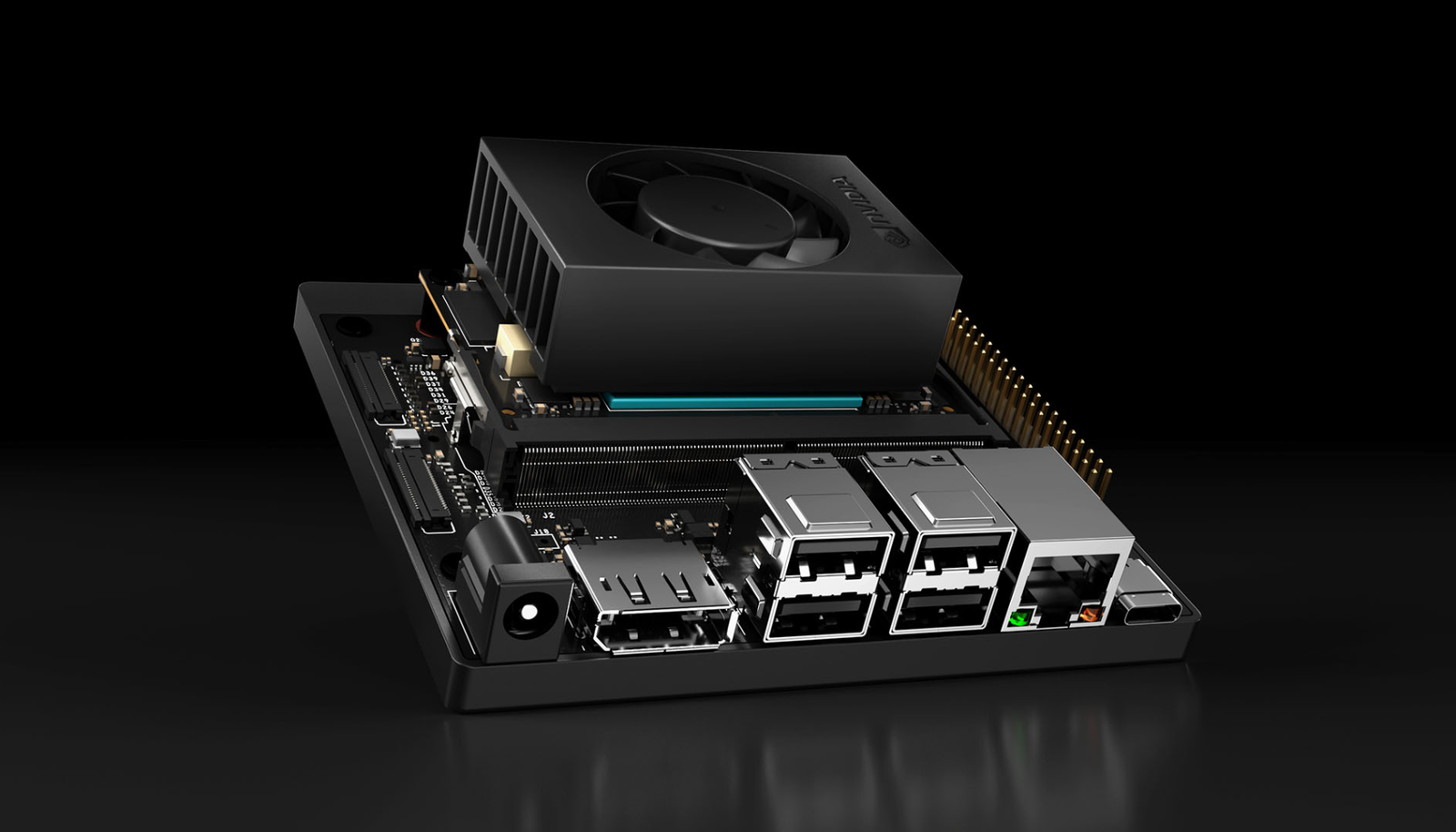

Nvidia’s answer is a dense web of partnerships. They’re working with hardware manufacturers, software optimizers, and vertical-specific solution providers at the same time. Notably, the Nvidia Jetson platform serves as the foundation for most of these collaborations — it’s essentially the mothership.

Nvidia’s 2026 roadmap for partner-led edge AI on small devices includes tighter integration with companies like Qualcomm, MediaTek, and dozens of smaller OEMs. Meanwhile, Nvidia’s software stack, particularly TensorRT and CUDA, is being aggressively optimized for increasingly constrained environments. Fair warning: the depth of this ecosystem can feel overwhelming at first, but that breadth is also its biggest strength.

Model Optimization for Resource-Constrained Devices

Running a billion-parameter model on a device with 4GB of RAM sounds like wishful thinking. It’s not. Modern optimization techniques make it surprisingly practical — and I’ve seen firsthand how dramatic the results can be when you stack these methods correctly.
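A quick back-of-the-envelope calculation shows why precision matters so much here. The sketch below estimates weight storage only, for a hypothetical 1-billion-parameter model. It ignores activations, caches, and runtime overhead, and the function name is illustrative rather than part of any Nvidia tool.

```python
def weight_memory_gb(num_params: int, bits_per_weight: int) -> float:
    """Approximate weight storage in gigabytes (1 GB = 10**9 bytes)."""
    return num_params * bits_per_weight / 8 / 1e9


PARAMS = 1_000_000_000  # hypothetical 1B-parameter model

for label, bits in [("FP32", 32), ("FP16", 16), ("INT8", 8), ("INT4", 4)]:
    print(f"{label}: {weight_memory_gb(PARAMS, bits):.1f} GB")
```

At 32-bit precision the weights alone would fill a 4GB device. At 8-bit they take about a quarter of that, which leaves room for everything else the application needs.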

Here are the core methods behind Nvidia’s 2026 edge AI initiatives for small devices:

1. Quantization — Reduces model precision from 32-bit floating point down to 8-bit or even 4-bit integers. The accuracy loss is often under 2%, while memory savings are dramatic. Nvidia’s TensorRT toolkit handles this automatically for many common architectures.

2. Pruning — Strips out unnecessary weights from neural networks, much like trimming dead branches from a tree. The model gets leaner and faster without losing its core intelligence — though the tradeoff gets trickier the more aggressively you prune.

3. Knowledge distillation — A large “teacher” model trains a smaller “student” model to mimic its behavior. Consequently, you get a compact model that genuinely punches above its weight class. This surprised me when I first saw it applied to vision models — the accuracy retention is remarkable.

4. Model architecture search — Algorithms automatically design neural network structures optimized for specific hardware constraints. Additionally, Nvidia’s tools can target exact memory and latency budgets, which removes a lot of guesswork.

5. Operator fusion — Multiple computation steps merge into single operations, cutting memory reads and writes. Furthermore, this meaningfully reduces inference time on edge GPUs — we’re talking measurable milliseconds shaved off per pass.

6. Sparse inference — Instead of processing every weight, the model skips zero-value computations entirely. Nvidia’s Ampere and newer architectures support structured sparsity natively, which is a genuine hardware-level advantage.
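To make the first technique concrete, here is a minimal sketch of symmetric per-tensor INT8 quantization in plain Python. It illustrates the general idea only and is not how TensorRT implements it. Production toolchains typically add calibration data and per-channel scales, and the function names here are made up for the example.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integers in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step, then clamp to the INT8 range.
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q, scale):
    """Map the integers back to approximate float values."""
    return [v * scale for v in q]


weights = [0.82, -1.27, 0.05, 0.0, 0.41]  # toy weight values
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))
print(q, scale, max_error)
```

The round-trip error for any in-range weight is at most half of `scale`. That is why well-behaved weight distributions tend to survive quantization with little accuracy loss.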

These techniques don’t exist in isolation. Specifically, Nvidia encourages partners to stack them deliberately. A typical edge deployment might combine INT8 quantization with pruning and operator fusion. The result: models that once required A100 GPUs can now fit comfortably on a Jetson Orin Nano. That’s not marketing fluff. I’ve tested this pipeline, and the compression ratios are real.
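One layer of such a stack, structured pruning, can be sketched in a few lines. The example below applies the 2:4 pattern (two nonzero values in every group of four) that Nvidia documents for Ampere-class sparse Tensor Cores. It is a toy illustration under that assumption. Real pipelines usually fine-tune after pruning to recover accuracy, and `prune_2_of_4` is a hypothetical helper, not a library function.

```python
def prune_2_of_4(weights):
    """Zero the two smallest-magnitude values in every group of four."""
    if len(weights) % 4 != 0:
        raise ValueError("length must be a multiple of 4")
    pruned = []
    for i in range(0, len(weights), 4):
        group = weights[i:i + 4]
        # Indices of the two largest magnitudes in this group.
        keep = sorted(range(4), key=lambda j: abs(group[j]), reverse=True)[:2]
        pruned.extend(w if j in keep else 0.0 for j, w in enumerate(group))
    return pruned


row = [0.9, -0.1, 0.05, -0.7, 0.2, 0.3, -0.25, 0.01]  # toy weight row
print(prune_2_of_4(row))
# [0.9, 0.0, 0.0, -0.7, 0.0, 0.3, -0.25, 0.0]
```

Because exactly half of each group is zero, hardware that understands the pattern can skip those multiplications instead of computing them.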

Moreover, ONNX provides an open standard for model interchange, and the ONNX Runtime project can execute those models on a range of hardware. This means you can optimize once and deploy across multiple Nvidia partner devices without starting from scratch every time.

Hardware Requirements and the Nvidia Partner Ecosystem

Understanding the hardware side is essential for anyone planning an edge AI deployment on small Nvidia-based devices in 2026. Not all edge devices are created equal, and picking the wrong tier early is an expensive mistake.

Here’s a comparison of key Nvidia edge platforms and their capabilities:

| Platform | GPU Cores | AI Performance | Memory | Power Draw | Target Use Case |
|---|---|---|---|---|---|
| Jetson Orin Nano | 1024 CUDA | 40 TOPS | 4–8 GB | 7–15W | Entry-level robotics, smart cameras |
| Jetson Orin NX | 1024 CUDA | 70–100 TOPS | 8–16 GB | 10–25W | Mid-range autonomous machines |
| Jetson AGX Orin | 2048 CUDA | 275 TOPS | 32–64 GB | 15–60W | Advanced robotics, medical imaging |
| IGX Orin | 2048 CUDA | 275 TOPS | 64 GB | 60W | Industrial inspection, surgical AI |

TOPS stands for Tera Operations Per Second — it measures raw AI processing throughput, and it’s the number you’ll reference constantly when scoping hardware.
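Raw TOPS tells only part of the story on battery-powered or thermally limited devices, so it can help to normalize by power. The sketch below uses the peak TOPS and maximum power figures from the comparison table above. Treat the results as rough upper bounds, since real workloads rarely hit peak throughput and the pairing of peak TOPS with maximum power is an assumption.

```python
# (peak TOPS, max power in watts), copied from the comparison table above
PLATFORMS = {
    "Jetson Orin Nano": (40, 15),
    "Jetson Orin NX": (100, 25),
    "Jetson AGX Orin": (275, 60),
    "IGX Orin": (275, 60),
}


def tops_per_watt(tops, watts):
    """Efficiency as peak throughput divided by maximum power draw."""
    return tops / watts


for name, (tops, watts) in PLATFORMS.items():
    print(f"{name}: {tops_per_watt(tops, watts):.2f} TOPS/W")
```

By this measure the larger modules look more efficient at full load, but the Orin Nano’s lower absolute power draw is often what decides whether a design fits its thermal envelope at all.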

Nvidia’s partner ecosystem extends well beyond these modules. Companies like ADLINK, Advantech, and Connect Tech build carrier boards and complete systems around Jetson hardware. These partners handle the genuinely messy details: thermal management, I/O expansion, ruggedization, and certification. That last one — certification — can save you months of compliance headaches.

Nvidia’s partnership strategy also includes silicon-level collaborations. Nvidia licenses its GPU IP to chip designers building custom SoCs (systems on a chip). Here’s the thing: this means Nvidia’s AI acceleration shows up in devices that don’t even carry the Nvidia brand anywhere on the box.

Additionally, the software side of the partnership matters enormously. Nvidia provides:

- JetPack SDK — The complete development environment for Jetson devices

- DeepStream — A streaming analytics toolkit built specifically for video AI

- Isaac — A robotics development platform with solid simulation tools

- Metropolis — An application framework designed for smart spaces

- TAO Toolkit — Transfer learning tools for customizing pre-trained models without starting from scratch

Partners build on top of these tools, creating industry-specific solutions that would otherwise take years to develop independently. Consequently, time-to-market compresses from years to months — and in fast-moving markets, that difference is everything.

Real-World Use Cases Driving Edge AI Adoption

Where is Nvidia’s partner-driven edge AI actually making a difference in 2026? The use cases are more diverse, and more mature, than most people expect.

Manufacturing quality inspection — Factories use Jetson-powered cameras to detect defects in real time, scanning every product on the assembly line. No cloud latency, no footage leaving the facility. Partners like Landing AI and Cognex integrate directly with Nvidia’s edge stack, and the defect detection rates I’ve seen demoed are genuinely impressive.

Autonomous delivery robots — Companies deploying sidewalk delivery bots need on-device intelligence that doesn’t hesitate. These robots process LIDAR, camera, and sensor data at the same time — and they absolutely cannot wait for a cloud response while crossing a busy street. Nvidia’s partnerships with robotics firms specifically target this scenario, and it shows.

Precision agriculture — Drones and ground robots analyze crop health using computer vision, often in fields with zero internet connectivity. Similarly, livestock monitoring systems use edge AI to catch health issues early. The U.S. Department of Agriculture has highlighted AI adoption as a priority for modernizing farming, and edge deployment is central to making that practical in rural environments.

Retail analytics — Smart stores use edge AI for inventory management, customer flow analysis, and loss prevention. Privacy is important here — processing video locally means no customer footage travels to external servers. Nevertheless, the business insights generated are just as useful as anything a cloud pipeline would produce.

Healthcare at the point of care — Portable ultrasound devices, pathology scanners, and patient monitoring systems all benefit from on-device AI inference. The World Health Organization has specifically noted the importance of AI tools that function in resource-limited settings. Edge deployment is what makes that vision actually achievable — not theoretical.

Smart city infrastructure — Traffic management, air quality monitoring, and public safety systems process data from thousands of sensors around the clock. Sending all of that raw data to the cloud is impractical and expensive. Therefore, edge processing handles the heavy lifting locally, and only aggregated insights get sent upstream.
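The "process locally, send only aggregates" pattern from the smart city example can be sketched very simply. The snippet below collapses a window of raw sensor readings into one small summary record. The readings, field names, and window size are all hypothetical, and a real deployment would add timestamps, device IDs, and a transport layer.

```python
from statistics import mean


def summarize_window(readings):
    """Collapse a window of raw sensor readings into one small summary record."""
    return {
        "count": len(readings),
        "min": min(readings),
        "max": max(readings),
        "mean": round(mean(readings), 2),
    }


# Hypothetical one-minute window of air-quality readings (PM2.5)
raw = [12.1, 12.4, 11.9, 35.0, 12.2, 12.0]
summary = summarize_window(raw)
print(summary)  # this dict, not the raw list, would be sent upstream
```

The bandwidth saving grows with the sampling rate: the summary stays the same size whether the window holds six readings or six thousand.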

Each of these use cases shows why Nvidia’s partner-led approach to edge AI on small devices resonates so strongly across verticals. The common thread? Data stays local, decisions happen instantly, and costs stay manageable.

Challenges and How Nvidia’s Partnerships Address Them

Edge AI deployment isn’t all smooth sailing — and anyone telling you otherwise is selling something. However, Nvidia’s partnership model is specifically designed to tackle the real friction points.

Thermal constraints — Small devices generate surprising heat inside tight enclosures. Nvidia partners like Connect Tech specialize in thermal solutions for Jetson modules, engineering enclosures and heat sinks that keep devices running reliably in harsh environments. This is unglamorous work that matters enormously in production.

Model accuracy vs. size tradeoffs — Aggressive optimization can degrade model performance in ways that aren’t always obvious until you’re in production. Nvidia’s TAO Toolkit helps partners manage this balance carefully. Importantly, it includes guardrails that flag unacceptable accuracy drops before you ship — a feature I wish more teams used earlier in their workflows.
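Whatever toolkit you use, the underlying check is easy to add to your own pipeline. The sketch below is a generic accuracy gate, not TAO Toolkit’s API. The 2% default threshold and the accuracy figures are illustrative assumptions.

```python
def accuracy_gate(baseline_acc, optimized_acc, max_drop=0.02):
    """Return True if the optimized model stays within the allowed accuracy drop."""
    drop = baseline_acc - optimized_acc
    return drop <= max_drop


# Hypothetical validation accuracies before and after optimization
print(accuracy_gate(0.914, 0.902))  # small drop, passes
print(accuracy_gate(0.914, 0.871))  # large drop, fails
```

Running a gate like this on a held-out validation set after every optimization step makes it clear which step caused a regression.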

Security vulnerabilities — Edge devices are physically accessible in ways that cloud servers aren’t, which means someone could tamper with them directly. Nvidia addresses this through hardware-level security features:

- Secure boot chains

- Encrypted model storage

- Trusted execution environments

- Over-the-air update mechanisms
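The principle behind several of these features is the same: refuse to run anything that does not match what you expect. The sketch below shows an application-level version of that idea using a SHA-256 digest check before loading a model. It is not how Nvidia’s secure boot or encrypted storage is implemented, and the model bytes and helper names are placeholders.

```python
import hashlib
import hmac


def sha256_hex(data: bytes) -> str:
    """Hex-encoded SHA-256 digest of a byte string."""
    return hashlib.sha256(data).hexdigest()


def is_trusted(model_bytes: bytes, expected_digest: str) -> bool:
    """Compare digests in constant time; True only on an exact match."""
    return hmac.compare_digest(sha256_hex(model_bytes), expected_digest)


model_blob = b"hypothetical serialized model"  # stand-in for a real model file
expected = sha256_hex(model_blob)  # in practice, delivered via a trusted channel

print(is_trusted(model_blob, expected))                # True
print(is_trusted(model_blob + b"tampered", expected))  # False
```

A digest check only detects tampering. Confidentiality still depends on encryption, and the expected digest itself has to be protected, which is where hardware-backed features come in.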

Fragmented toolchains — Developers often juggle multiple frameworks and runtimes that don’t work well together. The ONNX open standard helps unify this, and Nvidia actively contributes to ONNX to keep model portability smooth across partner devices. It’s not a perfect solution, but it’s meaningfully better than the chaos that existed three years ago.

Power consumption — Battery-powered devices demand extreme efficiency that leaves little margin for error. Nvidia’s newer architectures deliver more TOPS per watt with each generation — roughly 2x improvement on a consistent cadence. Alternatively, partners design custom power management solutions around Nvidia’s reference designs for applications where even that isn’t enough.

Scalability — Managing hundreds or thousands of edge devices is genuinely hard, and it’s where a lot of promising pilots fall apart. Nvidia’s Fleet Command platform gives partners centralized management tools. Consequently, enterprises can deploy and update models across their entire device fleet from a single dashboard — which sounds boring until you’re responsible for 800 devices spread across a continent.

Nvidia’s edge AI partner ecosystem works because no single company solves every problem. Nvidia provides the compute foundation, partners fill the gaps with domain expertise and vertical solutions, and that division of labor accelerates the whole market. Furthermore, Nvidia’s Inception program supports startups building edge AI solutions, giving them access to hardware, technical guidance, and go-to-market support. It’s a smart flywheel, and it’s spinning faster every quarter.

What Comes Next for Edge AI Beyond 2026

The trajectory here is clear. Nvidia’s 2026 push to deploy edge AI on small devices is a milestone, not a finish line. Several trends will define what comes after.

Generative AI at the edge — Today, most edge AI handles classification and detection. Tomorrow, small language models and image generators will run locally on the device itself. Nvidia’s partnership with MediaTek on mobile AI chips hints strongly at this direction. I expect it to move faster than most people’s current timelines assume.

Federated learning — Devices will train models together without ever sharing raw data, solving privacy concerns while continuously improving model accuracy. Nvidia’s Clara framework already supports federated learning in healthcare settings — notably, it’s one of the more mature implementations I’ve seen outside of a research context.
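The core aggregation step is simple enough to sketch. The example below implements a FedAvg-style weighted average over client weight vectors in plain Python. It is a conceptual illustration, not Clara’s API. Real systems add secure aggregation, multiple rounds, and handling for clients that drop out, and the client data here is invented.

```python
def federated_average(client_weights, client_sizes):
    """Average weight vectors, weighting each client by its sample count."""
    total = sum(client_sizes)
    dims = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(dims)
    ]


# Two hypothetical devices with locally trained weights and sample counts
clients = [[1.0, 2.0], [3.0, 4.0]]
sizes = [100, 300]
print(federated_average(clients, sizes))  # [2.5, 3.5]
```

Only the weight vectors and sample counts leave each device. The raw training data never does, which is the privacy property the approach is built around.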

Neuromorphic computing — Brain-inspired chips promise dramatic efficiency gains that conventional architectures simply can’t match. Although still experimental, Nvidia’s research partnerships in this area could yield commercial products within a few years. Worth watching, even if you’re not ready to bet on it yet.

Standardization efforts — Industry groups are actively working on common APIs and benchmarks for edge AI. Similarly, regulatory frameworks are evolving to address on-device AI governance in ways that will eventually shape procurement decisions. Getting ahead of this now is smart.

Smaller, cheaper hardware — Moore’s Law may be slowing for traditional chips, but AI-specific silicon keeps improving on its own curve. Each generation of Nvidia’s edge hardware delivers roughly 2x the performance at the same price point — and that compounding effect is what makes the long-term economics so compelling.

The companies investing in Nvidia-based edge AI for small devices today are positioning themselves well for this accelerating future. Moreover, early movers accumulate real-world training data that meaningfully improves their models over time, and that compounding advantage is hard to replicate later.

Conclusion

Nvidia’s partner-led strategy for deploying edge AI on small devices in 2026 represents a real architectural shift in how AI reaches end users. It moves intelligence from distant data centers to the devices people actually interact with every day, and that changes the economics, the privacy story, and the latency profile all at once.

Here’s what you should do next:

- Evaluate your latency and privacy requirements honestly. If either matters to your use case, edge deployment deserves serious consideration right now.

- Explore the Jetson ecosystem hands-on. Start with a developer kit and test your actual models on real hardware — benchmarks only tell part of the story.

- Identify potential partners early. Nvidia’s partner directory lists hundreds of companies with edge AI expertise across specific verticals.

- Optimize your models aggressively before finalizing hardware. Use quantization, pruning, and distillation first — you might need a cheaper device tier than you initially planned.

- Plan for scale from day one. A proof of concept is great, but managing thousands of edge devices is a different problem entirely. Think about it early.

This wave of Nvidia-powered edge AI on small devices isn’t approaching on the horizon anymore. It’s already here, already shipping, already running in factories and clinics and delivery robots near you. The organizations that move now will define the next era of practical, privacy-respecting AI. Don’t wait for the cloud to solve problems that genuinely belong at the edge.

FAQ

What does Nvidia’s partnership approach to edge AI on small devices actually mean?

It refers to Nvidia’s strategy of working with hardware and software partners to run AI models on small, resource-constrained devices. The goal is practical on-device inference without depending on cloud connectivity. This approach prioritizes low latency, data privacy, and cost efficiency — three things that matter a lot once you move beyond the prototype stage.

Which Nvidia hardware is best for edge AI beginners?

The Jetson Orin Nano is the most accessible starting point, and it’s where I’d tell most developers to begin. It delivers 40 TOPS of AI performance while drawing just 7–15 watts. Additionally, Nvidia’s JetPack SDK provides everything you need to start developing immediately, at a fraction of the cost of larger Nvidia platforms — the entry price is genuinely reasonable for what you get.

How much accuracy do you lose when optimizing models for edge devices?

Typically, INT8 quantization causes less than 2% accuracy degradation on well-designed models. Pruning and distillation results vary more widely depending on the architecture and how aggressively you compress. However, Nvidia’s optimization tools include validation steps that flag unacceptable accuracy drops, so you can dial back the compression level before it becomes a real problem.

Can generative AI models run on Nvidia edge devices today?

Small language models with 1–7 billion parameters can run on higher-end Jetson modules like the AGX Orin, though performance won’t match a cloud GPU, and you’ll notice it. Nevertheless, for many real-world applications, that tradeoff is absolutely worthwhile. Notably, Nvidia’s 2026 edge AI roadmaps include substantially better support for generative workloads as hardware efficiency keeps improving.

How does edge AI deployment compare to cloud-based AI in terms of cost?

Edge AI carries higher upfront hardware costs — that’s the honest answer. However, it eliminates ongoing cloud compute and data transfer fees that compound quickly at scale. For applications processing data continuously, like video analytics, edge deployment typically breaks even within 6–12 months. Therefore, the total cost of ownership frequently favors edge solutions once you’re past a certain volume threshold.
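You can run this comparison for your own workload with a few lines of arithmetic. The sketch below computes the first month in which cumulative cloud spend meets or exceeds cumulative edge spend. Every figure in it is a hypothetical placeholder rather than vendor pricing, and it ignores factors like engineering time and hardware refresh cycles.

```python
import math


def break_even_months(hardware_cost, edge_monthly_cost, cloud_monthly_cost):
    """First month where cumulative cloud spend meets or exceeds edge spend.

    Returns None if the edge option never catches up.
    """
    monthly_saving = cloud_monthly_cost - edge_monthly_cost
    if monthly_saving <= 0:
        return None
    return math.ceil(hardware_cost / monthly_saving)


# All figures are hypothetical placeholders, not vendor pricing.
months = break_even_months(
    hardware_cost=6000, edge_monthly_cost=100, cloud_monthly_cost=800
)
print(months)  # 9
```

With these example numbers the edge deployment pays for itself in month 9. If the cloud bill is not meaningfully higher than the edge running cost, the function returns `None` and the upfront hardware spend is never recovered.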

What industries benefit most from Nvidia’s edge AI partnerships?

Manufacturing, healthcare, agriculture, retail, and transportation see the strongest real-world benefits right now. These industries share common needs: real-time processing, data privacy, and reliable operation in connectivity-limited environments. Importantly, Nvidia’s partner ecosystem includes deep specialists in each of these verticals — which means you’re not starting from zero when you begin evaluating solutions.